Screening tools in hiring: Transform your recruitment efficiency

Screening tools promise to take the chaos out of tech hiring, but a common misconception sets many teams up for frustration before they even begin. The assumption is that these tools run on autopilot, sorting great candidates from poor ones without much human input. Research tells a different story: applicant tracking systems (ATS) rarely auto-reject applicants, and recruiters remain central to most decisions. Understanding this reality is what separates HR teams that get results from those that get overwhelmed. Here is how to use screening tools where they actually deliver value.

Table of Contents

- What are screening tools in recruitment?

- Common types, methods, and effectiveness

- Building a robust screening pipeline: Best practices for tech hiring

- Pitfalls, fairness, and future-proofing your approach

- A new mindset for screening: Why thoughtful design trumps technology alone

- See how Testask can strengthen your recruitment process

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Screening tools are not fully automatic | Most decisions still require human judgment combined with well-designed tools. |
| Structured methods improve results | Using standardized interviews and rubrics makes hiring fairer and more predictive. |
| AI impacts efficiency and fairness | AI can outperform recruiters in predicting success but must be monitored for demographic impact. |
| Continuous benchmarking is essential | Tracking both efficiency and adverse impact keeps hiring practices both effective and ethical. |

What are screening tools in recruitment?

To understand why screening tools are often misunderstood, let’s clarify what they really are and where they operate within a tech company’s recruitment funnel.

Screening tools are software platforms, methods, or structured processes that filter job applicants as they move through different stages of your hiring pipeline. They are not a single system that handles everything. They are a collection of gates, each designed to answer a specific question about a candidate at a specific point in time.

In a tech hiring context, you might encounter screening tools at four key stages:

| Stage | Tool type | Primary function |
|---|---|---|
| Intake | Applicant tracking system (ATS) | Parse resumes, organize applications |
| Eligibility | Knockout questions, filter logic | Remove candidates who lack required qualifications |
| Assessment | Skill tests, AI evaluations | Measure technical or role-specific abilities |
| Final review | Structured interviews, scorecards | Inform final hiring decisions with consistent criteria |

Research confirms that screening happens across multiple gates, with recruiters making the majority of decisions manually rather than delegating to algorithms. This is an important distinction because it means the quality of your screening process depends heavily on the criteria, rubrics, and judgment your team brings to each gate.

Each tool in your stack serves a different purpose. Your ATS organizes and tracks. Your assessment platform measures skill. Your interview framework evaluates fit and potential. Knowing where each belongs helps you get the right information at the right time. You can find a detailed breakdown of candidate screening steps in our process guide, which walks through how to sequence these tools for maximum impact.

The core functions that screening tools perform include:

- Resume parsing: Extracting structured data from unstructured documents to make comparison easier

- Eligibility filtering: Applying hard criteria like work authorization, minimum years of experience, or required certifications

- Skill assessment: Evaluating candidates against predefined benchmarks for technical or functional roles

- Ranking and scoring: Generating relative scores to help prioritize which candidates to advance

- Interview structuring: Providing consistent question sets and scoring guides to reduce interviewer variability

None of these functions eliminate the need for recruiter judgment. They enhance it.

Common types, methods, and effectiveness

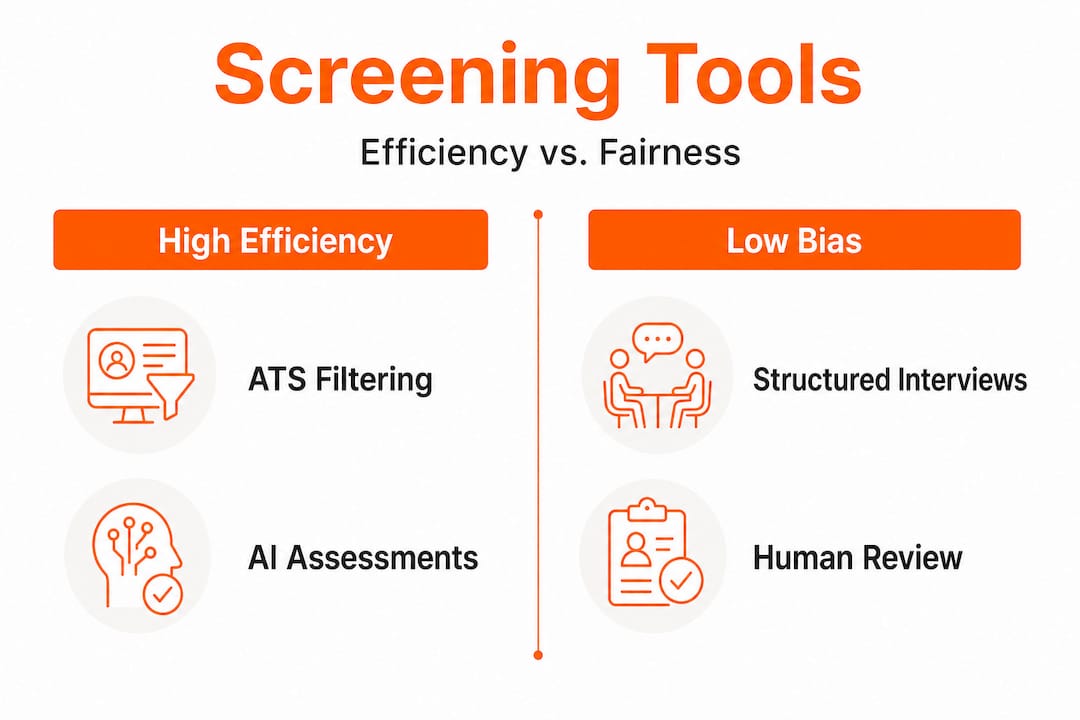

With a foundation in what screening tools are, let’s compare the most widely used methods and evaluate how they impact efficiency and diversity.

Not all screening methods are equally effective, and the evidence is clear on which approaches deliver the best results for both prediction accuracy and candidate fairness. Here is how the major categories stack up.

Applicant tracking systems

ATS platforms remain the backbone of most tech recruiting operations. They excel at organization, tracking application status, and surfacing candidates who meet basic criteria. However, their power to automatically reject candidates is widely overstated. Most ATS tools surface candidates who meet filters rather than discard those who do not, leaving the actual rejection decision to a recruiter. This means your ATS is only as good as the filter criteria you build into it.
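To make the "surface rather than discard" behavior concrete, here is a minimal Python sketch of an eligibility gate that routes non-matching applications to a recruiter queue instead of rejecting them. The `Application` fields, the `triage` function, and the certification name are hypothetical and do not reflect how any particular ATS works.

```python
from dataclasses import dataclass, field


@dataclass
class Application:
    # Hypothetical fields; a real ATS export will look different.
    name: str
    has_work_authorization: bool
    certifications: set = field(default_factory=set)


def triage(applications, required_cert):
    """Split applications into a surfaced list and a recruiter review queue.

    Nothing is rejected here. Applications that miss a filter are queued
    for a recruiter together with the reasons they were not surfaced.
    """
    surfaced, needs_review = [], []
    for app in applications:
        reasons = []
        if not app.has_work_authorization:
            reasons.append("work authorization not confirmed")
        if required_cert not in app.certifications:
            reasons.append(f"missing certification: {required_cert}")
        if reasons:
            needs_review.append((app, reasons))
        else:
            surfaced.append(app)
    return surfaced, needs_review


# Made-up applications for illustration only.
apps = [
    Application("A", True, {"CKA"}),
    Application("B", True),
    Application("C", False, {"CKA"}),
]
surfaced, needs_review = triage(apps, required_cert="CKA")
```

Recording the reason alongside each queued application keeps the final call with a recruiter and leaves a trail you can audit later.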

Structured interviews

The evidence for structured interviewing is compelling. Structured interviewing improves predictive validity while also reducing adverse impact on underrepresented candidate groups. The mechanics are straightforward: every candidate answers the same questions, responses are scored against a consistent rubric, and interviewers calibrate before and after each cycle.

Structured interviews outperform unstructured ones on virtually every metric that matters. They reduce the influence of irrelevant factors like likability or personal affinity, and they make it far easier to compare candidates objectively.
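The scoring mechanics can be sketched in a few lines of Python. This is an illustration under assumptions, not a validated scoring model: the competency names, the 1 to 4 scale, and the `max_spread` calibration threshold are all hypothetical.

```python
from statistics import mean

# Hypothetical rubric: every interviewer scores each competency from 1 to 4.
RUBRIC = ("problem_solving", "code_quality", "communication")


def score_candidate(scorecards, max_spread=1):
    """Average each competency across interviewers and flag disagreements.

    scorecards: list of dicts mapping competency name to a 1-4 score.
    A competency is flagged when interviewer scores differ by more than
    max_spread, which suggests the panel should recalibrate on it.
    """
    averages, flagged = {}, []
    for competency in RUBRIC:
        scores = [card[competency] for card in scorecards]
        averages[competency] = mean(scores)
        if max(scores) - min(scores) > max_spread:
            flagged.append(competency)
    return averages, flagged


# Two made-up scorecards for the same candidate.
cards = [
    {"problem_solving": 3, "code_quality": 4, "communication": 2},
    {"problem_solving": 3, "code_quality": 2, "communication": 3},
]
averages, flagged = score_candidate(cards)
```

Flagged competencies are a natural agenda for the calibration conversation after each cycle.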

AI-powered assessments

AI screening tools have moved quickly from novelty to mainstream. When configured correctly, they can outperform human evaluators on prediction accuracy for role performance. However, AI and asynchronous interviews produce significant demographic effects, meaning the choice of tool and how it is administered can change who completes applications and who advances through the funnel.

This is not a reason to avoid AI assessments. It is a reason to use them carefully, measure their impact, and build in human review checkpoints. See our deeper analysis of AI screening methods and pitfalls for a thorough look at where AI tools add value and where they require oversight.

Asynchronous video interviews

Asynchronous interviews, where candidates record responses to predetermined questions without a live interviewer present, offer convenience but come with documented tradeoffs. Research shows measurable drops in application completion rates, and these drops are not evenly distributed across candidate demographics. Before deploying asynchronous video as a screening gate, consider whether the efficiency gain is worth the potential loss of qualified candidates.

“Empirical evidence shows that asynchronous interviews and AI have large effects on who completes applications and can change outcomes across demographic groups.”

Here is a quick comparison to guide your method selection:

| Method | Efficiency | Predictive accuracy | Fairness risk |
|---|---|---|---|
| ATS filtering | High | Moderate | Low to moderate |
| Structured interviews | Moderate | High | Low |
| AI assessments | High | High | Moderate (requires monitoring) |
| Asynchronous video | High | Moderate | Moderate to high |
| Unstructured interviews | Low | Low | High |

Pro Tip: If you are adding AI assessments to your pipeline, run a parallel test for at least one hiring cycle. Compare demographic completion and advancement rates between the AI-assisted process and your previous approach. Small sample sizes make this harder, but even directional data helps you catch unintended effects early.
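A parallel test like this can be summarized with a short script. The sketch below assumes you can export, for each process, how many candidates in each group started and completed the application; the group labels and counts are made up for illustration.

```python
def completion_rates(counts):
    """counts maps group -> (started, completed); returns group -> rate."""
    return {
        group: completed / started
        for group, (started, completed) in counts.items()
        if started > 0
    }


def compare_processes(baseline, ai_assisted):
    """Return the change in completion rate per group (AI minus baseline)."""
    base = completion_rates(baseline)
    ai = completion_rates(ai_assisted)
    return {group: ai[group] - base[group] for group in base if group in ai}


# Made-up counts: (started, completed) per group in each process.
baseline = {"group_a": (100, 80), "group_b": (100, 80)}
ai_assisted = {"group_a": (100, 75), "group_b": (100, 60)}

deltas = compare_processes(baseline, ai_assisted)
for group, delta in sorted(deltas.items()):
    print(f"{group}: {delta:+.1%} change in completion rate")
```

With small samples, treat the output as directional: a large gap between groups is a reason to look closer, not proof of an effect.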

For HR teams exploring how to integrate these tools without introducing new risks, our expert AI screening guide walks through configuration best practices in detail. You can also review our proven candidate evaluation steps to see how these methods fit together into a cohesive process.

Building a robust screening pipeline: Best practices for tech hiring

The effectiveness of each tool depends on how you sequence it and integrate it with the others.

A well-designed screening pipeline is not a collection of independent tools. It is a coordinated sequence where each gate is aligned to the role, scored consistently, and connected to the next stage with clear handoff criteria. Here is how to build that system:

- Define role-aligned criteria before you open the requisition. Every gate in your pipeline should filter for something specific and documented. Before a single application arrives, your team should agree on the criteria for each stage: what passes, what doesn’t, and why. This prevents criteria from shifting mid-process based on who is reviewing.

- Build eligibility gates with precision. Hard filters should be genuinely hard. If a role requires a specific certification, make it a knockout question. If experience range is a preference rather than a requirement, do not use it as an automatic filter. Overly aggressive eligibility gates are one of the leading sources of false negatives in tech hiring, where strong candidates get screened out for irrelevant reasons.

- Standardize scoring rubrics at every stage. Treating hiring as a scored pipeline, with a rubric at each gate, is what separates consistent decision-making from gut-feel hiring. Rubrics do not need to be complex, but they need to be shared, calibrated, and used.

- Balance automation with human review checkpoints. Automate what is repetitive and low-stakes, like scheduling, reminder emails, and basic eligibility checks. Keep humans in the loop for any decision that involves nuanced judgment, context, or potential fairness implications.

- Measure both efficiency and fairness metrics continuously. Track time-to-review, candidate drop-off rates by stage, and offer acceptance rates. Alongside these, monitor demographic representation at each gate. If a specific stage shows a disproportionate drop for any group, investigate before scaling.

- Calibrate your interviewers regularly. Even structured interviews degrade over time if interviewers stop calibrating. Run brief calibration sessions at the start of each hiring cycle and revisit rubric alignment after every few hiring decisions.
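As one way to track drop-off by stage, the sketch below computes the share of candidates lost between consecutive gates. The stage names follow the four stages described earlier; the counts and the `stage_drop_off` helper are hypothetical.

```python
def stage_drop_off(funnel):
    """Compute the drop-off rate between consecutive stages.

    funnel: ordered list of (stage_name, candidates_entering_stage).
    Returns a list of (from_stage, to_stage, drop_off_rate) tuples.
    """
    results = []
    for (stage, entered), (next_stage, advanced) in zip(funnel, funnel[1:]):
        rate = 1 - advanced / entered if entered else None
        results.append((stage, next_stage, rate))
    return results


# Made-up counts of candidates entering each stage.
funnel = [
    ("intake", 400),
    ("eligibility", 200),
    ("assessment", 50),
    ("final_review", 10),
]

drop_offs = stage_drop_off(funnel)
for from_stage, to_stage, rate in drop_offs:
    print(f"{from_stage} -> {to_stage}: {rate:.0%} drop-off")
```

Running the same calculation separately for each demographic group shows whether a particular gate loses one group at a noticeably higher rate than others.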

Our AI decision workflow tips explain how to integrate AI tools into this structure without losing human oversight. For a full operational checklist, our recruitment checklist best practices guide covers every stage from intake to offer.

Pro Tip: Assign a “gate owner” for each stage in your pipeline. This person is responsible for the criteria, the scoring guide, and the quality of decisions at that stage. When something breaks, you know exactly where to look and who to involve.

Our assessment best practices resource covers how to configure skill tests and evaluations that align tightly with role requirements rather than generic competency models.

Pitfalls, fairness, and future-proofing your approach

No tool or method is perfect. Several well-intentioned practices can have unexpected downsides. Let’s explore what to watch for and how to build resilience.

The most common mistake in tech hiring is not choosing the wrong tool. It is over-trusting whatever tool is currently in place. When teams treat automated screening as infallible, bias hides in the process and top candidates get lost in the noise.

Here are the most critical pitfalls to watch for:

- Automation bias: Teams start deferring to algorithm outputs without questioning the logic. If your ATS ranks a candidate low, do your recruiters ever override that ranking? If not, you may have a problem.

- Criteria drift: Over time, filters and rubrics get loosened or tightened informally without documentation. This makes it nearly impossible to audit decisions or identify where fairness issues originated.

- Demographic blind spots: As research confirms, AI and asynchronous tools can reduce continuation rates and affect different demographic groups unevenly. Without explicit tracking, these effects go undetected until they become a legal or reputational issue.

- Candidate experience neglect: Efficiency gains on the recruiter side can translate to frustration on the candidate side. Long automated sequences with no human touchpoints erode your employer brand.

- Tool stagnation: The hiring landscape shifts constantly. A screening process that worked well two years ago may now filter out candidates who have built skills through non-traditional paths, bootcamps, open-source contributions, or gig work.

“AI screening tools can reduce continuation rates and affect different demographics unevenly, making ongoing measurement a necessity, not an option.”

To address these risks, build the following practices into your regular operations:

- Review pipeline data quarterly, not just when a hire falls through

- Solicit candidate feedback at multiple points in the process

- Run adverse impact analysis by gate, role type, and tool

- Update your criteria and rubrics annually to reflect what the role actually requires today
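For the adverse impact analysis, one widely used heuristic is the four-fifths rule from the US EEOC Uniform Guidelines: a group whose selection rate falls below 80 percent of the highest group's rate warrants a closer look. The article does not prescribe this method, so treat the sketch below as an assumption about how such a check could be run at a single gate. The group labels and counts are made up.

```python
def selection_rates(gate_counts):
    """gate_counts maps group -> (entered, advanced); returns group -> rate."""
    return {
        group: advanced / entered
        for group, (entered, advanced) in gate_counts.items()
        if entered > 0
    }


def adverse_impact_ratios(gate_counts, threshold=0.8):
    """Compare each group's selection rate with the highest group's rate.

    Returns group -> (ratio, flagged). A ratio below `threshold` is a
    prompt to investigate the gate, not a legal conclusion.
    """
    rates = selection_rates(gate_counts)
    top = max(rates.values())
    if top == 0:
        # Nobody advanced, so there is no reference rate to compare against.
        return {group: (None, False) for group in rates}
    return {
        group: (rate / top, rate / top < threshold)
        for group, rate in rates.items()
    }


# Made-up counts at one gate: (entered, advanced) per group.
assessment_gate = {"group_a": (200, 100), "group_b": (150, 45)}
ratios = adverse_impact_ratios(assessment_gate)
```

Running the check per gate, role type, and tool, as the list above suggests, helps localize where a disparity enters the pipeline.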

Our guide on AI recruitment challenges covers specific remedies for the most common pitfalls teams encounter when scaling AI-assisted screening.

A new mindset for screening: Why thoughtful design trumps technology alone

Here is a view that may be less popular but is worth stating directly: the technology you choose matters far less than the process you design around it.

HR teams in tech often invest significant resources in evaluating and switching tools, assuming that the right platform will fix their hiring problems. But most hiring failures are not tool failures. They are design failures. Criteria that were never clearly defined. Gates that were never properly calibrated. Interviewers who were never trained to use the scoring guide consistently.

The organizations that consistently hire well are not necessarily using the most advanced tools. They are using whatever tools they have with discipline and intentionality. They treat screening efficiency and quality as linked outcomes rather than tradeoffs.

What this means in practice is that when a new screening technology enters the market, the first question should not be “does this automate more of our process?” It should be “does this help us make better decisions, and can we measure whether it does?” Those are very different questions.

There is also a values dimension here that often gets overlooked. Candidates are not just inputs to a process. They are people making significant career decisions. A screening process that is opaque, impersonal, or inconsistent damages trust, regardless of how efficient it is internally. The best screening processes are designed with the candidate experience explicitly in mind, not as an afterthought.

The future of tech hiring belongs to teams that combine rigorous process design with smart tool selection, not to teams that rely on one at the expense of the other.

See how Testask can strengthen your recruitment process

If you are thinking about implementing or revamping your screening process, there is a practical next step worth exploring.

Testask is an AI-powered recruitment assessment platform built specifically for tech companies that want to screen candidates faster, more fairly, and with better signal. You can generate tailored test tasks aligned to specific roles, evaluate candidate submissions with AI-assisted analysis, and collaborate with your hiring team on structured reviews. The platform supports every stage of the screening pipeline, from eligibility to final assessment, and gives you the data you need to make confident, defensible hiring decisions. Visit Testask to explore the platform or schedule a demo with the team.

Frequently asked questions

Do screening tools automatically reject most candidates?

Most applicant tracking systems do not auto-reject candidates; recruiters typically make these decisions manually based on predefined eligibility criteria rather than fully automated logic.

Which screening method best reduces bias and improves predictions?

Structured interviewing has the strongest evidence base for both raising predictive validity and reducing adverse impact compared to unstructured interview approaches.

How does AI screening affect candidate pools?

AI screening can increase prediction accuracy, but empirical research shows it can also affect demographic representation in ways that differ meaningfully from human evaluator decisions, making ongoing monitoring essential.

Does using asynchronous interviews lower application completion?

Yes, asynchronous interviews decrease applicant continuation by more than 50 percent in some studies, with the most pronounced effects observed among women applicants.
