Streamline candidate evaluation: proven steps for better hiring

A bad hire costs more than most teams realize. Beyond the recruiting fees and onboarding time, there’s disrupted team dynamics, lost productivity, and the very real risk of starting the whole process over again. Many HR teams and hiring managers are still running candidate evaluation on instinct and inconsistent practices, which makes great outcomes more luck than strategy. This guide walks you through exactly how to build a structured, data-driven candidate evaluation process that’s faster, fairer, and far more reliable. You’ll get practical steps, a clear look at where AI fits in, and how to keep the candidate experience strong throughout.

Table of Contents

- Why your candidate evaluation process matters

- Essential tools and prerequisites for effective evaluation

- Step-by-step guide: Structuring your candidate evaluation process

- Measuring and refining your evaluation results

- Our take: The real art is balance, not automation

- Streamline your hiring with smarter tools

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Structure drives fairness | Standardized interviews and scoring rubrics reduce bias and consistently identify the best candidates. |
| Balance technology and people | AI-powered assessment tools boost accuracy but require human oversight for best candidate experiences. |
| Track the right metrics | Monitor candidate satisfaction, quality of hire, and process speed for continuous hiring improvements. |
| Continuous improvement matters | Regularly update your process using feedback and results data to attract and select top performers. |

Why your candidate evaluation process matters

When evaluation is unstructured, bias creeps in fast. Interviewers default to gut feelings, feedback varies wildly between reviewers, and the people making final decisions often lack consistent data to act on. The result? Hires that look good on paper but don’t perform, and candidates who walk away frustrated regardless of the outcome.

Here are common signs your current process needs attention:

- Inconsistent feedback across interviewers for the same role

- High early turnover among recently hired employees

- Candidate confusion about next steps or timelines

- Slow decision-making that causes top candidates to accept other offers

- Low offer acceptance rates despite strong applicant pools

- Gut-feel hiring with no scoring criteria or rubrics

Structured interviews address many of these issues directly. When every candidate answers the same questions and gets scored against the same criteria, structured interviews reduce bias and improve how accurately you can predict job performance. That’s not a small improvement. That’s the difference between a repeatable hiring engine and a revolving door.

Data-driven approaches consistently outperform intuition-based ones. When you define what “good” looks like before you start screening, you stop retrofitting criteria to your favorite candidate.

Long, unclear, or poorly communicated evaluation processes don’t just frustrate candidates. They actively drive them away. Research shows that up to 89% of candidate dropout can be traced back to poor evaluation experiences. If your process is slow or confusing, you’re likely losing strong applicants before you ever get to assess them.

The fix isn’t just adding more steps. It’s designing a smarter process from the start. And with a modern AI recruitment platform, you can build that foundation without reinventing your entire workflow.

Now that the challenges of ad hoc evaluation are clear, let’s discover what you need to build a better process.

Essential tools and prerequisites for effective evaluation

Before you run a single interview, you need the right infrastructure. That means the right tools, clear criteria, and internal alignment on what you’re actually looking for. SHRM recommends structured approaches, including evaluation forms, scoring rubrics, and metrics tracking, as baseline requirements for any effective hiring process.

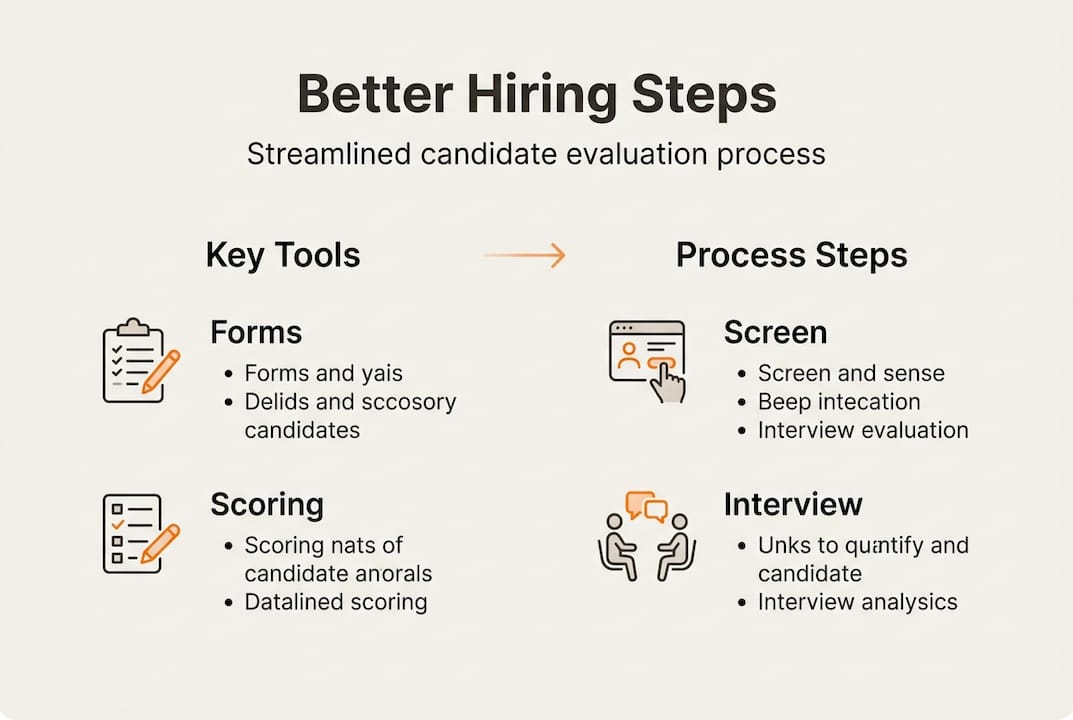

Here’s a quick overview of the core tools you should have in place:

| Tool | Purpose | Format |
|---|---|---|
| Evaluation forms | Standardize feedback collection | Digital or paper |
| Scoring rubrics | Reduce bias, align reviewers | Spreadsheet or platform |
| Interview guides | Ensure consistent questioning | Document or template |
| AI assessment platform | Automate screening, score submissions | SaaS tool |
| Candidate tracking | Monitor pipeline and status | ATS or CRM |

Defining job requirements upfront is just as important as the tools themselves. That means identifying the core competencies for the role, separating must-have skills from nice-to-have ones, and creating criteria that reviewers can actually apply consistently.

Here’s how to prepare before your first screening call:

- Align with hiring managers on role expectations and success metrics

- Clarify must-have versus nice-to-have skills in writing

- Create a structured process outline with defined stages and timelines

- Assign roles for each stage so reviewers know their responsibilities

- Build or source scoring rubrics tied to your specific competencies

Criteria set before you see any resumes are far more objective than criteria shaped by the first few candidates you review. Getting this step right protects you from anchoring bias and makes your final decisions defensible.

The AI recruitment features available in modern platforms can help you generate role-specific assessments and evaluation rubrics quickly, reducing the setup time that often causes teams to skip this step entirely.

Pro Tip: Use digital forms and automated tracking from day one. Manual spreadsheets and email threads introduce errors and make it nearly impossible to compare candidates fairly at scale.

With the right tools and groundwork, you’re ready to implement a step-by-step evaluation process.

Step-by-step guide: Structuring your candidate evaluation process

A well-structured process doesn’t have to be complicated. It needs to be consistent. Here’s a practical workflow you can adapt to your organization:

1. Resume screening: Filter applications against your defined must-have criteria. Use structured criteria, not impressions.

2. Initial screening call: A short, scripted conversation to confirm basic fit, availability, and interest level.

3. Skills assessment: Use task-based or AI-assisted tools to evaluate role-specific competencies objectively.

4. Structured interview: Conduct panel or one-on-one interviews using standardized questions and scoring rubrics.

5. Team review: Aggregate reviewer scores and compare notes in a calibration session.

6. Candidate ranking and scoring: Use your rubric data to rank finalists before making any verbal offers.

7. Final selection and offer: Make a documented, data-backed decision.

Every step should produce a record. No verbal-only feedback. No off-the-cuff rankings.
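
If you want to see how rubric data can turn into a ranking, here is a minimal Python sketch. It assumes a hypothetical three-competency rubric with made-up weights and 1 to 5 reviewer ratings; the competency names, weights, and candidates are illustrative only, not a recommended standard.

```python
from statistics import mean

# Hypothetical rubric: competency -> weight. Weights are assumptions and sum to 1.0.
RUBRIC_WEIGHTS = {
    "technical_skill": 0.5,
    "communication": 0.3,
    "problem_solving": 0.2,
}


def weighted_score(ratings: dict[str, float]) -> float:
    """Combine one reviewer's 1-5 ratings into a single weighted score."""
    return sum(weight * ratings[name] for name, weight in RUBRIC_WEIGHTS.items())


def rank_candidates(reviews: dict[str, list[dict[str, float]]]) -> list[tuple[str, float]]:
    """Average each candidate's weighted scores across reviewers, highest first."""
    averaged = {
        candidate: round(mean(weighted_score(r) for r in reviewer_ratings), 2)
        for candidate, reviewer_ratings in reviews.items()
    }
    return sorted(averaged.items(), key=lambda item: item[1], reverse=True)


# Illustrative data: two reviewers scored each candidate on the same rubric.
reviews = {
    "Candidate A": [
        {"technical_skill": 4, "communication": 3, "problem_solving": 5},
        {"technical_skill": 5, "communication": 3, "problem_solving": 4},
    ],
    "Candidate B": [
        {"technical_skill": 3, "communication": 5, "problem_solving": 3},
        {"technical_skill": 3, "communication": 4, "problem_solving": 4},
    ],
}

ranking = rank_candidates(reviews)
for candidate, score in ranking:
    print(f"{candidate}: {score}")
```

Agreeing on the weights before anyone sees a resume matters more than the exact numbers. The ranking is an input to the calibration session, not a replacement for it.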

Here’s how traditional methods compare to AI-based candidate assessment:

| Factor | Traditional method | AI-assisted method |
|---|---|---|
| Speed | Slow, manual review | Fast, automated scoring |
| Consistency | Varies by reviewer | Standardized outputs |
| Bias risk | High without structure | Reduced with calibration |
| Candidate experience | Can feel impersonal | Depends on implementation |
| Scalability | Limited | High |

The data is encouraging but also nuanced. AI-assisted assessments predict employment success effectively, yet asynchronous or overly automated interview formats can reduce applicant continuation rates if candidates feel they’re interacting with a system rather than a team.

And when it comes to structured interviews, scoring rubrics aren’t optional. They’re what separates a defensible decision from a disputed one.

Common mistakes to avoid:

- Unclear or undefined evaluation criteria at the start

- Too many stages that slow the process and frustrate candidates

- Inconsistent feedback delivery with no timeline communicated

- Using different questions for different candidates in the same role

Pro Tip: Standardize your interview questions across all candidates for a given role and score responses immediately after each session, not hours later when memory fades.

Once the framework is in place, it’s crucial to monitor and refine the process for continuous improvement.

Measuring and refining your evaluation results

Building a strong process is the first step. Keeping it strong requires ongoing measurement. Without data, you can’t tell whether your changes are making things better or worse.

The top metrics to track include candidate experience scores, quality of hire, time-to-fill, and interviewer consistency ratings. Each one tells you something different about where your process is working and where it’s breaking down.

Here’s how to collect and analyze them effectively:

- Send candidate experience surveys within 48 hours of each stage completion

- Track quality of hire through 30-, 60-, and 90-day performance reviews for new hires

- Measure time-to-fill from job open date to accepted offer

- Compare interviewer ratings across reviewers for the same candidates to spot inconsistency

- Review offer acceptance rates quarterly to assess process perception

- Analyze early turnover data to identify patterns linked to specific roles or screening steps
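
Two of these metrics are simple enough to compute in a few lines. The Python sketch below is one possible approach, not a standard: it measures time-to-fill in calendar days and uses the standard deviation of reviewer scores as a rough consistency signal. The function names, sample dates, scores, and the 1.0 threshold are all assumptions for illustration.

```python
from datetime import date
from statistics import pstdev


def time_to_fill(job_opened: date, offer_accepted: date) -> int:
    """Calendar days from the job open date to the accepted offer."""
    return (offer_accepted - job_opened).days


def interviewer_spread(scores_by_candidate: dict[str, list[float]]) -> dict[str, float]:
    """Standard deviation of reviewer scores per candidate.

    A larger value means reviewers disagreed more about that candidate.
    """
    return {
        candidate: round(pstdev(scores), 2)
        for candidate, scores in scores_by_candidate.items()
    }


def flag_inconsistent(spread: dict[str, float], threshold: float = 1.0) -> list[str]:
    """Candidates whose score spread exceeds the (assumed) threshold."""
    return [candidate for candidate, value in spread.items() if value > threshold]


# Illustrative data only.
days = time_to_fill(date(2024, 3, 1), date(2024, 4, 15))
spread = interviewer_spread({
    "Candidate A": [4, 4, 5],
    "Candidate B": [2, 5, 3],
})
needs_calibration = flag_inconsistent(spread)

print(days)
print(spread)
print(needs_calibration)
```

A flagged candidate doesn’t mean anyone scored wrong. It means the reviewers should compare notes before the scores feed into a ranking.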

Organizations with structured, metrics-driven evaluation processes consistently report stronger retention and higher candidate satisfaction compared to those using ad hoc methods. That’s not surprising. When candidates feel the process is fair and well-organized, they’re more likely to accept offers and stay engaged after joining.

Refining your process means acting on the data you collect. If candidate experience scores drop at a specific stage, investigate why. If quality of hire is low for a particular role, revisit your rubric criteria. If time-to-fill keeps growing, look for steps that can be shortened or automated.

Iteration is not a sign of failure. It’s how strong hiring teams operate. The best processes aren’t built once and frozen. They evolve with your organization’s needs.

Having established a feedback loop, let’s look at what most guides miss about balancing technology and the human touch.

Our take: The real art is balance, not automation

There’s a real temptation to solve hiring problems by adding more technology. Better screening software, more automated assessments, AI-generated scorecards. And yes, these tools deliver genuine value when implemented thoughtfully.

But the organizations that build truly world-class hiring teams aren’t the ones who automate the most. They’re the ones who know exactly where human judgment is irreplaceable and protect that space deliberately.

Over-automation creates a different set of problems. Candidates who feel processed rather than considered are less likely to accept offers, even when they’re a great fit. Cultural alignment and soft skills, things like communication style, adaptability, and values fit, are still best assessed through real human interaction.

The strongest approach balances AI and human judgment deliberately. Use AI to remove the noise, standardize scoring, and surface the best candidates faster. Then use structured human interaction to evaluate what the data can’t fully capture.

Leaders who treat their evaluation process as a living system, adapting it based on outcomes and candidate feedback rather than just adopting the latest tool, are the ones building teams that last. That’s the differentiator worth focusing on.

Streamline your hiring with smarter tools

You now have a complete framework for building a candidate evaluation process that’s fair, consistent, and built for results. The next step is putting it into practice without spending weeks on setup.

testask gives HR teams and hiring managers the tools to do exactly that. Generate tailored test tasks, automate candidate scoring, collaborate on reviews, and track every stage of your pipeline in one place. From structured screening to AI-assisted analysis, testask reduces the friction that slows most teams down. If you’re ready to evaluate candidates faster and hire with more confidence, explore what testask can do for your team.

Frequently asked questions

What is the most effective method for evaluating candidates?

Structured interviews reduce bias and improve predictive validity, making them the most reliable evaluation method when paired with consistent scoring rubrics and defined criteria.

How can AI improve the candidate evaluation process?

AI-assisted assessments predict employment success and reduce human bias, but they work best when combined with structured human review rather than replacing it entirely.

What metrics should we track to assess our hiring process?

Track candidate experience scores, quality of hire, and time-to-fill as your core indicators, then layer in interviewer consistency and early turnover data for a fuller picture.

How can we reduce bias in our candidate evaluation?

Standardized scoring rubrics, structured interviews with consistent questions, and diverse review panels are the most effective combination for minimizing unconscious bias in hiring decisions.