Solving recruitment challenges with AI: Evidence-based strategies

Most HR teams adopted AI recruitment tools expecting faster hires, lower costs, and better candidates. Instead, many found themselves managing more complexity, not less. With 88% of HR leaders reporting no significant business value from their AI tools, the gap between expectation and reality is stark. This guide breaks down exactly why that gap exists, what the hidden risks are, and what evidence-backed strategies your team can start using right now to turn AI into a genuine hiring advantage.

Table of Contents

- Facing common recruitment challenges today

- Why AI tools fail to deliver intended results

- Bias, compliance, and candidate-side AI: Hidden pitfalls

- What the most successful teams are doing differently

- Rethinking recruitment: Why blending AI and human expertise matters most

- Take the next step: Smarter assessments for better hires

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| AI tools face critical gaps | Nearly half of organizations report that their AI recruitment tools fail to deliver the expected value and efficiency. |
| Operational complexity undermines recruitment | Workflow misalignment, compliance risks, and algorithmic bias hinder successful hiring even with advanced technology. |
| Top performers prioritize human judgment | The most effective HR teams blend skills-first hiring, structured processes, and bias audits for faster, higher-quality results. |
| Candidate-side AI complicates matching | Widespread applicant use of AI produces homogenized resumes and enables assessment cheating, making screening harder for recruiters. |
| Strategic workflow redesign is essential | Blending technology with human best practices and regular audit cycles drives sustainable recruitment improvements. |

Facing common recruitment challenges today

Before diagnosing what AI is doing wrong, it helps to understand the full picture of what HR teams are actually dealing with. The challenges are not new, but many have worsened despite technology investment.

Here are the most critical obstacles your team is likely navigating:

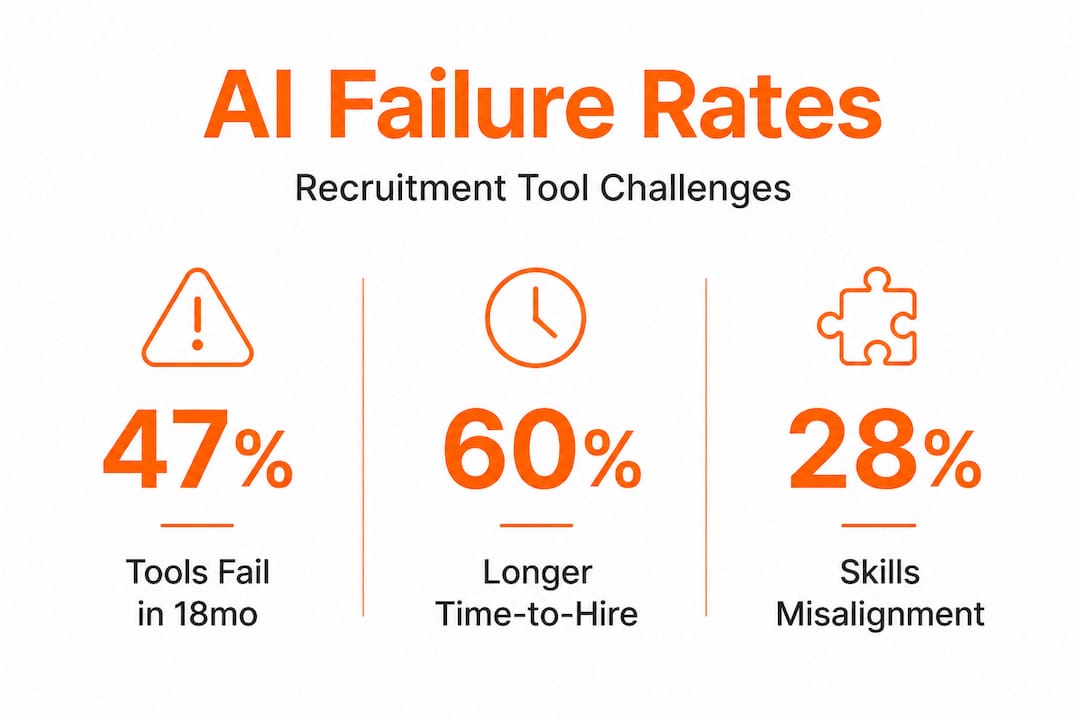

- Increased time-to-hire: 60% of organizations saw time-to-hire increase in 2025, not decrease.

- Skills misalignment: 28% of HR teams cite a persistent gap between candidate skills and job requirements.

- Lack of qualified applicants: Another 28% report too few qualified candidates applying in the first place.

- Scheduling bottlenecks: Interview coordination alone consumes 38% of recruiter time on average.

- Ghosting: 41% of organizations report candidate ghosting as a significant barrier to completing hires.

The financial picture is equally concerning. Cost-per-hire is up 113% since 2017, and 21% since 2022 alone. Average time-to-fill sits at roughly 1.5 months. Meanwhile, 69% of organizations report difficulty filling full-time roles, driven by low applicant volumes (51%), intense market competition (50%), and the ghosting problem mentioned above.

“Scheduling bottlenecks alone consume more than a third of a recruiter’s available time. That’s not an AI problem. That’s a workflow problem that AI should be solving.”

| Challenge | Reported figure |
|---|---|
| Increased time-to-hire | 60% of organizations |
| Skills misalignment | 28% of HR teams |
| Lack of qualified candidates | 28% of organizations |
| Scheduling bottlenecks | 38% of recruiter time |
| Ghosting | 41% of organizations |
| Difficulty filling full-time roles | 69% of organizations |

What makes this situation more frustrating is that most organizations have already invested in technology to address these exact issues. The problem isn’t a lack of tools. It’s that the tools aren’t working as expected. Starting with a solid recruitment checklist can help teams identify which workflow gaps technology is failing to address.

Why AI tools fail to deliver intended results

Here’s where the story gets uncomfortable. The failure rate for AI recruitment tools is not a fringe issue. It’s the norm.

47% of organizations report that their AI tools failed to meet expectations within 18 months of implementation. The root causes follow a consistent pattern: misunderstood expectations, poor integration with existing workflows, and misalignment between what the tool does and what the team actually needs.

A major contributor to this failure is what Gartner survey insights describe as a lack of work redesign. Organizations install AI tools on top of broken or outdated processes, expecting the technology to compensate. It rarely does.

| AI Tool Promise | Common Reality |
|---|---|
| Faster screening | More time reviewing AI errors and edge cases |
| Reduced bias | New algorithmic bias from flawed training data |
| Better candidate matching | Lower accuracy when candidate AI use inflates qualifications |
| Cost savings | Higher operational costs from tool management |
| Compliance automation | Compliance gaps from model drift and outdated rules |

Beyond expectation gaps, AI tools struggle with edge cases, peak application volumes, incomplete submissions, and regulatory compliance changes. Model drift, where an AI’s performance degrades over time as the real-world data distribution shifts, is especially problematic. A tool calibrated for one hiring cycle may perform very differently six months later with no obvious warning signs.
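One common way to catch drift before it shows up in hiring outcomes is to compare the distribution of a tool's scores between hiring cycles. The sketch below uses the population stability index (PSI), a standard drift statistic that this article does not itself prescribe. The bin edges, the sample scores, and the 0.1 / 0.25 thresholds in the comment are illustrative rule-of-thumb assumptions, not vendor guidance.

```python
import math
from bisect import bisect_right


def population_stability_index(baseline, current, edges):
    """PSI between two samples of model scores over shared bin edges.

    edges must be sorted; bin i covers edges[i] <= score < edges[i + 1],
    and the last bin also includes its upper edge.
    """
    n_bins = len(edges) - 1

    def bin_shares(scores):
        counts = [0] * n_bins
        for s in scores:
            if s < edges[0] or s > edges[-1]:
                continue  # ignore scores outside the binned range
            counts[min(bisect_right(edges, s) - 1, n_bins - 1)] += 1
        total = sum(counts)
        if total == 0:
            raise ValueError("no scores fall inside the bin edges")
        # A small floor avoids log(0) when a bin is empty in one sample.
        return [max(c / total, 1e-6) for c in counts]

    base, cur = bin_shares(baseline), bin_shares(current)
    return sum((c - b) * math.log(c / b) for b, c in zip(base, cur))


# Hypothetical screening scores from two hiring cycles, on a 0-1 scale.
edges = [0.0, 0.25, 0.5, 0.75, 1.0]
last_cycle = [0.1, 0.2, 0.3, 0.35, 0.6, 0.7, 0.8, 0.9]
this_cycle = [0.6, 0.7, 0.8, 0.85, 0.9, 0.9, 0.95, 0.99]

psi = population_stability_index(last_cycle, this_cycle, edges)
# Rule of thumb (assumption): < 0.1 stable, 0.1-0.25 watch, > 0.25 investigate.
print(f"PSI = {psi:.2f}")
```

A high PSI does not prove the tool is making worse decisions; it says the inputs or scores no longer look like the cycle the tool was calibrated on, which is the cue to re-validate it.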

The result: operational complexity often increases rather than decreases after AI adoption.

Pro Tip: Before adopting any AI recruitment tool, map your existing workflow step by step and identify the two or three highest-friction points. Deploy AI specifically to those points first, not across the entire pipeline. This targeted approach is far more likely to generate measurable value.
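To make the mapping step concrete, here is a minimal sketch that ranks workflow stages by their share of total recruiter hours and returns the top few. The stage names and hour counts are hypothetical; in practice they would come from time tracking or applicant tracking system timestamps.

```python
def highest_friction_stages(hours_by_stage, top_n=3):
    """Return (stage, share of total hours) for the top_n most time-consuming stages."""
    total = sum(hours_by_stage.values())
    ranked = sorted(hours_by_stage.items(), key=lambda item: item[1], reverse=True)
    return [(stage, hours / total) for stage, hours in ranked[:top_n]]


# Hypothetical recruiter hours per stage over one month.
hours = {
    "sourcing": 10,
    "resume_screening": 20,
    "interview_scheduling": 38,
    "interviewing": 22,
    "offer_management": 10,
}

for stage, share in highest_friction_stages(hours):
    print(f"{stage}: {share:.0%} of recruiter time")
```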

Following AI recruitment best practices during implementation is essential. You can also browse recruitment assessment resources to find practical frameworks for integrating new tools without compounding existing problems.

Bias, compliance, and candidate-side AI: Hidden pitfalls

Beyond operational failures, a separate category of risk is emerging that many HR teams are underprepared for: legal exposure and ethical complexity.

Understanding algorithmic bias

Bias in AI recruiting does not always look like obvious discrimination. It often shows up as proxy variables in your training data. An AI model trained on historical hiring decisions may learn that candidates from certain schools or zip codes, or with certain name patterns, were hired more often. The model then replicates those patterns, quietly, at scale.

Key sources of algorithmic bias include:

- Historical data bias: Models trained on past hires reflect past human bias

- Opaque scoring: When recruiters cannot explain why a candidate scored low, they cannot catch errors

- Proxy variables: Names, educational institutions, and location data can encode protected characteristics

- Lack of explainability: Black-box models make bias auditing extremely difficult
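To check whether a field such as zip code or school could be acting as a proxy, one option is to measure how strongly it is associated with a protected characteristic. This sketch uses Cramér's V, a standard association statistic for categorical data; it is our illustrative choice rather than a method named in the sources, and the field values and group labels are hypothetical. Running a check like this requires demographic data that has been collected and stored lawfully.

```python
import math
from collections import Counter


def cramers_v(feature_values, group_labels):
    """Cramér's V between a categorical feature and a protected group label.

    0 means no association; values near 1 mean the feature almost
    determines group membership, so it could act as a proxy.
    """
    n = len(feature_values)
    pairs = Counter(zip(feature_values, group_labels))
    rows = Counter(feature_values)
    cols = Counter(group_labels)
    chi2 = 0.0
    for r in rows:
        for c in cols:
            expected = rows[r] * cols[c] / n
            chi2 += (pairs[(r, c)] - expected) ** 2 / expected
    k = min(len(rows), len(cols)) - 1
    return math.sqrt(chi2 / (n * k)) if k > 0 else 0.0


# Hypothetical applicant records: zip code and a protected-group label.
zips = ["10001", "10001", "10002", "10002", "10003", "10003"]
groups = ["a", "a", "b", "b", "a", "b"]
print(round(cramers_v(zips, groups), 2))
```

A strong association does not mean the model uses the field unfairly, but it tells auditors which inputs deserve a closer look.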

Mitigating bias effectively requires a combination of adverse-impact testing with the EEOC four-fifths rule, structured interviews, and audits repeated at regular intervals. It is not a one-time fix.
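The four-fifths rule itself is simple arithmetic: divide each group's selection rate by the selection rate of the most-selected group, and treat a ratio below 0.8 as a sign of possible adverse impact. A minimal sketch, assuming you already have applicant and selection counts per group (the group names and counts here are made up):

```python
def impact_ratios(applicants, selected):
    """Each group's selection rate divided by the highest group's selection rate."""
    rates = {g: selected[g] / applicants[g] for g in applicants}
    top = max(rates.values())
    if top == 0:
        raise ValueError("no one was selected, so ratios are undefined")
    return {g: rate / top for g, rate in rates.items()}


def four_fifths_flags(applicants, selected, threshold=0.8):
    """Groups whose impact ratio falls below the four-fifths (80%) threshold."""
    return sorted(g for g, r in impact_ratios(applicants, selected).items() if r < threshold)


# Hypothetical counts from one screening stage.
applicants = {"group_a": 100, "group_b": 80}
selected = {"group_a": 50, "group_b": 28}

print(impact_ratios(applicants, selected))
print(four_fifths_flags(applicants, selected))
```

Here group_b's selection rate (35%) is 70% of group_a's (50%), so it is flagged. A flag is a signal to investigate, not a legal conclusion: the rule is a rule of thumb, and small samples can produce misleading ratios.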

Compliance mandates are tightening

NYC Local Law 144 requires annual independent bias audits for any automated employment decision tool (AEDT) used in hiring or promotion decisions in New York City. The EU AI Act classifies recruitment AI as high-risk, mandating transparency, documentation, and human oversight. Legal risks are rising with AI opacity and scale, and empirical adverse-impact testing, such as the four-fifths rule, is now considered essential for compliance.
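Many AI tools output a score rather than a yes/no decision. For that case, our reading of the New York City Department of Consumer and Worker Protection rules implementing Local Law 144 is that the audit's impact ratio can be based on a “scoring rate”: the share of each category scoring above the median of the full sample, divided by the rate of the highest-scoring category. The sketch below follows that reading as an assumption; it omits the intersectional categories and other requirements in the rule, so check the current rule text before relying on it. Category names and scores are hypothetical.

```python
from statistics import median


def scoring_rate_impact_ratios(scores_by_category):
    """Impact ratios from each category's share of scores above the pooled median."""
    pooled = [s for scores in scores_by_category.values() for s in scores]
    cutoff = median(pooled)
    # Scoring rate: fraction of a category strictly above the pooled median.
    rates = {
        cat: sum(s > cutoff for s in scores) / len(scores)
        for cat, scores in scores_by_category.items()
    }
    top = max(rates.values())
    if top == 0:
        raise ValueError("no category scored above the median")
    return {cat: rate / top for cat, rate in rates.items()}


# Hypothetical tool scores grouped by a demographic category.
scores = {
    "category_a": [90, 80, 70, 60],
    "category_b": [75, 65, 55, 50],
}
print(scoring_rate_impact_ratios(scores))
```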

The consequences of non-compliance are not theoretical. Regulatory fines, civil litigation, and reputational damage are all on the table for organizations that cannot demonstrate they have audited their AI tools for discriminatory impact.

“Compliance is not optional. HR teams that cannot explain how their AI scoring works are already behind on their legal obligations.”

Candidate-side AI is complicating everything

Here’s the challenge no one talks about enough: candidates are using AI too. Between 40% and 80% of applicants are now using AI tools to write resumes, tailor cover letters, and in some cases, complete online assessments. This creates several serious problems for your screening process.

Pro Tip: Design screening tasks that require work output reflecting real job conditions rather than theoretical answers. Tasks graded on process quality, not just final output, are far harder to fake with AI.

When resumes become homogenized by AI generation, your screening tools lose accuracy because they were calibrated on diverse, human-written content. When assessment cheating becomes widespread, your data on candidate capability becomes unreliable. Deepfake video risks in remote interviews add another layer of verification complexity. Smart teams address this by staying alert to common candidate screening pitfalls and by improving screening quality with task-based, real-work assessments that are harder to game.
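One way to grade on process quality rather than final output alone is a weighted rubric in which process criteria carry most of the weight. The criterion names, the 1-5 rating scale, and the weights below are hypothetical examples, not a standard:

```python
def weighted_task_score(ratings, weights):
    """Weighted average of 1-5 rubric ratings; every weighted criterion must be rated."""
    missing = set(weights) - set(ratings)
    if missing:
        raise ValueError(f"unrated criteria: {sorted(missing)}")
    total_weight = sum(weights.values())
    return sum(ratings[c] * w for c, w in weights.items()) / total_weight


# Hypothetical rubric: process criteria carry 60% of the weight, final output 40%.
weights = {"problem_framing": 3, "approach_and_tradeoffs": 3, "final_output": 4}
ratings = {"problem_framing": 4, "approach_and_tradeoffs": 5, "final_output": 3}

print(weighted_task_score(ratings, weights))
```

With this weighting, a polished final answer cannot carry a submission on its own: a candidate who cannot show how they framed the problem or weighed tradeoffs loses most of the available score.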

What the most successful teams are doing differently

Given all these challenges and risks, what separates organizations that hire well from those that stay stuck? The data points to a specific profile.

SHRM research identifies a group called “Talent Architects,” defined as the top 20% of recruiting teams by performance. Their results are measurable: Talent Architects fill roles in an average of 42 days compared to 47 days for typical teams. That 5-day advantage adds up quickly at scale: for an organization filling 200 roles per year, it amounts to 1,000 fewer cumulative days of open vacancies.
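The arithmetic behind the 1,000-day figure is linear: roles filled per year multiplied by the days saved per role. A small sketch, with the 47- and 42-day defaults taken from the SHRM figures above and the role count as an input you supply. Note that it counts calendar days roles sit open, not recruiter workdays.

```python
def cumulative_days_saved(roles_per_year, typical_days=47, top_days=42):
    """Vacancy-days saved per year if every role fills in top_days instead of typical_days."""
    return roles_per_year * (typical_days - top_days)


def relative_reduction(typical_days=47, top_days=42):
    """Fractional reduction in time-to-fill."""
    return (typical_days - top_days) / typical_days


print(cumulative_days_saved(200))      # 1000
print(f"{relative_reduction():.1%}")   # 10.6%
```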

What makes them different? According to SHRM quarterly best practices, it comes down to five consistent behaviors:

- Skills-first hiring: They define roles by the capabilities required to succeed, not by credential proxies like degree requirements or years of experience. This widens the candidate pool while improving fit.

- Strategic alignment: Talent Architects involve recruiters in workforce planning before roles open. They are not just filling vacancies; they are anticipating hiring needs based on business strategy.

- Human judgment at critical moments: They use AI to automate administrative tasks and surface candidates, but reserve human judgment for evaluation, culture fit assessment, and final selection decisions.

- Bias audits as standard practice: They do not audit when a problem emerges. They audit continuously, treating bias review as a routine quality control step, not an incident response.

- Workflow integration over tool adoption: Before deploying any new technology, they redesign the workflow it will support. The tool fits the process, not the other way around.

A practical framework you can apply now

If you are rebuilding your recruitment approach, consider this evidence-based sequence. Start by auditing your current workflow to find where time actually disappears. Then define role requirements in terms of skills and outcomes. Deploy AI specifically for resume parsing, scheduling, and initial screening triage. Use structured, work-sample assessments to evaluate real capability rather than relying on AI matching scores alone. Integrate bias audits quarterly, and assign a human reviewer to all final-stage decisions.
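The sequence above can also be written down as a checkable pipeline definition, so gaps show up before a tool is deployed. A minimal sketch, assuming a hypothetical `Stage` record and treating resume parsing, scheduling, and initial triage as the only stages AI runs on its own, per the framework; the stage names are illustrative:

```python
from dataclasses import dataclass

# Stages the framework hands to AI; everything else keeps a human in charge.
AI_STAGES = {"resume_parsing", "scheduling", "initial_triage"}


@dataclass
class Stage:
    name: str
    automated: bool       # AI runs this stage
    human_reviewer: bool  # a named person signs off on the stage's outcome


def check_pipeline(stages, audit_interval_months):
    """List ways a pipeline departs from the framework; an empty list means none found."""
    if not stages:
        return ["pipeline has no stages"]
    problems = [
        f"'{s.name}' is automated but is not parsing, scheduling, or triage"
        for s in stages
        if s.automated and s.name not in AI_STAGES
    ]
    if not stages[-1].human_reviewer:
        problems.append(f"final stage '{stages[-1].name}' has no human reviewer")
    if audit_interval_months > 3:
        problems.append("bias audits run less often than quarterly")
    return problems


pipeline = [
    Stage("resume_parsing", automated=True, human_reviewer=False),
    Stage("scheduling", automated=True, human_reviewer=False),
    Stage("work_sample_review", automated=False, human_reviewer=True),
    Stage("final_decision", automated=False, human_reviewer=True),
]
print(check_pipeline(pipeline, audit_interval_months=3))  # []
```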

Tracking hiring analytics throughout this process gives you the data to keep improving. You cannot fix what you cannot measure, and Talent Architects measure everything.
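As one example of measuring what you want to fix, this sketch computes median time-to-fill from requisition dates. The definition used here (days from opening a requisition to filling it, ignoring roles still open) is an assumption; organizations define time-to-fill differently, so match whatever definition your reporting already uses. The dates are hypothetical.

```python
from datetime import date
from statistics import median


def median_time_to_fill(requisitions):
    """Median days from opening to filling, over requisitions that have been filled.

    Each requisition is an (opened, filled) pair of dates; filled is None if still open.
    """
    durations = [(filled - opened).days for opened, filled in requisitions if filled is not None]
    return median(durations) if durations else None


# Hypothetical requisitions.
reqs = [
    (date(2025, 1, 1), date(2025, 2, 12)),   # 42 days
    (date(2025, 1, 10), date(2025, 2, 26)),  # 47 days
    (date(2025, 3, 1), None),                # still open, excluded
]
print(median_time_to_fill(reqs))  # 44.5
```

The median is used rather than the mean so that one unusually hard-to-fill role does not dominate the figure.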

Key statistic: The top 20% of recruiting teams fill roles 5 days faster on average. At scale, that advantage is not marginal. It is a genuine competitive differentiator in talent acquisition.

The pattern here is clear. Success does not come from automating more. It comes from being more intentional about where and how you deploy automation, and from maintaining strong human involvement at every high-stakes decision point.

Rethinking recruitment: Why blending AI and human expertise matters most

The prevailing narrative in HR technology says that more AI means better outcomes. The evidence does not support that claim. What the data actually shows is that pure automation, applied without workflow redesign or human oversight, tends to entrench existing problems rather than solve them.

Here is the insight that often gets missed: AI tools are very good at scale and consistency, and very poor at nuance and novel situations. Most hiring decisions involve nuance. A candidate who looks weak on paper but demonstrates exceptional problem-solving in a real work task is a nuance your AI ranking system will frequently get wrong. A candidate who scores perfectly on an automated screen but lacks collaborative instincts is another.

The most effective recruitment operations we observe are not the ones with the most AI features. They are the ones that have genuinely redesigned their workflows to use human expertise where it matters most, while automating the work that does not require judgment. That means automating scheduling, parsing, and initial triage. It means using AI to surface patterns in your hiring data and flag potential bias. But it also means keeping humans fully engaged in evaluation, assessment design, and final decision-making.

The effective recruitment checklist concept is useful here. Not because checklists are bureaucratic, but because they force you to define which steps require human judgment before you start automating. That intentionality is what separates high-performing teams from those chasing tool adoption for its own sake.

Continuous improvement matters here too. Bias audits, pipeline analytics, and regular process reviews are not overhead. They are how you build a recruitment operation that gets better over time rather than degrading quietly as models drift and market conditions shift. Resilience in hiring comes from adaptive processes, not from any single technology.

Take the next step: Smarter assessments for better hires

The evidence is clear: workflow redesign, skills-based hiring, and real-work assessments consistently outperform full automation alone. The next question is how you actually implement those strategies at scale without adding administrative burden to your team.

testask is built specifically for this challenge. The AI recruitment platform generates tailored test tasks for any role, evaluates candidate submissions with AI-assisted analysis, and enables your team to collaborate on reviews in one place. You get faster, more consistent screening without sacrificing the human judgment that makes great hiring possible. Explore the full range of tools and frameworks on the recruitment best practices blog to find strategies you can put to work immediately. Better hires start with better assessments.

Frequently asked questions

What are the top three recruitment challenges companies face with AI?

The main challenges are increased time-to-hire, rising cost-per-hire, and operational complexity caused by deploying AI tools without redesigning the underlying workflows they are meant to support.

How can HR leaders mitigate bias in AI-driven hiring?

Mitigation requires a structured approach: apply the EEOC four-fifths rule regularly, implement structured interviews at every evaluation stage, and schedule ongoing bias audits rather than treating them as a one-time compliance check.

How are candidate AI tools affecting recruitment accuracy?

Between 40% and 80% of applicants now use AI to generate resumes and complete assessments, which homogenizes applications, reduces screening signal quality, and undermines the matching accuracy your AI tools were calibrated to deliver.

What makes Talent Architects more successful at hiring?

They combine AI automation with skills-first hiring, strategic workforce planning, and human decision-making at key evaluation points, which is why Talent Architects fill roles in 42 days versus 47 days for average teams.

How should HR teams balance human and AI input?

HR teams should prioritize workflow redesign before any tool adoption, run bias audits regularly, and keep human reviewers responsible for all final hiring decisions while using AI for administrative tasks and pattern recognition.
