Revamp your hiring decision workflow with AI

Hiring the right person is hard enough without your process working against you. Many HR teams still juggle spreadsheets, manual email chains, and inconsistent screening criteria that slow everything down and introduce bias before a single interview begins. AI can orchestrate sourcing, screening, and scheduling while keeping humans in control of the decisions that truly matter, and that shift is changing how the best recruitment teams operate. This guide walks you through building a structured, AI-enhanced hiring decision workflow from the ground up, covering every stage from sourcing to final offer with concrete steps and practical guardrails.

Table of Contents

- Understand the AI-driven hiring workflow

- Essential prerequisites for a successful AI hiring workflow

- Step-by-step guide to executing your AI-powered workflow

- Avoiding common pitfalls and verifying fairness

- Our perspective: Why smart governance is the real difference-maker

- Enhance your hiring workflow with Testask

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Balance AI and human roles | Let AI handle repetitive tasks while humans focus on critical decision points. |
| Set up strong governance | Governance, clear ownership, and piloting create long-term workflow success. |
| Monitor fairness continuously | Use tools like the four-fifths rule to detect and correct any bias or disparities. |
| Prioritize candidate transparency | Disclose AI use and offer appeals to foster trust and compliance. |
| Iterate for improvement | Regularly review stages, gather data, and refine your workflow for better results. |

Understand the AI-driven hiring workflow

With those workflow challenges in mind, let’s break down how an AI-powered hiring decision process is structured.

An AI-driven hiring workflow is not a replacement for human judgment. It is a system that assigns each task to whoever handles it best. Repetitive, high-volume work goes to AI. Nuanced, relationship-driven decisions stay with your team. The result is a process that moves faster, stays more consistent, and gives your hiring managers more time to focus on what they actually do best: assessing fit and building relationships.

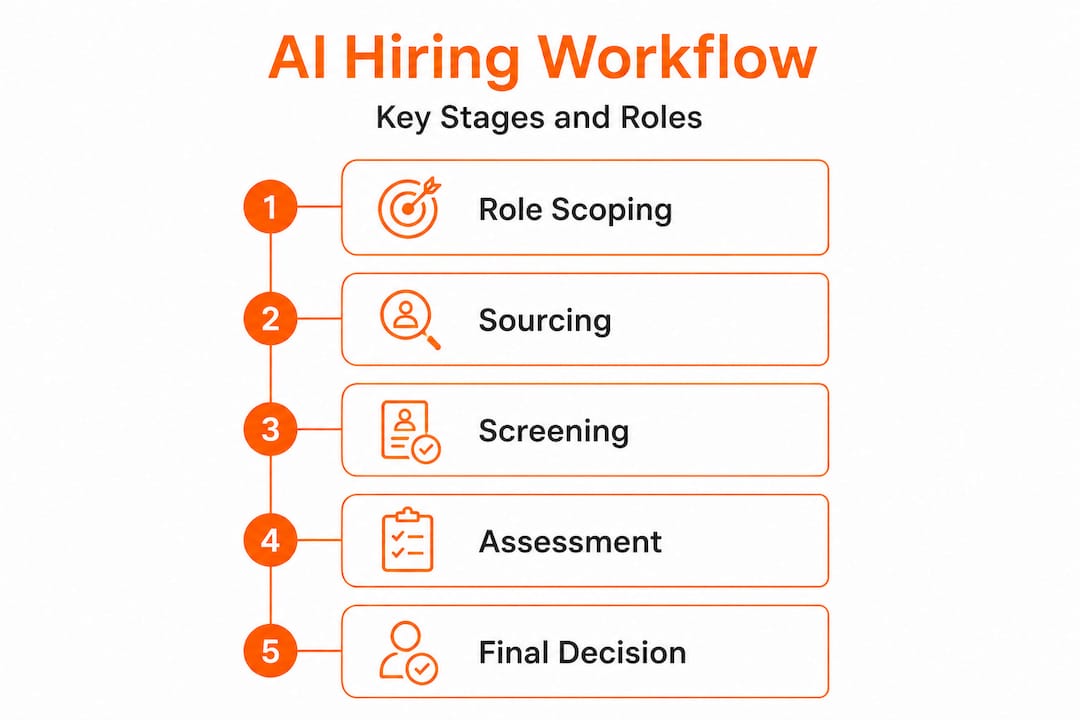

Here is how the typical workflow maps out:

| Stage | AI tasks | Human tasks |
|---|---|---|
| Role scoping | Suggests job description language and required skills | Defines actual role needs and organizational fit |
| Sourcing | Scans resume databases, job boards, and LinkedIn | Reviews shortlists and approves sourcing criteria |
| Screening | Filters applications against criteria, scores resumes | Reviews edge cases, ensures criteria are unbiased |
| Assessment | Delivers and scores skills tests automatically | Reviews borderline results, adjusts task difficulty |
| Scheduling | Sends automated calendar invites, manages availability | Confirms final interview panels and timing |
| Interviewing | Transcribes and summarizes interview responses | Leads conversations, evaluates culture and intent |
| Final decision | Aggregates scores and surfaces ranked comparisons | Makes the offer decision, weighs all context |

This clean division of labor has a real impact on hiring speed and quality. Teams that adopt faster, smarter AI recruitment practices can reduce time-to-fill without sacrificing the quality of the candidates they hire.

A few key principles hold this workflow together:

- Automation handles volume. When you receive 500 applications for a single role, AI screening prevents your team from burning out before they even reach qualified candidates.

- Humans handle context. A candidate with a non-linear career path or unconventional background may not score well on automated filters but could be exactly the right hire. Keep human review in the loop for those edge cases.

- Consistency reduces bias. When every candidate moves through the same structured steps, the subjective variation that drives unintentional discrimination decreases significantly.

“AI-driven hiring decision workflows typically involve AI handling initial sourcing, screening, and scheduling, with human oversight for interviews, final decisions, and nuanced judgments.” — Everworker

Understanding this structure upfront saves you from one of the most expensive mistakes in AI adoption: deploying AI tools in isolation without thinking about how they connect to the rest of your workflow.

Essential prerequisites for a successful AI hiring workflow

Once you understand the flow, it is vital to lay the groundwork for reliable and responsible AI integration.

Too many organizations skip directly to purchasing software. They sign contracts, configure tools, and launch campaigns before addressing the foundational questions that determine whether the whole system holds. Then they wonder why adoption stalls or why their metrics look worse after implementation than before.

Before you scale anything, these foundations must be in place:

- Executive sponsorship. AI hiring workflows require process changes across recruiting, HR ops, and sometimes legal. Without leadership buy-in, you will hit roadblocks at every cross-functional handoff.

- Clear ownership. Designate a specific person or team responsible for the workflow’s performance, fairness monitoring, and iteration. Shared ownership often means no one owns it.

- Pilot programs. Start with one role type or one business unit. Measure outcomes, gather recruiter feedback, and identify friction points before rolling out organization-wide.

- Data baselines. Know your current time-to-fill, offer acceptance rate, and quality-of-hire metrics before you change anything. You cannot prove improvement without a benchmark.

- Candidate communication protocols. Candidates must know AI is involved in their evaluation. Disclosing AI use and providing appeals is not just an ethical practice. It is becoming a legal requirement in multiple jurisdictions.

Data analytics for hiring can help you establish those baselines quickly and make the case internally for investment in AI tooling.
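If your requisition data lives in a spreadsheet or ATS export, a baseline can be as simple as the sketch below. It is a minimal illustration, not a prescribed method: the `Requisition` record, its field names, and the sample dates are all hypothetical, and the median is just one reasonable way to summarize time-to-fill.

```python
from dataclasses import dataclass
from datetime import date
from statistics import median
from typing import List, Optional


@dataclass
class Requisition:
    """Hypothetical record for one open role; field names are assumptions."""
    opened: date
    filled: Optional[date]  # None while the role is still open
    offers_made: int
    offers_accepted: int


def baseline(reqs: List[Requisition]) -> dict:
    """Summarize time-to-fill and offer acceptance before any workflow change."""
    # Only filled roles have a time-to-fill; open roles are skipped.
    days = [(r.filled - r.opened).days for r in reqs if r.filled is not None]
    offers = sum(r.offers_made for r in reqs)
    accepted = sum(r.offers_accepted for r in reqs)
    return {
        "median_time_to_fill_days": median(days) if days else None,
        "offer_acceptance_rate": accepted / offers if offers else None,
    }


# Made-up sample data for illustration only.
reqs = [
    Requisition(date(2024, 1, 8), date(2024, 2, 19), offers_made=2, offers_accepted=1),   # 42 days
    Requisition(date(2024, 1, 15), date(2024, 2, 14), offers_made=1, offers_accepted=1),  # 30 days
    Requisition(date(2024, 2, 1), None, offers_made=1, offers_accepted=0),                # still open
]

metrics = baseline(reqs)
print(metrics)
```

Run this once on pre-rollout data and save the result. The same function applied to post-pilot data gives you a like-for-like comparison.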

Pro Tip: Bring your legal and compliance teams into the workflow design conversation early, not after the tools are already running. Laws around AI in hiring are evolving fast, and retrofitting compliance is far more costly than building it in from the start.

Prioritizing governance by establishing ownership, pilot programs, and metrics baselines before scaling is consistently the factor that separates teams who get results from those who get frustration. Governance is not overhead. It is the scaffolding that makes everything else work.

A critical but often overlooked element is your appeals mechanism. If a candidate believes AI unfairly screened them out, they need a clear path to request human review. This protects candidates, reduces legal exposure, and builds the kind of institutional trust that makes your employer brand stronger over time.

Step-by-step guide to executing your AI-powered workflow

With groundwork set, here is how to put an AI-powered hiring workflow into action, from sourcing to final offers.

- Define the role with precision. Before any AI tool touches your pipeline, make sure your job description is built around measurable skills and outcomes, not vague requirements like “team player” or “self-starter.” Use AI to suggest competency-based criteria, then have your hiring manager validate them.

- Activate AI-assisted sourcing. Deploy your ATS’s AI sourcing features or a dedicated sourcing tool to identify candidates matching your criteria across job boards, databases, and social platforms. Set inclusion parameters that favor skill signals over demographic proxies.

- Run structured skills assessments. Before moving anyone to an interview, use skills-based test tasks to create a fair, objective signal for each candidate. Streamlined screening steps like this reduce the volume of unqualified candidates reaching your interview stage by a significant margin.

- Automate scheduling. Once candidates pass assessment thresholds, trigger automated calendar scheduling. This alone can remove two to four days from the typical hiring timeline, depending on panel size.

- Conduct structured interviews. Provide interviewers with AI-generated candidate summaries and consistent question sets. This reduces the chances of a strong interviewer’s personal rapport or a weak interviewer’s bias driving the outcome.

- Aggregate and compare scores. Use your platform to pull together assessment scores, interview evaluations, and hiring manager notes into a single view. AI-ranked comparisons help your team see relative candidate strength without losing the human context behind each score.

- Make the final decision with full context. Your hiring manager reviews the aggregated data and makes the call. Document the rationale. This step remains irreducibly human, and that is by design.

Here is how AI-enhanced execution compares to traditional approaches at each stage:

| Stage | Classic approach | AI-enhanced approach |
|---|---|---|
| Sourcing | Recruiter manually searches and posts | AI scans multiple sources simultaneously |
| Screening | Recruiter reviews every application | AI scores and ranks, human reviews top candidates |
| Assessment | Phone screen only | Structured skills tasks with AI-scored results |
| Scheduling | Back-and-forth email chains | Automated scheduling triggered by pass/fail |
| Interview prep | Inconsistent per interviewer | AI-generated summaries and standardized questions |
| Decision support | Notes and gut feeling | Aggregated scores with documented rationale |

AI talent matching strategies have evolved significantly, and the best implementations now combine behavioral pattern recognition with structured task performance to surface candidates who would otherwise be filtered out too early.

Pro Tip: The biggest bottleneck in most AI hiring workflows is not the technology. It is the handoff between stages. Map out who triggers each transition, what the acceptance criteria are, and what happens when a candidate does not clearly fit into either the pass or fail category. Ambiguity at handoff points is where timelines collapse.

AI orchestrates ATS, sourcing, and scheduling tasks most effectively when each stage has clear exit criteria. Vague thresholds produce inconsistent outcomes, no matter how sophisticated the underlying model.
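One way to make exit criteria explicit is to give every stage two thresholds rather than one, so candidates who land between pass and fail are routed to a person instead of falling through. The sketch below is an assumption-laden illustration: the `ExitCriteria` class, the `route` function, the 0-to-1 score scale, and the threshold values are all hypothetical and not taken from any particular ATS.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ExitCriteria:
    """Hypothetical per-stage thresholds on a 0.0 to 1.0 score scale."""
    pass_at: float      # scores at or above this advance automatically
    fail_below: float   # scores below this do not advance

    def __post_init__(self) -> None:
        if self.fail_below > self.pass_at:
            raise ValueError("fail_below must not exceed pass_at")


def route(score: float, criteria: ExitCriteria) -> str:
    """Return the next step for a candidate at one stage."""
    if score >= criteria.pass_at:
        return "advance"
    if score < criteria.fail_below:
        return "reject"
    # Anything in between is ambiguous and goes to a human reviewer.
    return "human_review"


# Illustrative thresholds only; calibrate against your own pilot data.
assessment = ExitCriteria(pass_at=0.75, fail_below=0.50)

for score in (0.82, 0.60, 0.30):
    print(score, route(score, assessment))
```

The width of the band between `fail_below` and `pass_at` is a staffing decision as much as a technical one: a wider band sends more candidates to human review, so size it to what your reviewers can actually handle.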

Avoiding common pitfalls and verifying fairness

Even with great execution, many hiring workflows stumble. Here is how to avoid the common traps and keep the process fair.

The number one mistake is treating fairness as a setup task rather than an ongoing practice. You configure the filters, run a few test searches, confirm the results look reasonable, and move on. But AI models drift. Criteria that were unbiased at launch can gradually skew when candidate pools shift or when hiring volume creates subtle feedback loops.

Here are the most common pitfalls to watch for actively:

- Unchecked algorithmic bias. AI models trained on historical hiring data can replicate the biases already baked into past decisions. If your previous hires skewed toward a particular demographic, your model may de-prioritize candidates who do not match that pattern.

- Black-box decisions. If your team cannot explain why a candidate was rejected, you have a compliance and trust problem. Every AI-driven screening step needs an auditable rationale.

- Poor candidate transparency. Research on AI hiring pitfalls and best practices suggests that candidates who feel blindsided by AI involvement are more likely to drop out of the process or share negative experiences publicly.

- Metric complacency. Hitting your time-to-fill target while quietly degrading quality-of-hire is a real risk when AI optimizes for the metrics you measure and ignores the ones you do not.

- Stage-level blind spots. Aggregate pass rates can look fair while individual stages quietly filter out protected groups. Track passage rates at every single stage, not just end-to-end.

“Fairness metrics like the four-fifths rule are essential. Monitor stage-by-stage pass rates to detect disparities early.” — Everworker AI Bias Mitigation Playbook

The four-fifths rule (also known as the 80% rule) is a practical compliance benchmark from the U.S. Equal Employment Opportunity Commission. It states that the selection rate for any protected group should be at least 80% of the rate for the group with the highest selection rate. If your AI screening passes 60% of male applicants but only 40% of female applicants, the ratio is 40/60, or about 67%, which falls below the 80% threshold. That is a red flag worth investigating immediately.
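The arithmetic behind the rule is short enough to script. Here is a minimal sketch using the 60% and 40% pass rates from the example above. The function names and group labels are hypothetical, and a flag from this check is a prompt for investigation, not a legal conclusion.

```python
from typing import Dict, List


def impact_ratios(selection_rates: Dict[str, float]) -> Dict[str, float]:
    """Divide each group's selection rate by the highest group's rate."""
    best = max(selection_rates.values())
    if best == 0:
        raise ValueError("no group has a non-zero selection rate")
    return {group: rate / best for group, rate in selection_rates.items()}


def flagged_groups(selection_rates: Dict[str, float], threshold: float = 0.8) -> List[str]:
    """List groups whose impact ratio falls below the four-fifths threshold."""
    ratios = impact_ratios(selection_rates)
    return [group for group, ratio in ratios.items() if ratio < threshold]


# Pass rates from the example: 60% for one group, 40% for the other.
rates = {"reference_group": 0.60, "comparison_group": 0.40}

ratios = impact_ratios(rates)
flags = flagged_groups(rates)
print(ratios)
print(flags)
```

With these inputs the comparison group's ratio is 0.40 / 0.60, roughly 0.67, so it is the one group flagged.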

Warning signs of workflow drift include:

- A sudden shift in the demographic composition of candidates reaching interview stages

- Increasing candidate drop-off at a specific automated step

- Hiring manager override rates trending upward on AI recommendations

Improving screening efficiency and quality with AI does not mean handing the wheel entirely to an algorithm. It means pairing automation with consistent human review cycles so you catch problems before they compound.

Schedule a monthly audit of your stage-by-stage pass rates. Make it a standing agenda item, not a reactive investigation.
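A stage-level audit can reuse the four-fifths check on each stage separately. The sketch below assumes you can export, for every stage and group, how many candidates entered and how many passed. The data layout, the `audit_stages` function, and all the counts are made up for illustration.

```python
from typing import Dict, List, Tuple

# stage -> group -> (entered, passed); hypothetical export format
StageCounts = Dict[str, Dict[str, Tuple[int, int]]]


def audit_stages(counts: StageCounts, threshold: float = 0.8) -> List[Tuple[str, str, float]]:
    """Return (stage, group, impact_ratio) for every group below the threshold."""
    findings = []
    for stage, groups in counts.items():
        # Pass rate per group at this stage, skipping groups with no entrants.
        rates = {g: passed / entered for g, (entered, passed) in groups.items() if entered > 0}
        if not rates:
            continue
        best = max(rates.values())
        if best == 0:
            continue  # nobody passed this stage, so there is nothing to compare
        for group, rate in rates.items():
            ratio = rate / best
            if ratio < threshold:
                findings.append((stage, group, round(ratio, 2)))
    return findings


# Made-up counts for illustration only.
counts = {
    "screening": {"group_a": (100, 50), "group_b": (100, 35)},
    "assessment": {"group_a": (50, 20), "group_b": (35, 17)},
}

findings = audit_stages(counts)
print(findings)
```

In this sample the end-to-end rates are 20/100 for group_a and 17/100 for group_b, a ratio of 0.85 that clears the threshold. The screening stage alone does not: 0.35 / 0.50 is 0.70, which is exactly the kind of stage-level disparity an aggregate view hides.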

Our perspective: Why smart governance is the real difference-maker

All technical details aside, real-world success reflects something deeper. Let us look at what truly sets resilient teams apart.

Here is an uncomfortable truth: most AI hiring workflow failures are not technology failures. They are governance failures. We have seen organizations invest heavily in sophisticated AI tooling, train their teams, and still see their hiring outcomes stagnate or worsen. In almost every case, the core issue was not the algorithm. It was the absence of clear ownership, documented criteria, and a feedback loop that connected outcomes back to process design.

When teams skip governance and jump straight to tech, three things reliably happen. First, the AI optimizes for whatever metrics are easiest to measure, not necessarily the ones that predict long-term performance. Second, no one is accountable when results look off, because ownership was never formally assigned. Third, recruiters quietly work around the AI tools because the workflow was not designed with their input and does not reflect how they actually make decisions.

The organizations that get this right share a few consistent behaviors. They pilot before they scale. They appoint a named owner for workflow performance. They build feedback mechanisms that bring hiring manager input back into the model calibration process. And they treat candidate transparency as a value, not just a compliance checkbox.

Prioritizing governance by establishing ownership, pilots, and metrics baselines is where we consistently see the clearest line between teams who build durable, high-performing hiring systems and teams who spend months chasing tools that never quite deliver.

Hiring assessment best practices reinforce this point from the assessment side: structure and rigor in how you design and review evaluations matter more than the sophistication of the scoring model.

AI is a powerful accelerant. But what it accelerates is your existing process, for better or worse. Invest in the process first.

Enhance your hiring workflow with Testask

Ready to put these insights into practice? The principles in this guide (structured workflow, skills-based assessment, fairness monitoring, and candidate transparency) are exactly what the Testask recruitment platform is built to support.

Testask helps HR teams generate tailored skills test tasks for any role, automatically score candidate submissions, and collaborate on reviews in one unified workspace. You get AI-assisted analysis that surfaces the strongest candidates faster, without sacrificing the human judgment that final hiring decisions require. Whether you are running a pilot program or scaling a full AI-powered hiring workflow, Testask gives your team the tools to move efficiently and stay accountable. Explore Testask today and see how quickly structured, AI-enhanced assessment transforms your pipeline quality.

Frequently asked questions

What is the role of AI versus HR in hiring decision workflows?

AI handles sourcing, screening, and scheduling at scale, while HR professionals provide oversight, exercise contextual judgment, and make all final hiring decisions.

How can fairness be monitored in an AI-powered workflow?

Apply the four-fifths rule to stage-by-stage pass rates across your entire pipeline to detect disparities before they compound into systemic bias.

Why does candidate transparency matter in AI hiring?

Disclosing AI use and providing appeals builds candidate trust, protects your employer brand, and increasingly aligns with emerging legal requirements around automated decision-making.

What is one common mistake when adopting AI in hiring?

Skipping governance setup is the most costly mistake. Establish ownership, pilots, and metrics baselines before you scale any AI hiring tool across your organization.

How should HR teams prepare for AI-driven workflow implementation?

Start with a focused pilot program, define who owns workflow performance, set measurable baselines for your current hiring metrics, and communicate the changes clearly to all stakeholders involved in the hiring process.