Improve candidate screening: expert guide to AI-powered hiring

Many organizations assume that deploying an AI tool automatically solves their candidate screening challenges. The reality is more nuanced. Without structured frameworks, standardized scoring, and calibrated evaluation criteria, automation can amplify inconsistency rather than eliminate it. This guide breaks down what effective candidate screening actually requires, how modern AI-powered tools fit into that picture, and what practical frameworks HR teams need to build consistency, reduce bias, and improve quality-of-hire at every stage of the funnel.

Table of Contents

- What is candidate screening?

- Structured screening frameworks: Why they matter

- AI-powered candidate screening: Benefits and risks

- Benchmarking candidate screening effectiveness

- Ethical and compliance considerations for AI screening

- Our take: Why structure matters more than software

- Enhance your screening process with AI-powered tools

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Structure drives success | Structured approaches are proven to increase consistency and fairness in candidate screening. |
| AI boosts efficiency | AI-powered tools automate large-scale screening, but must be governed for quality and bias. |
| Empirical benchmarking matters | Evaluate screening outcomes with rank-aware metrics and link results to hiring and retention rates. |
| Compliance is essential | Ongoing ethical and legal monitoring is critical for any AI-driven assessment process. |

What is candidate screening?

Candidate screening is the structured process of evaluating job applicants to determine which individuals are best suited for further assessment or a hiring decision. For HR professionals, it serves as the critical filter between a large applicant pool and a manageable shortlist of genuinely qualified candidates.

The goals of modern candidate screening go well beyond simple resume review. The candidate screening process encompasses several interconnected objectives:

- Improve quality-of-hire by surfacing candidates whose skills and experience genuinely match role requirements

- Reduce bias through standardized criteria applied consistently across every applicant

- Ensure compliance with employment law and regional data privacy regulations

- Streamline HR workflows so your team spends time on high-value evaluation, not administrative triage

- Generate measurable data that informs future hiring decisions and process improvements

Modern screening has evolved well beyond the manual paper resume stack. Today, the process combines automated tools for initial triage, skills-based assessments, structured video and phone screens, and human-reviewed scoring rubrics. What separates effective screening from ineffective screening is not which tools you use. It is whether you apply them within a structured, documented framework.

“Structured assessment approaches are intended to improve consistency by using the same questions and standardized scoring/rubrics for each candidate,” according to the Google re:Work Interview Guide.

This principle holds regardless of whether the initial screening is done by a human recruiter or an AI-powered platform. Consistency requires structure. Structure requires intentional design.

Structured screening frameworks: Why they matter

Understanding the value of structured frameworks is one thing. Building them into your workflow is another. A structured screening framework consists of three core components: role-relevant evaluation criteria, standardized scoring rubrics, and trained interviewers or reviewers who apply those rubrics consistently.

Here is how structured and unstructured approaches compare across critical dimensions:

| Dimension | Structured screening | Unstructured screening |
|---|---|---|
| Consistency | Same criteria applied to every candidate | Varies by recruiter or session |
| Bias risk | Lower, with deliberate rubric design | Higher, relies on intuition |
| Defensibility | Documented, auditable | Difficult to justify or review |
| Interviewer training | Required and formalized | Minimal or informal |
| Measurability | Clear scoring data for comparison | Subjective impressions |
| Scalability | Scales with AI tools and workflows | Breaks down at volume |

The distinction matters enormously in practice. When a recruiter evaluates 200 applicants without standardized criteria, their judgment shifts based on fatigue, recency bias, and contrast effects. The 150th candidate is assessed differently than the 10th, even if their qualifications are identical. Structured frameworks neutralize these effects by anchoring evaluation to predefined criteria.

Structure is not about rigidity. It is about accountability. You can still exercise judgment and nuance. The framework ensures that judgment is applied fairly and that your decisions are explainable.

The assessment best practices that the most effective organizations follow include regular calibration sessions, where interviewers review scored responses together, identify drift in how criteria are applied, and realign before the next hiring cycle. That kind of ongoing calibration is what separates teams that improve over time from those that repeat the same mistakes.
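One way to prepare for a calibration session is to flag the criteria where reviewers disagree most on the same response. The sketch below is illustrative only: the function name, the 1 to 5 scale, and the `max_spread` threshold are assumptions, not part of any specific tool.

```python
def criteria_needing_calibration(scores_by_reviewer, max_spread=1):
    """Flag criteria where reviewers disagree on the SAME candidate response.

    scores_by_reviewer: {reviewer_name: {criterion: score}}
    max_spread: largest acceptable gap between the highest and lowest score
                (an assumed threshold; tune it to your own scale).
    Returns {criterion: spread} for every criterion that exceeds the threshold.
    """
    criteria = set().union(*(s.keys() for s in scores_by_reviewer.values()))
    flagged = {}
    for criterion in sorted(criteria):
        values = [s[criterion] for s in scores_by_reviewer.values() if criterion in s]
        spread = max(values) - min(values)
        if spread > max_spread:
            flagged[criterion] = spread
    return flagged


# Hypothetical scores from three reviewers on one response (1-5 scale).
scores = {
    "reviewer_a": {"sql_proficiency": 4, "communication": 2},
    "reviewer_b": {"sql_proficiency": 5, "communication": 5},
    "reviewer_c": {"sql_proficiency": 4, "communication": 3},
}
flagged = criteria_needing_calibration(scores)
```

Here reviewers roughly agree on one criterion and diverge on the other, so only the divergent criterion would go on the calibration agenda.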

Critically, as the Google re:Work Interview Guide notes, AI tools do not create structure on their own. You still need predefined criteria, scoring rubrics, and calibrated human evaluation to ensure consistency. Software automates execution. Humans design the structure that makes execution meaningful.

Pro Tip: Before selecting any AI screening tool, document your current evaluation criteria in writing. If you cannot articulate what “good” looks like for a given role, no tool will figure that out for you. Start with the criteria, then choose the technology that helps you apply it efficiently.
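Written criteria can also be kept in a form that both people and tools can read. The following is a minimal sketch of a weighted rubric. The criteria, weights, and anchor descriptions are hypothetical examples, and a real rubric should come from your own role analysis.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    name: str
    weight: float   # relative importance; weights are expected to sum to 1.0
    anchors: dict   # score -> written description of what that score looks like


# Hypothetical rubric for an illustrative analyst role.
RUBRIC = [
    Criterion("sql_proficiency", 0.5, {
        1: "Cannot write a basic query",
        3: "Writes joins and aggregations with minor errors",
        5: "Writes correct, readable queries for complex questions",
    }),
    Criterion("communication", 0.3, {
        1: "Explanation is hard to follow",
        3: "Explains the approach clearly when prompted",
        5: "Explains trade-offs clearly and unprompted",
    }),
    Criterion("domain_knowledge", 0.2, {
        1: "No familiarity with the business context",
        3: "Understands core metrics",
        5: "Connects analysis to business decisions",
    }),
]


def weighted_score(rubric, ratings):
    """Combine per-criterion ratings into one weighted score on the same scale."""
    total_weight = sum(c.weight for c in rubric)
    if abs(total_weight - 1.0) > 1e-9:
        raise ValueError("rubric weights must sum to 1.0")
    missing = [c.name for c in rubric if c.name not in ratings]
    if missing:
        raise ValueError(f"missing ratings for: {missing}")
    return sum(c.weight * ratings[c.name] for c in rubric)


score = weighted_score(
    RUBRIC, {"sql_proficiency": 4, "communication": 3, "domain_knowledge": 5}
)
```

Refusing to score when a rating is missing is a deliberate choice in this sketch: a partial evaluation should be visible as incomplete rather than silently averaged.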

AI-powered candidate screening: Benefits and risks

With structured frameworks in place, AI-powered screening tools offer real efficiency gains. They automate initial candidate sorting, apply pattern matching across large applicant pools, generate ranked shortlists, and flag candidates who meet or miss specific criteria at scale. For high-volume recruitment, this is a genuine operational advantage.

The concrete benefits include:

- Speed: AI tools screen hundreds of applications in the time it takes a recruiter to review a dozen manually

- Scalability: Consistent criteria application across thousands of candidates without fatigue

- Data generation: Structured scoring data that feeds analytics and benchmarking

- Reduced administrative load: Recruiters focus on judgment-heavy stages rather than initial triage

- Faster shortlisting: Hiring managers receive ranked candidate lists with supporting evidence, not just raw resumes

But these benefits come with real risks. AI-powered candidate screening is increasingly used to assist with large-volume triage, but it must be governed to avoid quality, bias, privacy, and transparency failures.

The risk categories HR teams must actively manage include:

- Algorithmic bias: Models trained on historical hiring data can encode past biases, systematically disadvantaging candidates from certain backgrounds

- Quality signal loss: Reducing candidates to ranked scores can strip out contextual signals that human reviewers would catch

- Privacy exposure: Automated collection and analysis of candidate data creates compliance obligations under GDPR, CCPA, and regional equivalents

- Transparency gaps: Candidates and regulators increasingly expect explainability in how screening decisions are made

Managing these risks comes down to one principle: treat AI as an accelerant for your structured process, not a replacement for it. The model does not know what matters for this role, in this team, at this stage of your company’s growth. You do.

Pro Tip: Run a bias audit on your AI screening tool before full deployment. Apply it to a retrospective batch of past hires and rejections. If the model’s shortlist differs systematically by gender, age, or ethnicity, that is a governance problem that requires immediate attention before scaling.
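A retrospective audit like this can start with a simple comparison of shortlist rates by group. The sketch below assumes you can export records as `(group_label, was_shortlisted)` pairs. The function names are hypothetical, and the 0.8 threshold reflects the "four-fifths" rule of thumb from the US EEOC Uniform Guidelines, which is a signal for further review rather than a legal determination.

```python
from collections import Counter


def selection_rates(records):
    """records: iterable of (group_label, was_shortlisted) pairs."""
    totals, selected = Counter(), Counter()
    for group, shortlisted in records:
        totals[group] += 1
        if shortlisted:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def impact_ratios(rates):
    """Each group's selection rate divided by the highest group's rate."""
    best = max(rates.values())
    if best == 0:
        return {group: 0.0 for group in rates}  # nobody was shortlisted at all
    return {group: rate / best for group, rate in rates.items()}


def groups_below_threshold(ratios, threshold=0.8):
    """Groups whose impact ratio falls under the assumed review threshold."""
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


# Hypothetical retrospective batch: 10 applicants per group.
batch = (
    [("group_a", True)] * 5 + [("group_a", False)] * 5
    + [("group_b", True)] * 3 + [("group_b", False)] * 7
)
rates = selection_rates(batch)
ratios = impact_ratios(rates)
needs_review = groups_below_threshold(ratios)
```

With small groups these ratios are noisy, so a flagged result is best treated as a prompt to investigate with more data and appropriate statistical or legal advice.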

Monitoring AI performance over time is not optional. Bias patterns can emerge or worsen as the applicant pool or job market shifts, even if the model performed acceptably at launch.

Benchmarking candidate screening effectiveness

Running a screening process without measuring its effectiveness is like optimizing a funnel you cannot see. Benchmarking gives you the empirical foundation to evaluate what is working, identify failure points, and justify process investments to leadership.

Effective benchmarking connects two layers of measurement: model-level performance metrics and downstream hiring outcomes.

| Metric type | Examples | What it measures |
|---|---|---|
| Rank-aware accuracy | Precision@k, NDCG | How well the tool identifies top candidates |
| Pass-through rates | Screen-to-interview rate | Funnel efficiency and filter calibration |
| Offer conversion | Interview-to-offer rate | Quality of shortlisted candidates |
| Retention correlation | 90-day, 1-year retention | Long-term quality-of-hire validation |
| Time-to-screen | Hours per screened candidate | Operational efficiency gains |

Evaluating ranking and screening tools requires rank-aware metrics and linking to hiring outcomes, not only model-level accuracy. This is a critical nuance. A model can appear highly accurate on internal validation tests while consistently missing the candidates who actually succeed in the role.
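For readers unfamiliar with rank-aware metrics, here is a minimal sketch of precision@k and NDCG using their standard textbook definitions. The input format is an assumption: a list of outcome labels in the order the tool ranked the candidates, where the labels come from your own success criteria (for example, 1 for a hire who met the 90-day bar and 0 otherwise, or a graded score).

```python
import math


def precision_at_k(ranked_relevance, k):
    """Share of the top-k ranked candidates who turned out to be relevant."""
    if k <= 0:
        raise ValueError("k must be positive")
    return sum(1 for rel in ranked_relevance[:k] if rel > 0) / k


def dcg_at_k(gains, k):
    """Discounted cumulative gain: rewards placing high-gain items near the top."""
    return sum(gain / math.log2(position + 2)
               for position, gain in enumerate(gains[:k]))


def ndcg_at_k(gains, k):
    """DCG divided by the DCG of the ideal (best possible) ordering."""
    ideal = dcg_at_k(sorted(gains, reverse=True), k)
    return dcg_at_k(gains, k) / ideal if ideal > 0 else 0.0


# Hypothetical outcomes in the order the tool ranked five candidates.
outcomes = [1, 0, 1, 1, 0]
p_at_3 = precision_at_k(outcomes, 3)
ndcg_5 = ndcg_at_k(outcomes, 5)
```

Precision@k answers "how many of my top k were good?", while NDCG also penalizes a tool that buries strong candidates lower in the list.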

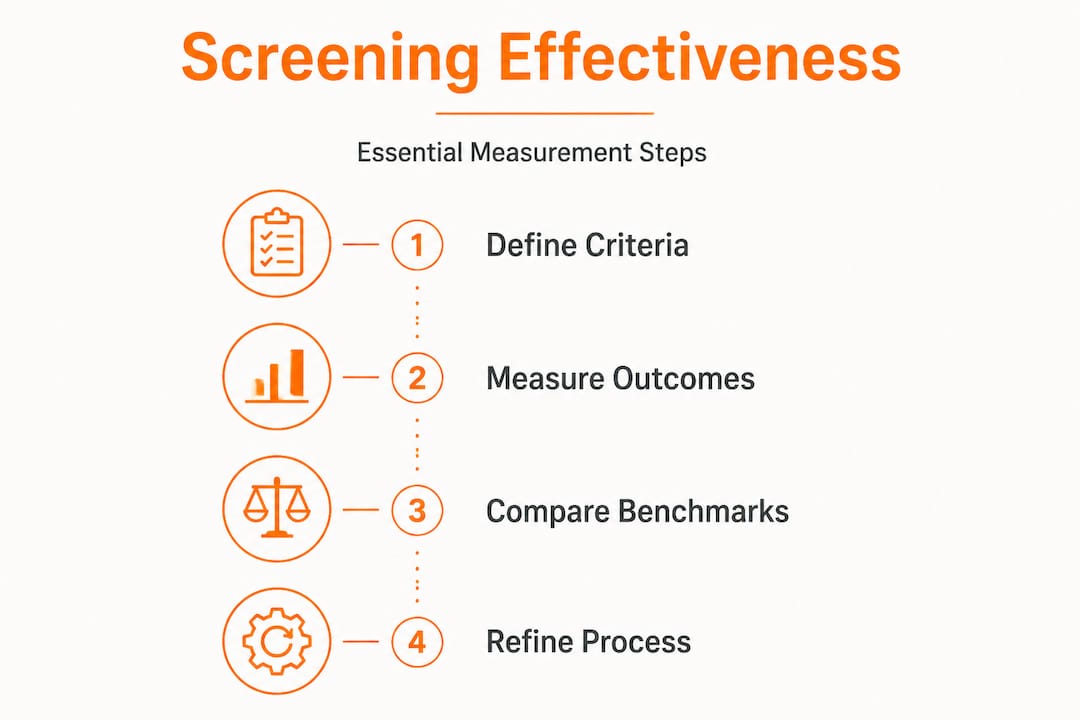

Here is a practical benchmarking sequence your team can follow:

1. Define success criteria before you launch or evaluate any screening tool. What does a good hire look like at 90 days? At one year?

2. Establish baseline data from your current process. What is your current screen-to-interview rate? What percentage of interviewed candidates receive offers?

3. Apply rank-aware metrics to evaluate how well your screening tool orders candidates relative to your success criteria.

4. Track downstream outcomes for every cohort that goes through the new process. Connect screen scores to interview performance and retention data.

5. Review and recalibrate every quarter. Screening effectiveness drifts as roles, teams, and candidate pools evolve.
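The baseline and downstream steps above can be tracked with a few simple ratios per cohort. This is a sketch under stated assumptions: the `CohortCounts` fields and the example numbers are hypothetical, and your applicant tracking system may define funnel stages differently.

```python
from dataclasses import dataclass


@dataclass
class CohortCounts:
    screened: int
    interviewed: int
    offered: int
    hired: int
    retained_90d: int


def rate(numerator, denominator):
    """Return a ratio, or None when the stage has no candidates yet."""
    return numerator / denominator if denominator else None


def funnel_report(cohort):
    return {
        "screen_to_interview": rate(cohort.interviewed, cohort.screened),
        "interview_to_offer": rate(cohort.offered, cohort.interviewed),
        "retention_90d": rate(cohort.retained_90d, cohort.hired),
    }


# Hypothetical baseline cohort from the current process.
baseline = funnel_report(CohortCounts(
    screened=400, interviewed=80, offered=20, hired=16, retained_90d=12,
))
```

Running the same report on each new cohort gives you a like-for-like comparison against the baseline at every quarterly review.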

“Benchmarking is most valuable when it is connected to real hiring outcomes, not just internal model performance. The metric that matters is whether screened candidates actually succeed in the role.”

The most effective organizations treat benchmarking as an ongoing cycle rather than a one-time audit. Your recruitment checklist should include a benchmarking review step at the start of every major hiring cycle.

Ethical and compliance considerations for AI screening

Benchmarking tells you whether your screening works. Compliance tells you whether it is lawful and ethical to operate. For HR teams using AI-powered screening, these are not separate concerns. They must be managed in parallel.

Compliance requirements in AI screening are expanding rapidly across jurisdictions. Organizations using AI screening need auditable documentation, candidate notices and consent where required, and ongoing monitoring and testing to manage adverse impact and disability accommodation risks.

The key compliance and ethics obligations your team must address include:

- Auditable documentation: Every screening decision should be traceable to specific criteria and scores. If a regulatory body or legal challenge requires you to explain why a candidate was rejected, you need a documented, reproducible answer.

- Candidate consent and notices: Several jurisdictions, including Illinois and New York City, require employers to notify candidates when AI tools are used in screening. Some require explicit consent before processing candidate data.

- Adverse impact monitoring: Regularly analyze screening outcomes by protected class characteristics. If your tool produces disparate rejection rates for any protected group, that is both an ethical problem and a legal liability.

- Disability accommodation: AI screening tools must not penalize candidates with disabilities who may need alternative assessment formats. Build accommodation pathways into your screening workflow from the start.

- Data retention limits: Candidate data collected during screening cannot be stored indefinitely. Establish clear retention and deletion policies aligned with applicable privacy law.
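Two of the obligations above, auditable documentation and retention limits, can be supported by how each screening decision is recorded. The sketch below is one possible shape for such a record. Every field name is hypothetical, and the retention period is a placeholder parameter, since the right value depends on the privacy and employment law that applies to you.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class ScreeningRecord:
    candidate_id: str
    requisition_id: str
    rubric_version: str      # which version of the criteria was applied
    criterion_scores: dict   # criterion name -> score
    decision: str            # e.g. "advance" or "reject"
    decided_at: datetime
    ai_notice_sent: bool     # whether the candidate was told AI was used


def to_audit_json(record):
    """Serialize a record so the decision can be reproduced and reviewed later."""
    payload = asdict(record)
    payload["decided_at"] = record.decided_at.isoformat()
    return json.dumps(payload, sort_keys=True)


def is_due_for_deletion(record, now, retention_days):
    """True once the record is older than the retention period you have set."""
    return now - record.decided_at > timedelta(days=retention_days)


record = ScreeningRecord(
    candidate_id="c-001",
    requisition_id="req-42",
    rubric_version="2024-01",
    criterion_scores={"sql_proficiency": 4, "communication": 3},
    decision="advance",
    decided_at=datetime(2024, 1, 1, tzinfo=timezone.utc),
    ai_notice_sent=True,
)
audit_line = to_audit_json(record)
```

Storing the rubric version alongside the scores matters because criteria change over time, and a rejection should be explainable against the criteria in force when it was made.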

Improving screening quality is directly connected to ethical practice. When your process is structured, documented, and monitored, it is both more effective and more defensible. These goals reinforce each other.

Pro Tip: Designate a compliance owner for your AI screening process. This does not have to be a separate legal hire. It can be a senior HR leader who is accountable for documentation, monitoring, and annual compliance review. The critical thing is that ownership is explicit and not assumed to be someone else’s responsibility.

Our take: Why structure matters more than software

Many articles on AI-powered screening conclude with a tool recommendation. Here is a more candid perspective: the technology is the easy part.

The organizations that consistently make better hires are not necessarily the ones with the most sophisticated AI. They are the ones with the clearest criteria. They know what they are looking for before they open a requisition. They train their interviewers and reviewers to apply those criteria consistently. They measure outcomes and feed that data back into process refinement. The software helps them do this faster and at greater scale, but it does not generate the underlying clarity.

This matters because most HR teams shopping for AI screening tools are solving the wrong problem. They are trying to fix a volume problem or a speed problem with technology. But the real bottleneck is usually definitional. What does strong performance look like in this role? What signals correlate with long-term success? What criteria are we using consistently, and which ones are being applied differently by different people on the team?

When you attempt to automate an undefined or inconsistent process, you get automated inconsistency. The tool faithfully executes the flawed criteria you gave it, just faster and at greater scale.

Minimizing bias in screening requires addressing this root cause. Structured frameworks give automation context and accountability. They create the conditions under which AI tools can genuinely improve outcomes rather than simply accelerate existing patterns.

The practical wisdom here is straightforward: invest in your evaluation criteria before you invest in the tool. Run calibration sessions. Document your rubrics. Measure your outcomes. Then layer in automation to scale what is already working. That sequence produces results. Reversing it produces regret.

Enhance your screening process with AI-powered tools

If you’re ready to move from theory to action, here’s where you can start optimizing your screening process.

Testask is an AI-powered recruitment assessment platform built specifically for HR teams that want structured, consistent, and efficient candidate evaluation. The platform lets you generate tailored test tasks, review candidate submissions with AI-assisted scoring, and collaborate with hiring managers in a single workflow. Compliance-oriented features support documented, auditable evaluation at every stage. Whether you’re building your screening framework from scratch or scaling an existing process, Testask gives your team the tools to apply structured criteria faster and with greater confidence. Explore the streamlined hiring steps in the Testask blog to get started with actionable resources today.

Frequently asked questions

What is the main advantage of structured candidate screening?

Structured screening applies the same criteria and scoring rubrics to every candidate, which makes evaluation more consistent and fair and makes hiring decisions more defensible and comparable across your applicant pool.

How can AI screening lead to bias?

AI screening tools trained on historical hiring data can encode past hiring patterns. Left ungoverned, they also compress nuanced human judgment into automated rankings that can systematically disadvantage certain candidate groups.

What metrics are best for benchmarking screening quality?

Rank-aware metrics such as precision@k and NDCG are well suited to measuring how a screening tool orders candidates, and they are most informative when paired with downstream hiring outcomes like offer rates and 90-day retention.

What compliance steps must HR teams take when using AI screening?

HR teams must maintain auditable documentation, provide candidate notices and consent where legally required, and conduct ongoing monitoring to identify and address adverse impact and disability accommodation issues.