Why improve candidate screening? Efficiency, quality, AI

Screening hundreds of applications per role used to be a manageable task. Today, it’s one of the biggest drains on recruiter time and hiring quality. Applications per job have climbed 24% to an average of 257.5, and most HR teams are still using manual methods that weren’t built for this volume. The good news is that AI-powered tools are changing the equation fast, cutting time-to-screen and improving hire quality simultaneously. This article breaks down why upgrading your screening process matters, how AI fits in, what the mechanics look like in practice, and which pitfalls to avoid along the way.

Table of Contents

- The case for improving candidate screening

- How AI-powered screening transforms hiring outcomes

- Inside AI screening: Mechanisms, frameworks, and best practices

- Risks, challenges, and getting AI screening right

- Why the smartest HR teams blend tech with human judgment

- Streamline your screening with next-gen AI solutions

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Screening bottleneck | High application volume overwhelms HR, making improved screening a business necessity. |
| AI efficiency gains | AI tools cut time-to-hire and cost-per-hire significantly while improving hire quality. |
| Bias and compliance | Well-designed AI screening with human oversight improves fairness and helps meet regulatory standards. |
| Critical pitfalls | Risks such as false negatives and undetected AI-generated resumes require ongoing monitoring and expertise. |

The case for improving candidate screening

Screening has quietly become the top bottleneck in the hiring funnel. It’s no longer sourcing or interviewing that slows teams down. It’s the sheer volume of applications landing in the queue every day. When a single role attracts 250-plus candidates, even a two-minute review per resume adds up to over eight hours of work before a single interview is scheduled.
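
To make that arithmetic concrete, here is a quick back-of-envelope calculation in Python. The two-minute review time and the 250-application count come from the paragraph above, and 257.5 is the 2025 average cited below; the helper name `review_hours` is purely illustrative.

```python
# Back-of-envelope manual screening workload for one role.
MINUTES_PER_RESUME = 2  # a quick two-minute skim per resume

def review_hours(applications: float, minutes_each: float) -> float:
    """Total manual review time, in hours, before any interview is scheduled."""
    return applications * minutes_each / 60

print(f"{review_hours(250, MINUTES_PER_RESUME):.1f} hours")    # 8.3 hours
print(f"{review_hours(257.5, MINUTES_PER_RESUME):.1f} hours")  # 8.6 hours at the 2025 average
```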

The numbers tell a clear story. Time-to-screen dropped to 7.2 days (13% faster year over year), yet the average role now receives 257.5 applications, a 24% increase. Meanwhile, 72% of companies report facing talent scarcity, meaning the pressure to find the right person faster has never been higher.

| Metric | 2025 Benchmark | Year-over-year change |
|---|---|---|
| Applications per role | 257.5 | +24% |
| Time-to-screen | 7.2 days | 13% faster |
| Companies facing talent scarcity | 72% | Ongoing |

The business costs of poor screening go well beyond slow hiring. Here’s what’s actually at stake:

- Recruiter burnout: Manual review at scale is unsustainable and leads to high turnover on your own HR team.

- Missed talent: Qualified candidates get buried under volume and never surface.

- Slow hiring cycles: Delayed decisions push top candidates toward competitors.

- Inconsistent evaluation: Without structured criteria, two reviewers score the same resume differently.

- Damaged candidate experience: Employers hiring faster are still struggling with candidate engagement and experience, signaling that speed alone isn’t enough.

“The volume problem isn’t going away. Organizations that don’t systematize screening will keep losing ground on both speed and quality.”

Improving screening isn’t just about efficiency. It’s about protecting the integrity of your entire hiring pipeline. When you fix screening, everything downstream, from interviews to onboarding, improves too. Efficient, AI-assisted candidate screening is now a competitive advantage, not a nice-to-have.

How AI-powered screening transforms hiring outcomes

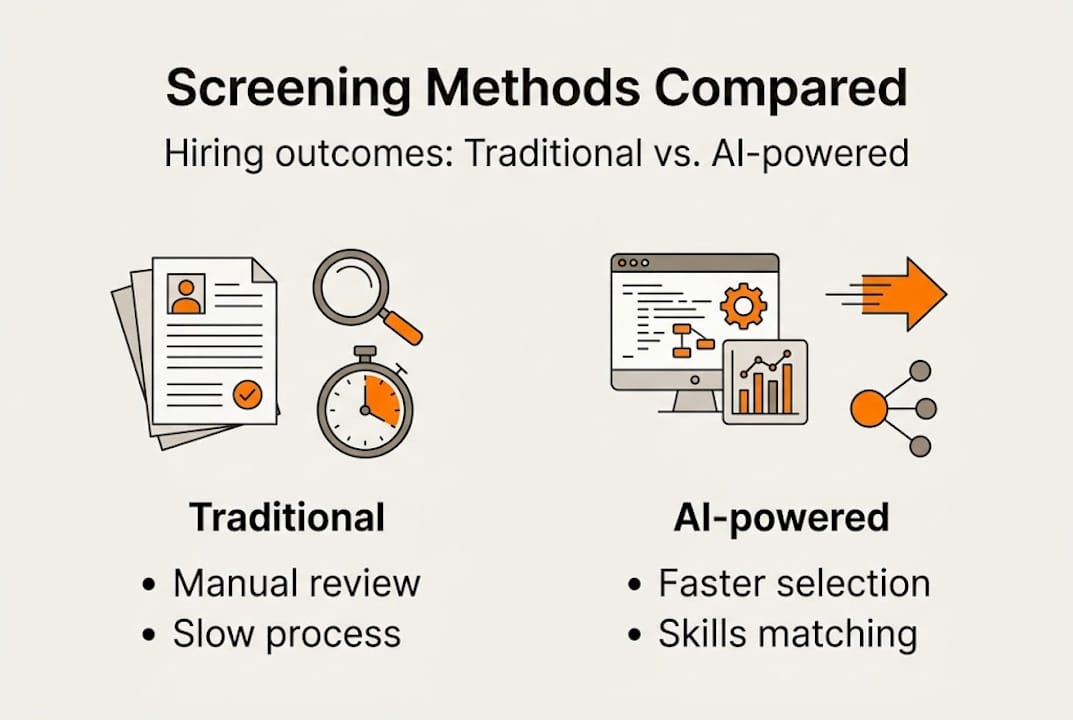

Once the need for improvement is clear, the next step is understanding how modern AI tools actually solve these challenges. The shift from manual to AI-powered screening isn’t just faster. It’s fundamentally more consistent and more defensible.

AI screening tools parse resumes using natural language processing (NLP), match candidates against structured skills frameworks, and apply consistent scoring rubrics across every application. The result is a ranked shortlist that reflects actual job requirements, not a recruiter’s memory of what the hiring manager mentioned in a meeting.

The performance data is striking. AI-led interviews achieve a 53% success rate in subsequent human interviews, compared to just 29% with traditional methods. That’s nearly double the conversion rate. The same analysis points to an 87% cost reduction and 24 to 30% higher assessment consistency.

Comparison: Traditional vs. AI-powered screening

| Factor | Traditional screening | AI-powered screening |
|---|---|---|
| Speed | Days to weeks | Hours to 1 day |
| Consistency | Varies by reviewer | Standardized rubrics |
| Bias risk | High (unconscious) | Lower with proper design |
| Cost per screen | High | Up to 87% lower |
| Quality-of-hire (success rate in subsequent human interviews) | 29% | 53% |

Beyond speed and cost, AI unlocks capabilities that manual review simply can’t match:

- Skills ontology matching: AI maps candidate skills to structured frameworks, catching qualified candidates who don’t use exact keywords.

- Candidate prioritization: Ranked shortlists let recruiters focus attention where it matters most.

- Transparency in scoring: Every decision is logged, making it easier to audit and defend.

- Bias reduction: AI improves skills-based screening and reduces bias when the system is designed with proper guardrails and human oversight.
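
To illustrate skills ontology matching, here is a minimal Python sketch that assumes a tiny, hand-built ontology. `SKILL_ONTOLOGY` and the function names are hypothetical; production tools rely on much larger taxonomies and semantic models. The point it shows is why an exact-keyword filter can reject a resume that a synonym-aware match accepts.

```python
# Hypothetical mini ontology: canonical skill -> aliases candidates might write.
SKILL_ONTOLOGY: dict[str, set[str]] = {
    "python": {"python", "python3"},
    "machine learning": {"machine learning", "ml", "predictive modeling"},
    "sql": {"sql", "postgresql", "mysql"},
}

# Reverse index so each alias resolves to its canonical skill.
ALIAS_TO_SKILL = {
    alias: skill for skill, aliases in SKILL_ONTOLOGY.items() for alias in aliases
}

def exact_keyword_hits(terms: list[str], required: set[str]) -> set[str]:
    """Naive filter: only literal (case-insensitive) matches count."""
    return {t.strip().lower() for t in terms} & required

def ontology_hits(terms: list[str], required: set[str]) -> set[str]:
    """Normalize each term to its canonical skill before matching."""
    canonical = {ALIAS_TO_SKILL.get(t.strip().lower()) for t in terms}
    return canonical & required

required = {"python", "machine learning", "sql"}
resume_terms = ["Python3", "Predictive Modeling", "PostgreSQL"]
print(sorted(exact_keyword_hits(resume_terms, required)))  # []
print(sorted(ontology_hits(resume_terms, required)))       # ['machine learning', 'python', 'sql']
```

The same resume scores zero under exact matching and a full match once terms are normalized, which is the keyword-rigidity failure discussed later in this article.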

Pro Tip: Don’t treat AI as a replacement for human judgment. Pair automated scoring with a structured human review at the shortlist stage. Teams that use AI alongside human decisions consistently outperform those that go fully automated.

Inside AI screening: Mechanisms, frameworks, and best practices

With the benefits clear, HR leaders need to understand how to implement AI screening safely and effectively. The mechanics matter, because a poorly configured system can create more problems than it solves.

The typical AI screening workflow moves through four stages. First, NLP parses incoming resumes to extract skills, experience, and credentials. Second, a skills ontology matches extracted data against a structured job requirements framework using vector scoring. Third, candidates are ranked by fit score. Fourth, human reviewers handle edge cases, borderline candidates, and any applications that fall outside the model’s confidence threshold.
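
The four stages can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not how any particular vendor works: stage 1 (NLP parsing) is stubbed out as pre-extracted skill sets with a hypothetical `parse_confidence` value, and "vector scoring" is approximated by cosine similarity over binary skill vectors rather than learned embeddings. All names are illustrative.

```python
import math
from dataclasses import dataclass

# Hypothetical skill axes; real tools use large ontologies and learned embeddings.
SKILL_AXES = ["python", "sql", "statistics", "communication"]

@dataclass
class ParsedResume:
    """Stage 1 output (the parsing itself is stubbed out here)."""
    name: str
    skills: set[str]
    parse_confidence: float  # hypothetical 0..1 confidence reported by the parser

def to_vector(skills: set[str]) -> list[float]:
    return [1.0 if axis in skills else 0.0 for axis in SKILL_AXES]

def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def screen(resumes: list[ParsedResume], job_skills: set[str], min_confidence: float = 0.8):
    job_vec = to_vector(job_skills)
    ranked, human_queue = [], []
    for r in resumes:
        if r.parse_confidence < min_confidence:
            human_queue.append(r.name)  # Stage 4: below confidence threshold, route to a human
            continue
        fit = cosine(to_vector(r.skills), job_vec)  # Stage 2: vector match against requirements
        ranked.append((round(fit, 3), r.name))
    ranked.sort(reverse=True)  # Stage 3: highest fit score first
    return ranked, human_queue

pool = [
    ParsedResume("A", {"python", "sql", "statistics"}, 0.95),
    ParsedResume("B", {"python", "communication"}, 0.90),
    ParsedResume("C", {"sql"}, 0.50),
]
ranked, human_queue = screen(pool, {"python", "sql", "statistics"})
print(ranked)       # [(1.0, 'A'), (0.408, 'B')]
print(human_queue)  # ['C']
```

Borderline fit scores would normally also go to the human queue; that is left to score-band routing, which the setup steps below cover.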

Rubric design is where most implementations succeed or fail. Structured rubrics should allocate 60 to 70% of scoring weight to must-have criteria and 20 to 30% to nice-to-haves. Setting clear precision and recall targets before launch prevents the system from being either too strict (missing good candidates) or too loose (flooding reviewers with weak matches).
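
As a rough illustration of both ideas, the Python sketch below scores a candidate against a weighted rubric (70% must-haves, 30% nice-to-haves, within the ranges above) and measures precision and recall of the tool's "advance" decisions against a reviewer's labels. The criteria, weights, and candidate IDs are hypothetical. Precision asks "of the candidates the tool advanced, how many did reviewers consider qualified?"; recall asks "of the qualified candidates, how many did the tool advance?"

```python
# Hypothetical rubric: 70% of weight on must-haves, 30% on nice-to-haves.
RUBRIC = {
    "must_have": {"python": 0.40, "sql": 0.30},
    "nice_to_have": {"cloud": 0.20, "mentoring": 0.10},
}

def check_weights(rubric: dict) -> None:
    """Reject rubrics whose weight split falls outside the 60-70% / 20-30% guidance."""
    eps = 1e-9
    must = sum(rubric["must_have"].values())
    nice = sum(rubric["nice_to_have"].values())
    if not (0.60 - eps <= must <= 0.70 + eps):
        raise ValueError(f"must-haves carry {must:.0%} of weight; expected 60-70%")
    if not (0.20 - eps <= nice <= 0.30 + eps):
        raise ValueError(f"nice-to-haves carry {nice:.0%} of weight; expected 20-30%")

def rubric_score(ratings: dict[str, float], rubric: dict = RUBRIC) -> float:
    """Weighted sum of per-criterion ratings in [0, 1]; unrated criteria count as 0."""
    return sum(
        weight * ratings.get(criterion, 0.0)
        for group in rubric.values()
        for criterion, weight in group.items()
    )

def precision_recall(ai_advanced: set[str], reviewer_qualified: set[str]) -> tuple[float, float]:
    true_pos = len(ai_advanced & reviewer_qualified)
    precision = true_pos / len(ai_advanced) if ai_advanced else 0.0
    recall = true_pos / len(reviewer_qualified) if reviewer_qualified else 0.0
    return precision, recall

check_weights(RUBRIC)
print(round(rubric_score({"python": 1.0, "sql": 0.5, "cloud": 1.0}), 2))  # 0.75
p, r = precision_recall({"c1", "c2", "c3", "c4"}, {"c1", "c2", "c5"})
print(f"precision={p:.2f} recall={r:.2f}")  # precision=0.50 recall=0.67
```

Low precision means reviewers are flooded with weak matches; low recall means good candidates are being screened out. Agreeing on target values for both before launch gives the calibration step a concrete pass/fail bar.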

Here’s a step-by-step setup process for fair and effective AI screening:

- Define job requirements precisely. Work with hiring managers to separate must-haves from preferences before configuring the model.

- Build a structured rubric. Assign scoring weights to each criterion and document the rationale.

- Run a calibration test. Score a batch of past applications manually and compare results to the AI output. Adjust thresholds as needed.

- Set a human review checkpoint. Decide which score ranges trigger automatic advancement, human review, or rejection.

- Monitor outputs regularly. Track pass rates by demographic group and flag anomalies for review.

- Iterate based on hire quality. Feed performance data from hired candidates back into the model to improve future scoring.
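
For the monitoring step, one widely used heuristic in the U.S. is the "four-fifths rule" from the Uniform Guidelines on Employee Selection Procedures: a group whose selection rate is below 80% of the highest group's rate is treated as possible evidence of adverse impact. The Python sketch below applies that check to hypothetical pass-rate counts. It produces a signal for further review, not a legal determination, and the group labels and numbers are invented for illustration.

```python
def selection_rates(counts: dict[str, tuple[int, int]]) -> dict[str, float]:
    """counts maps group -> (advanced, applied); returns each group's pass rate."""
    return {group: adv / applied for group, (adv, applied) in counts.items() if applied > 0}

def four_fifths_flags(counts: dict[str, tuple[int, int]], threshold: float = 0.8) -> dict[str, float]:
    """Return groups whose pass rate is below `threshold` times the highest
    group's rate, mapped to their impact ratio."""
    rates = selection_rates(counts)
    if not rates:
        return {}
    best = max(rates.values())
    if best == 0:
        return {}
    return {g: round(rate / best, 3) for g, rate in rates.items() if rate / best < threshold}

# Hypothetical quarterly counts: group -> (advanced past screening, applied).
quarter = {"group_a": (60, 200), "group_b": (30, 150), "group_c": (45, 160)}
print(four_fifths_flags(quarter))  # {'group_b': 0.667}
```

With small applicant pools, ratios swing widely from chance alone, so a flag is a prompt to investigate the rubric and model rather than a conclusion.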

“The best AI screening implementations treat the model as a first-pass filter, not a final decision-maker. Human judgment at the shortlist stage is non-negotiable.”

Pro Tip: Stay current on compliance requirements. EEOC guidelines and local regulations around automated hiring tools are evolving quickly. Build AI candidate screening documentation into your process from day one so you’re audit-ready at all times.

Risks, challenges, and getting AI screening right

Even the best-designed AI systems face real-world complications. Understanding these risks before you deploy is what separates teams that benefit from AI from those that create new problems with it.

The most common screening pitfalls include:

- False negatives: Strong candidates rejected because their resume formatting or vocabulary didn’t match the model’s training data.

- Keyword rigidity: Systems that rely on exact-match keywords rather than semantic understanding miss qualified applicants.

- Undetected AI-generated resumes: AI-generated resumes inflate application volume and undermine screening accuracy, and 75% of recruiters can’t detect them.

- Bias amplification: If training data reflects historical hiring patterns, the model may perpetuate the same biases at scale.

- Legal exposure: Pre-screening lawsuits are increasing, particularly where automated tools make adverse decisions without documented justification.

The AI-generated resume problem deserves special attention. As candidates use AI tools to tailor applications at scale, the volume problem compounds. A single job posting can attract hundreds of AI-polished resumes that look qualified on the surface but don’t reflect actual skills. Skill-based assessments and structured test tasks are the most reliable way to verify what a resume claims.

“Transparency and regulatory compliance aren’t optional features. They’re the foundation that makes AI screening legally and ethically defensible.”

Actionable steps to mitigate these risks:

- Audit your model’s pass rates by demographic group every quarter.

- Add a practical skills assessment layer to validate resume claims.

- Document every automated decision with a clear rationale.

- Train recruiters to recognize AI-generated content patterns.

- Review your screening process annually against current regulatory guidance on AI in recruitment.
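
To make "document every automated decision" concrete, here is a minimal Python sketch of a per-decision audit record. It assumes a hypothetical score-band policy (advance at 0.75 or above, reject below 0.45, human review in between), similar to the checkpoint described in the setup steps. Field names and thresholds are illustrative, not a regulatory standard.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Optional

# Hypothetical score bands; tune these during calibration.
ADVANCE_AT = 0.75
REJECT_BELOW = 0.45

def route(score: float) -> str:
    """Map a rubric score (0..1) to an outcome band."""
    if score >= ADVANCE_AT:
        return "advance"
    if score < REJECT_BELOW:
        return "reject"
    return "human_review"

@dataclass
class ScreeningDecision:
    candidate_id: str
    requisition_id: str
    model_version: str
    score: float
    rationale: list[str]  # plain-language reasons a reviewer or auditor can read
    reviewed_by: Optional[str] = None  # filled in when a human confirms or overrides
    outcome: str = field(init=False, default="")
    timestamp: str = field(default_factory=lambda: datetime.now(timezone.utc).isoformat())

    def __post_init__(self) -> None:
        self.outcome = route(self.score)  # outcome always derives from the logged score

def to_audit_line(decision: ScreeningDecision) -> str:
    """Serialize one decision as a single JSON line for an append-only log."""
    return json.dumps(asdict(decision), sort_keys=True)

record = ScreeningDecision(
    candidate_id="cand-0042",
    requisition_id="req-17",
    model_version="rubric-v3",
    score=0.41,
    rationale=["Missing must-have: SQL", "Score 0.41 is below the 0.45 reject band"],
)
line = to_audit_line(record)
print(record.outcome)  # reject
```

Writing one JSON line per decision keeps the log append-only and easy to query, and storing the model version alongside the rationale makes it possible to explain a past decision even after the rubric changes.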

Why the smartest HR teams blend tech with human judgment

Here’s a view that doesn’t get enough airtime: more automation does not automatically mean better screening. Many organizations implement AI tools and then step back entirely, assuming the technology handles everything. That’s where outcomes deteriorate.

The highest-performing HR teams we observe don’t just deploy AI and monitor dashboards. They treat AI as a structured collaborator. They run regular calibration sessions where recruiters score a set of live applications manually and compare results to the model. Discrepancies become training data for both the AI and the team.

They also build structured feedback loops. When a hired candidate underperforms at 90 days, that signal goes back into the screening rubric. When a rejected candidate gets hired by a competitor and excels, that’s a false negative worth investigating. This continuous improvement cycle is what separates teams that achieve both strong DEI outcomes and fast hiring rates from those that optimize for one at the expense of the other.

The uncomfortable truth is that AI screening tools are only as good as the humans who configure, monitor, and refine them. Pairing AI with human decision-making isn’t a limitation. It’s the actual strategy. Teams that understand this build screening processes that get measurably better over time, rather than drifting toward bias or legal risk.

Streamline your screening with next-gen AI solutions

If the practices outlined here resonate, Testask gives you the infrastructure to put them into action. The platform lets you generate tailored test tasks for any role, evaluate candidate submissions with AI-assisted analysis, and collaborate with hiring managers on structured reviews, all in one place.

Testask is purpose-built for HR teams that want to move faster without sacrificing quality or fairness. You can automate the repetitive parts of screening, maintain human oversight where it counts, and build a defensible, consistent process that scales with your hiring volume. Whether you’re screening 50 candidates or 5,000, Testask helps your team focus on the decisions that matter most. Explore the platform and see how it fits your workflow.

Frequently asked questions

What are the biggest benefits of improving candidate screening?

Improving candidate screening delivers faster hiring cycles, better quality-of-hire, and lower costs. 89% of users report efficiency and time gains, with up to a 30% reduction in cost-per-hire when AI tools are applied with best practices.

How does AI screening reduce bias in hiring?

AI screening applies consistent rubrics and skills-based matching across every application, removing the inconsistency of manual review. Bias decreases when systems are designed with proper guardrails and monitored regularly by human reviewers.

What risks should HR watch for with AI-powered screening?

The primary risks are false negatives, undetected AI-generated resumes, and bias or non-compliance when systems aren’t actively monitored. 75% of recruiters currently can’t identify AI-generated resumes, making skills assessments a critical verification layer.

Do AI tools completely replace human recruiters in screening?

No. Human oversight remains essential for edge cases, compliance, and candidate experience. AI needs governance and a human touch to deliver results that are both effective and legally defensible.