AI candidate screening: Methods, pitfalls, and best practices

Most hiring managers assume AI screening means faster resume sorting. That framing undersells the technology by a wide margin. Modern AI screening systems apply machine learning, natural language processing, and semantic matching to evaluate candidates across dozens of dimensions simultaneously, ranking them against structured job rubrics and flagging gaps that a human reviewer might miss after reading a hundred applications in a single afternoon. The gap between what AI screening actually does and what most HR teams think it does is where costly missteps happen. This article covers the real mechanics, the methodologies driving ranking decisions, the bias risks you cannot ignore, and the practical steps to implement AI screening with confidence.

Table of Contents

- How AI parses and ranks candidates

- Skills-first methodologies and ranking frameworks

- Bias risks and mitigation strategies

- Ensuring ongoing quality and practical implementation

- The uncomfortable truth: AI is only as fair as its frameworks

- Transform your screening process with AI-powered tools

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| AI screening workflow | AI systems parse resumes with machine learning and NLP, then rank candidates based on skills and job rubrics. |
| Skills-first ranking | Prioritizing must-have skills allows for more objective comparisons and greater clarity in candidate selection. |
| Bias mitigation required | Active bias monitoring and mitigation strategies are essential to safeguard fairness and compliance. |
| Benchmark monitoring | HR teams should track AI performance over time, especially for standardized roles, to maintain hiring quality. |
| Human oversight matters | Relying fully on AI screening risks replicating existing biases; expert review and iteration are critical for ethical hiring. |

How AI parses and ranks candidates

Having introduced AI’s broader impact, let’s break down how it actually screens candidates step by step.

AI screening is not a single action. It is a sequence of tightly connected processes, each building on the last. The system ingests raw resume data, normalizes inconsistent formatting, extracts skills and experience, maps them to a job rubric, assigns scores, and produces a ranked output. Each stage has meaningful implications for who rises to the top of your shortlist.

AI screening primarily uses machine learning, NLP, and semantic matching to parse resumes, extract skills, score against job rubrics, and rank candidates, producing outputs that go far beyond keyword matching.

Here is what the process looks like from intake to ranking:

- Data ingestion: The system accepts resumes in multiple formats, including PDF, DOCX, and plain text, then normalizes them into a structured schema.

- Entity extraction: NLP models identify job titles, tenure, educational credentials, certifications, and technical skills. Modern systems use resume parsing methods that recognize context, not just keywords.

- Semantic matching: Rather than searching for exact keyword matches, embedding models map both the resume and the job description into a shared vector space. Candidates whose profiles sit closest to the target role surface higher.

- Rubric scoring: Each extracted attribute is scored against weighted criteria defined in the job rubric. Must-have competencies carry more weight than nice-to-haves.

- Ranking and explainability: Candidates receive a composite score. Many enterprise-grade tools also generate explanations showing which criteria drove each score, giving your team a defensible basis for shortlisting decisions.
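To make the scoring and ranking stages concrete, here is a minimal Python sketch. It assumes the extraction and matching stages have already produced a match score between 0 and 1 for each rubric criterion; the rubric, the candidate data, and the `score_candidate`, `rank`, and `explain` helpers are all hypothetical and do not reflect any particular vendor's API.

```python
from dataclasses import dataclass, field

# Hypothetical rubric for a sales role: criterion -> weight. Weights sum to 1.0.
RUBRIC = {
    "b2b_sales_experience": 0.40,
    "crm_proficiency": 0.25,
    "negotiation": 0.20,
    "industry_knowledge": 0.15,
}


@dataclass
class ScoredCandidate:
    name: str
    composite: float
    # Weighted points per criterion; doubles as the explanation for the score.
    contributions: dict = field(default_factory=dict)


def score_candidate(name, criterion_scores, rubric=RUBRIC):
    """Combine per-criterion match scores (0.0 to 1.0) into a weighted composite.

    A criterion missing from criterion_scores counts as 0 (no evidence found).
    """
    contributions = {
        criterion: weight * criterion_scores.get(criterion, 0.0)
        for criterion, weight in rubric.items()
    }
    return ScoredCandidate(name, sum(contributions.values()), contributions)


def rank(pool, rubric=RUBRIC):
    """Score every candidate and sort by composite score, highest first."""
    scored = [score_candidate(name, scores, rubric) for name, scores in pool.items()]
    return sorted(scored, key=lambda c: c.composite, reverse=True)


def explain(candidate, top_n=2):
    """Name the criteria that contributed the most points to the composite."""
    drivers = sorted(candidate.contributions.items(), key=lambda kv: kv[1], reverse=True)
    parts = ", ".join(f"{criterion} (+{points:.2f})" for criterion, points in drivers[:top_n])
    return f"{candidate.name}: {candidate.composite:.2f}, driven by {parts}"


# Hypothetical per-criterion match scores from the extraction and matching stages.
pool = {
    "Candidate A": {"b2b_sales_experience": 0.9, "crm_proficiency": 0.5,
                    "negotiation": 0.7, "industry_knowledge": 0.4},
    "Candidate B": {"b2b_sales_experience": 0.6, "crm_proficiency": 0.9,
                    "negotiation": 0.8, "industry_knowledge": 0.9},
}

if __name__ == "__main__":
    for candidate in rank(pool):
        print(explain(candidate))
```

In this example Candidate B ranks first despite a weaker score on the heaviest criterion, because its evidence is stronger across the other three. The per-criterion contributions make that trade-off visible to a reviewer instead of hiding it inside a single number.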

The table below shows a typical AI screening workflow with the tools and outputs at each stage.

| Stage | Process | Output |
|---|---|---|
| 1. Ingestion | Multi-format resume intake | Normalized candidate record |
| 2. Extraction | NLP entity and skill extraction | Structured skill and experience data |
| 3. Matching | Semantic embedding comparison | Similarity scores per criterion |
| 4. Scoring | Rubric-weighted attribute scoring | Composite candidate score |
| 5. Ranking | Score aggregation and sort | Ranked shortlist with explanations |

With this workflow as a reference, HR teams can map their current process against each stage and identify where manual bottlenecks are slowing things down. The explainability layer at stage five is particularly important, because it allows hiring managers to audit why a candidate ranked where they did, rather than treating the output as a black box.

Skills-first methodologies and ranking frameworks

With the basic mechanics covered, we can now look at the more advanced methodologies that drive candidate ranking.

The way you weight skills inside a rubric has a bigger effect on ranking outcomes than most HR teams realize. Shift a must-have criterion by 10 percentage points and your entire shortlist can reorder. This is not a flaw in the system; it is exactly how it is supposed to work. The methodology you choose has direct consequences for who gets through.

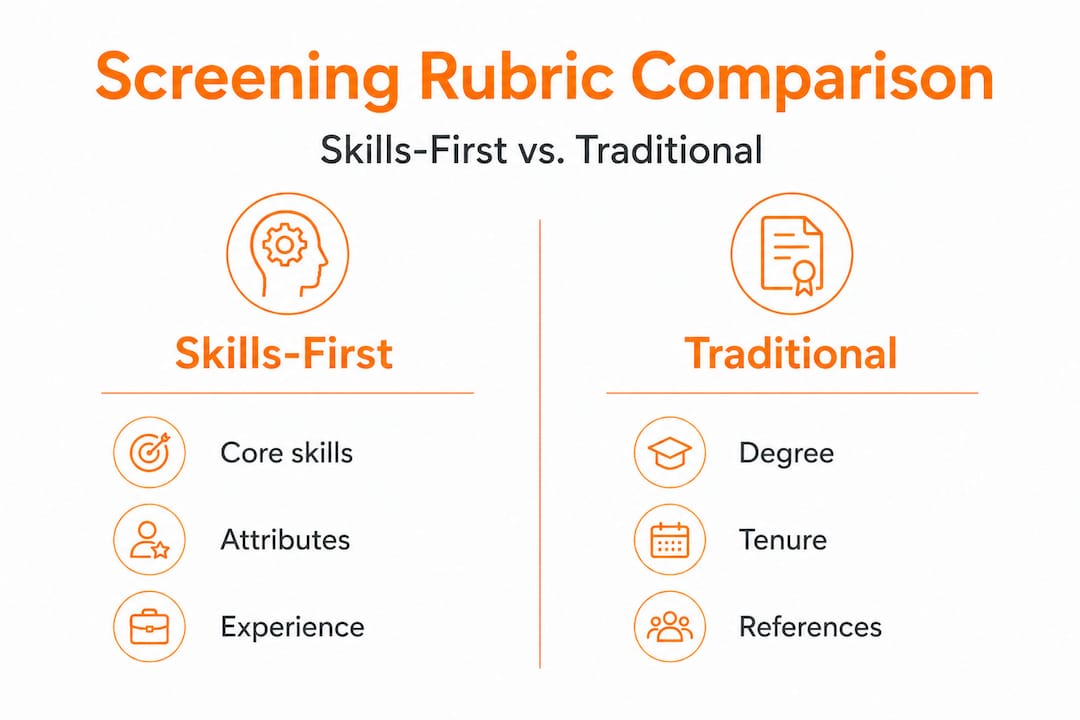

The leading approaches use skills-first rubrics that assign explicit percentage weights across three tiers. Must-have competencies typically carry 60 to 70 percent of the weight, nice-to-have skills account for 20 to 30 percent, and potential indicators such as learning agility or adjacent experience fill the remaining balance. This structure makes it harder for a candidate who misses a core requirement to mask the gap with an impressive credential elsewhere.
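To see how sensitive a shortlist is to these weights, here is a minimal Python sketch of a three-tier rubric. The 60/30/10 and 70/20/10 splits sit within the ranges described above; the tier names, candidate scores, and helper functions are hypothetical. Moving 10 points from the nice-to-have tier to the must-have tier is enough to flip the order of two candidates.

```python
import math

# Hypothetical tier scores (0.0 to 1.0) for two candidates.
POOL = {
    "Candidate X": {"must_have": 0.70, "nice_to_have": 0.95, "potential": 0.50},
    "Candidate Y": {"must_have": 0.80, "nice_to_have": 0.60, "potential": 0.60},
}

# Two tier weightings that differ by 10 points on the must-have tier.
WEIGHTS_60 = {"must_have": 0.60, "nice_to_have": 0.30, "potential": 0.10}
WEIGHTS_70 = {"must_have": 0.70, "nice_to_have": 0.20, "potential": 0.10}


def tiered_score(tier_scores, weights):
    """Weighted sum of tier scores; the weights must add up to 1.0."""
    if not math.isclose(sum(weights.values()), 1.0):
        raise ValueError("tier weights must sum to 1.0")
    return sum(weight * tier_scores[tier] for tier, weight in weights.items())


def shortlist(pool, weights):
    """Candidate names ordered by tiered score, best first."""
    return sorted(pool, key=lambda name: tiered_score(pool[name], weights), reverse=True)


if __name__ == "__main__":
    print(shortlist(POOL, WEIGHTS_60))  # X scores about 0.755, Y about 0.72
    print(shortlist(POOL, WEIGHTS_70))  # Y scores about 0.74, X about 0.73
```

Notice that under the 60 percent weighting, Candidate X wins with the weaker must-have score. A weighted sum is compensatory, so tier weights reduce masking rather than rule it out; if a core requirement must never be offset, a minimum must-have threshold applied before scoring is one way to enforce that.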

Embedding-based matching takes this further. Systems built on transformer models such as BERT (Bidirectional Encoder Representations from Transformers) or its variant RoBERTa generate dense vector representations of both resumes and job descriptions. Tools like Resume2Vec apply this approach specifically to career data, enabling the model to recognize that “managed cross-functional teams” and “led interdepartmental projects” describe similar experience, even though the phrases share no words. When embedding models do the matching, the rubric’s influence on the final ranking grows, because they capture nuanced equivalences that simple keyword logic would miss.
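The comparison step behind semantic matching is commonly cosine similarity between embedding vectors. The sketch below shows only that geometry: the four-dimensional vectors are invented for illustration, whereas a real system would obtain them from a BERT-style encoder. No model, and nothing from Resume2Vec, is called here.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1.0 means same direction, 0.0 orthogonal."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # a zero vector has no direction to compare
    return dot / (norm_a * norm_b)


# Made-up 4-dimensional vectors standing in for encoder output,
# which typically has hundreds of dimensions.
job_vector = [0.8, 0.1, 0.5, 0.2]
phrase_vectors = {
    "managed cross-functional teams": [0.7, 0.2, 0.6, 0.1],
    "led interdepartmental projects": [0.75, 0.15, 0.55, 0.2],
    "maintained office supply inventory": [0.1, 0.9, 0.0, 0.4],
}

# Rank phrases by how closely they point in the job description's direction.
ranked = sorted(
    phrase_vectors,
    key=lambda phrase: cosine_similarity(phrase_vectors[phrase], job_vector),
    reverse=True,
)

if __name__ == "__main__":
    for phrase in ranked:
        print(f"{cosine_similarity(phrase_vectors[phrase], job_vector):.3f}  {phrase}")
```

Because the two leadership phrases point in nearly the same direction, they score close to each other and close to the job vector, while the unrelated phrase falls to the bottom, even though none of the phrases share a keyword with each other.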

Here is a practical framework for setting up a skills-first ranking system:

- Define must-have criteria with explicit performance benchmarks, not vague descriptors. “Five or more years of B2B sales experience with a documented close rate above 30 percent” is measurable. “Strong communicator” is not.

- Assign percentage weights to each criterion before the system processes a single resume. Doing this after you have seen results introduces confirmation bias into the rubric design.

- Configure semantic matching using a job description written for the AI, not the candidate. Dense, specific language produces better vector representations than polished marketing copy.

- Run a calibration pass on a sample of known strong and weak candidates before going live. Check whether the rankings align with your human judgment.

- Review and adjust weights after each hiring cycle based on the actual on-the-job performance of candidates who were hired.
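The calibration pass can be as simple as checking how many candidates your reviewers already judged strong land in the AI's top k. The sketch below uses precision and recall at k, which is one reasonable agreement measure rather than a method the steps above prescribe; the candidate IDs and labels are hypothetical.

```python
def calibration_report(ai_ranking, known_strong, k):
    """Compare the AI's top-k against candidates that reviewers already rated strong.

    ai_ranking: candidate IDs ordered best-first by the screening tool.
    known_strong: set of IDs that human reviewers judged strong.
    """
    if not 1 <= k <= len(ai_ranking):
        raise ValueError("k must be between 1 and the number of ranked candidates")
    top_k = set(ai_ranking[:k])
    hits = top_k & known_strong
    return {
        # Share of the AI's top-k that reviewers also rated strong.
        "precision_at_k": len(hits) / k,
        # Share of the known-strong candidates that made the AI's top-k.
        "recall_at_k": len(hits) / len(known_strong) if known_strong else 0.0,
        # Strong candidates the AI ranked below k: review these first.
        "missed_strong": sorted(known_strong - top_k),
    }


# Hypothetical calibration sample: eight past applicants with known outcomes.
ai_ranking = ["c1", "c4", "c2", "c7", "c3", "c5", "c6", "c8"]
known_strong = {"c1", "c2", "c3", "c4"}

if __name__ == "__main__":
    print(calibration_report(ai_ranking, known_strong, k=4))
```

The missed candidates are often the most useful output: inspecting which criteria held someone like `c3` down can point to a weight or a must-have definition that needs adjusting before go-live.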

Pro Tip: Use conversational AI interviews as a validation layer after initial screening. These asynchronous video or text-based interviews ask candidates structured questions tied directly to the must-have criteria, giving you behavioral evidence that confirms or challenges what the resume data suggests. This approach to AI talent matching reduces the risk of relying exclusively on document-based signals.

| Criterion | Skills-first rubric | Traditional criteria |
|---|---|---|
| Core technical skills | 60 to 70% of total score | Listed qualifications, equal weight |
| Soft skills validation | Structured AI interview | Subjective resume impression |
| Experience weighting | Semantic similarity to role | Job title and years only |
| Credential recognition | Context-aware extraction | Keyword match |
| Audit trail | Explainable score per criterion | Reviewer notes (inconsistent) |

The comparison above makes the structural advantage clear. Skills-first systems force you to define what good looks like before you start screening, which makes every subsequent decision more defensible and more consistent.

Bias risks and mitigation strategies

With methodologies in mind, let’s address the biggest challenge: bias and compliance in AI screening.

AI does not eliminate bias. It encodes it differently. This distinction matters enormously, because the confidence that comes with automated scoring can make bias harder to detect and easier to scale.

Research on large language model screening behavior reveals ordinal bias as a specific risk: LLMs tend to favor the first resume they process in a batch, independent of quality. In a high-volume hiring scenario, this means the order in which applications arrive can influence who gets selected, a factor completely unrelated to job-relevant skills. The same research found that candidates with names associated with Black or Hispanic backgrounds were required to present higher-cost credentials to clear the same selection threshold as candidates with majority-group names.

Historical training data introduces a separate risk. When an AI system learns from past hiring decisions made by humans who carried their own biases, the model replicates those patterns at scale. If your company historically hired engineers from a narrow set of universities, the model learns to weight those institutions higher, filtering out equally qualified candidates from non-traditional educational paths. Under EEOC guidance, employers can be held liable for discriminatory outcomes caused by AI tools, whether or not those outcomes were intentional, which makes this a compliance issue, not just an ethical one.

Effective mitigation requires a layered approach. Consider the following practices:

- Anonymize application data during the initial screening pass. Remove names, photos, graduation years, and location indicators that can serve as proxies for protected characteristics.

- Audit for adverse impact regularly by analyzing selection rates across demographic groups. If one group is being screened out at a disproportionate rate, that is a signal the rubric or the training data needs review. Learn more about human-in-the-loop bias mitigation approaches that keep reviewers engaged at critical decision points.

- Randomize application order to counteract ordinal bias. Do not let queue position influence who the AI evaluates most favorably.

- Maintain human oversight at every shortlisting decision. AI scoring informs the decision; it does not make it. A hiring manager who reviews assessment best practices before deploying a new tool will know where human judgment needs to remain in the loop.

- Retrain models periodically using updated data that reflects your current diversity and performance goals, not historical hiring patterns you are actively trying to move away from.
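Two of these practices, anonymization and randomized ordering, are straightforward to apply before records reach the screening model. The sketch below assumes candidate records arrive as dictionaries; the field names, the `anonymize` and `blind_queue` helpers, and the sample data are hypothetical. Note that dropping structured fields does not remove proxies buried in free text, such as a street address or university name inside the resume body.

```python
import random

# Hypothetical field names; map them to whatever your applicant tracking system exports.
PROXY_FIELDS = {"name", "photo_url", "graduation_year", "location"}


def anonymize(record):
    """Return a copy of the record without fields that can act as demographic proxies.

    An opaque candidate_id is kept so reviewers can re-identify shortlisted people later.
    """
    return {key: value for key, value in record.items() if key not in PROXY_FIELDS}


def blind_queue(records, seed=None):
    """Anonymize records, then shuffle them so arrival order does not set evaluation order.

    Passing a fixed seed makes the order reproducible, which helps when auditing a run.
    """
    queue = [anonymize(record) for record in records]
    random.Random(seed).shuffle(queue)
    return queue


applications = [
    {"candidate_id": "a-101", "name": "Applicant One", "graduation_year": 2009,
     "location": "Springfield", "skills": ["sql", "python"]},
    {"candidate_id": "a-102", "name": "Applicant Two", "photo_url": "https://example.com/p.jpg",
     "skills": ["excel", "sql"]},
    {"candidate_id": "a-103", "name": "Applicant Three", "location": "Riverton",
     "skills": ["python", "tableau"]},
]

if __name__ == "__main__":
    for record in blind_queue(applications, seed=42):
        print(record)
```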

Pro Tip: Set a calendar reminder to conduct a bias review and adverse impact audit every quarter, not just at implementation. Model performance drifts over time as job requirements and candidate pools evolve. Quarterly audits help catch drift before it compounds.
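A common first-pass check for the quarterly audit is the four-fifths rule from the US Uniform Guidelines on Employee Selection Procedures: a group whose selection rate is below 80 percent of the highest group's rate is generally regarded as evidence of adverse impact. The sketch below computes those impact ratios. It is a screening heuristic, not a legal determination, and small groups produce noisy ratios; the group labels and counts are hypothetical.

```python
def impact_ratios(outcomes):
    """Each group's selection rate divided by the highest group's selection rate.

    outcomes: {group_label: (number_selected, number_of_applicants)}
    """
    rates = {
        group: selected / applicants
        for group, (selected, applicants) in outcomes.items()
        if applicants > 0  # a group with no applicants has no rate to compare
    }
    if not rates or max(rates.values()) == 0:
        return {}  # nobody selected: ratios are undefined
    top_rate = max(rates.values())
    return {group: rate / top_rate for group, rate in rates.items()}


def flag_adverse_impact(outcomes, threshold=0.8):
    """Groups whose impact ratio falls below the four-fifths (0.8) rule of thumb."""
    return sorted(group for group, ratio in impact_ratios(outcomes).items() if ratio < threshold)


# Hypothetical quarter of outcomes: (advanced past the AI screen, applied).
quarter = {
    "group_a": (48, 120),  # 40% selection rate
    "group_b": (30, 100),  # 30% selection rate
    "group_c": (18, 50),   # 36% selection rate
}

if __name__ == "__main__":
    print(impact_ratios(quarter))
    print(flag_adverse_impact(quarter))
```

Here `group_b` advances at 75 percent of `group_a`'s rate, so it is flagged for a closer look at the rubric and training data.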

Ensuring ongoing quality and practical implementation

With the bias risks covered, let’s get practical: how HR teams can implement AI screening confidently.

AI screening works best in contexts where job requirements are consistent and measurable. Standardized roles with clear skill profiles, high application volumes, and well-defined rubrics are the environments where automation delivers the most value with the least risk. Roles requiring complex judgment, rare competencies, or significant cultural context are better served by AI as a support tool rather than a primary filter.

According to HR recruitment guidance, AI screening works best for standardized roles, complements structured interviews effectively, and requires active monitoring to sustain benchmark quality over time. Benchmark drift, where the model’s performance gradually degrades as the talent pool or role requirements shift, is a common reason AI screening programs underperform after their initial deployment.
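The guidance does not specify how to measure drift. One widely used option, swapped in here as an illustration, is the Population Stability Index (PSI), which compares the distribution of screening scores in a baseline period against a recent one. The 0.1 and 0.25 bands below are a rule-of-thumb convention borrowed from credit-risk modeling, not a hiring standard, and the bin edges and scores are hypothetical. A shift in the score distribution tells you the pool or the model has changed; it does not tell you whether scores still predict performance.

```python
import bisect
import math


def bin_shares(scores, edges, eps=1e-6):
    """Share of scores in each bin [edges[i], edges[i+1]); the top edge joins the last bin."""
    counts = [0] * (len(edges) - 1)
    for score in scores:
        if not edges[0] <= score <= edges[-1]:
            continue  # this sketch simply ignores out-of-range scores
        index = min(bisect.bisect_right(edges, score) - 1, len(counts) - 1)
        counts[index] += 1
    total = sum(counts)
    if total == 0:
        raise ValueError("no scores fell inside the bin edges")
    # eps stands in for empty bins so the log below stays finite.
    return [max(count / total, eps) for count in counts]


def population_stability_index(baseline, recent, edges):
    """PSI = sum over bins of (recent share - baseline share) * ln(recent share / baseline share)."""
    expected = bin_shares(baseline, edges)
    actual = bin_shares(recent, edges)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


def drift_label(psi):
    """Rule-of-thumb bands; tune them for your own roles and volumes."""
    if psi < 0.1:
        return "stable"
    if psi < 0.25:
        return "moderate shift"
    return "significant shift"


EDGES = [0.0, 0.25, 0.5, 0.75, 1.0]
# Hypothetical composite scores from the pilot quarter and the latest quarter.
baseline_scores = [0.1, 0.3, 0.6, 0.9] * 25
recent_scores = [0.1] * 10 + [0.3] * 20 + [0.6] * 30 + [0.9] * 40

if __name__ == "__main__":
    psi = population_stability_index(baseline_scores, recent_scores, EDGES)
    print(f"PSI = {psi:.3f} ({drift_label(psi)})")
```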

Follow these steps to implement AI screening in a way that holds up over time:

- Audit your current screening process before introducing AI. Document where time is being lost, where inconsistency enters decisions, and which roles generate the highest application volumes.

- Select AI screening tools that provide explainable outputs, not just ranked lists. You need to understand why a candidate scored where they did in order to defend that decision if challenged.

- Pilot on a single role type before rolling out broadly. Choose a role with a large, consistent applicant pool and a clear performance benchmark.

- Integrate with structured interviews so the AI shortlist feeds directly into a standardized interview process. Refer to your recruitment checklist to ensure every step is coordinated.

- Track post-hire performance and connect it back to the AI’s screening scores. If high-scoring candidates are underperforming, your rubric needs recalibration.
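Connecting post-hire performance back to screening scores can start with a correlation and a list of high scorers who are struggling. The sketch below assumes you can pair each hire's screening score with a later performance rating; the data, cutoffs, and helper names are hypothetical. Two caveats apply: only hired candidates have ratings, so the correlation is computed on a pool the screen has already filtered, and with a handful of hires the number is noisy.

```python
import math


def pearson(xs, ys):
    """Pearson correlation between two equal-length series."""
    if len(xs) != len(ys) or len(xs) < 3:
        raise ValueError("need at least three paired observations")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    if spread_x == 0 or spread_y == 0:
        raise ValueError("correlation is undefined when a series is constant")
    return cov / (spread_x * spread_y)


def high_score_underperformers(hires, score_cutoff, rating_cutoff):
    """Hires the screen scored highly who are now rated at or below the rating cutoff."""
    return sorted(
        hire for hire, (score, rating) in hires.items()
        if score >= score_cutoff and rating <= rating_cutoff
    )


# Hypothetical hires: (AI screening score 0-1, manager performance rating 1-5).
hires = {
    "h1": (0.91, 4.5),
    "h2": (0.85, 2.0),
    "h3": (0.72, 3.8),
    "h4": (0.64, 3.0),
    "h5": (0.58, 2.5),
}

if __name__ == "__main__":
    scores, ratings = zip(*hires.values())
    print(f"score/performance correlation: {pearson(scores, ratings):.2f}")
    print("review these rubric matches:", high_score_underperformers(hires, 0.8, 2.5))
```

A weak correlation or a growing list of high-scoring underperformers is the signal to revisit the rubric weights.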

Common pitfalls and how to avoid them:

- Over-relying on AI for final decisions: Keep humans in the loop at the shortlisting and offer stages.

- Setting weights without testing: Calibrate rubric weights against known outcomes before going live.

- Ignoring demographic data: Running screening without monitoring selection rates across groups is a compliance risk.

- Neglecting model updates: A rubric that worked in 2024 may not reflect the skills landscape in 2026. Update your talent acquisition strategies regularly to account for shifting role requirements.

- Treating AI screening as set-and-forget: Ongoing quality requires ongoing attention. Schedule regular reviews of model outputs against business results.

The uncomfortable truth: AI is only as fair as its frameworks

Here is a perspective that most vendor guides do not include. The premise that AI removes human bias from hiring rests on a flawed assumption: that the frameworks feeding the AI are themselves neutral. They are not.

Every rubric reflects a decision about what matters. Every training dataset reflects who got hired in the past, by humans with their own assumptions about what a strong candidate looks like. The AI does not introduce neutrality into these inputs. It amplifies and scales whatever values are already encoded in them. This means that deploying AI screening without interrogating your rubric design and your training data is not a step toward fairness. It is a step toward faster, higher-volume replication of existing patterns.

The more uncomfortable truth is that bias can be easier to ignore when it comes wrapped in a confidence score. A human reviewer who passes over a qualified candidate might reflect on that decision. An algorithm that deprioritizes the same candidate generates no such reflection. This is why AI talent matching tools need to be treated as evolving partners that require oversight, not as objective arbiters of candidate quality.

The teams getting the most from AI screening are not the ones that automate the most. They are the ones that use AI to surface signal quickly, then apply rigorous human judgment at the decision points that matter. They treat their rubrics as hypotheses to be tested against outcomes, not truths to be enforced. That mindset, more than any specific tool, is what separates responsible AI screening from the alternative.

Transform your screening process with AI-powered tools

Putting AI screening methodology into practice requires more than the right framework. You need a platform built for the realities of modern recruitment.

Testask is an AI-powered assessment platform that gives HR teams and hiring managers the tools to create tailored test tasks, evaluate candidate submissions with AI-assisted analysis, and collaborate on reviews in one centralized workflow. Rather than replacing human judgment, Testask is designed to sharpen it. You set the rubric; the AI accelerates the evaluation. From skills-based task design to structured candidate scoring, every feature is built to help mid-sized companies screen faster and hire with greater confidence. Explore the AI assessment subscription options to find the plan that fits your team’s hiring volume and workflow.

Frequently asked questions

What is the main advantage of AI in candidate screening?

AI quickly evaluates large pools of resumes using skill-focused rubrics and semantic matching, streamlining decision-making and reducing manual effort. By ranking candidates against structured job criteria, it helps base shortlisting decisions on consistent, measurable standards rather than reviewer fatigue or intuition.

How does AI bias impact diversity in hiring?

AI bias can lead to unequal thresholds for candidates based on name or race, risking exclusion of non-traditional or minority applicants unless actively mitigated. Research shows that ordinal bias in LLMs and name-based proxies can disadvantage qualified candidates before any human reviewer sees their application.

Are employers legally responsible for AI-related discrimination?

Yes. Under EEOC guidance, employers can be held liable for discriminatory impacts caused by AI screening methods, which calls for active monitoring and mitigation regardless of whether the bias was intentional or introduced by the vendor.

What practical steps can HR take to reduce AI bias?

Integrate blind reviews, conduct regular adverse impact audits, and maintain human oversight throughout the screening workflow. The SHRM guidance specifically recommends combining skills-focused rubrics with structured human review to catch and correct disparate impact before it becomes a compliance issue.
