Recruitment assessment steps: your guide to bias-free hiring

Hiring the wrong person is expensive. Commonly cited estimates put the cost of a bad hire at 30% to 150% of that person’s annual salary, and the damage extends well beyond budget. When your recruitment assessment process relies on gut feel, inconsistent interviews, or poorly defined criteria, those risks compound quickly. A structured, step-by-step approach to recruitment assessment reduces them substantially. This guide walks HR leaders through every stage of building a fair, efficient, and defensible assessment process, from preparation to continuous improvement, with clear guidance on integrating AI tools responsibly.

Table of Contents

- Understanding recruitment assessment: what and why

- Preparation: defining roles, competencies, and requirements

- Step-by-step process: conducting fair and effective assessments

- Verification and continuous improvement: ensuring relevance and fairness

- A nuanced perspective: why structure alone isn’t enough in modern recruitment

- Transform your hiring with AI-powered recruitment assessments

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Structure reduces bias | Using the same assessment methods for all candidates cuts bias and boosts predictability in hiring results. |
| Preparation is critical | Defining roles and competencies beforehand ensures all hiring steps align with business needs. |
| AI streamlines but must be audited | AI can make assessments faster but needs careful monitoring to avoid hidden biases. |
| Continuous review sustains fairness | Regularly auditing and updating processes keeps recruitment assessments legally compliant and equitable. |
| No one-size-fits-all | Balancing structure, technology, and human insight leads to the most effective hiring decisions. |

Understanding recruitment assessment: what and why

Recruitment assessment is the systematic process of evaluating candidates against defined criteria to determine their suitability for a role. It includes everything from resume screening and structured interviews to skills tests, work sample tasks, and reference checks. The critical word is systematic. Without a consistent system, evaluators introduce personal biases, make decisions on incomplete information, and often reach contradictory conclusions about the same candidate.

The evidence for structured methods is strong. Structured interviews use the same questions for all candidates, scored on predefined rubrics (for example, a 1 to 5 scale with behavioral anchors), reducing bias and improving consistency. Unstructured approaches, by contrast, vary by interviewer and by day, making comparisons between candidates unreliable. Reviewing assessment best practices before you build your process helps you avoid the most common structural mistakes.

Here is a quick comparison of what structured versus unstructured assessments typically deliver:

| Dimension | Structured assessment | Unstructured assessment |
|---|---|---|
| Consistency | High: same process for all | Low: varies by evaluator |
| Predictive validity | Significantly higher | Lower, less reliable |
| Bias risk | Reduced through rubrics | Elevated due to subjectivity |
| Legal defensibility | Strong | Weak |
| Candidate experience | Equitable and clear | Inconsistent, often confusing |

A well-designed recruitment assessment process targets three core metrics:

- Consistency: Every candidate goes through the same evaluation steps under comparable conditions.

- Validity: The assessment actually measures what predicts job performance, not peripheral traits.

- Bias reduction: Structured scoring minimizes the influence of factors irrelevant to the role.

Working from a recruitment checklist from the outset keeps your process organized and auditable. Getting these foundations right before you open your first job posting saves time, reduces legal exposure, and produces better hires.

Preparation: defining roles, competencies, and requirements

Before you assess anyone, you need to know exactly what you are assessing for. This sounds obvious, but many organizations skip or rush this step, then wonder why their assessments feel arbitrary. The preparation phase is where you set every other step up for success.

Start with a thorough job analysis. Talk to current high performers in the role, their managers, and cross-functional stakeholders. Identify the specific tasks, decisions, and challenges the role involves. Then translate those observations into measurable competencies: communication, technical problem solving, project prioritization, stakeholder management. Vague traits like “culture fit” or “attitude” are not measurable and introduce bias. Concrete competencies with behavioral indicators are.

Once competencies are defined, follow these steps to finalize your preparation:

- Write a detailed job description aligned to the competencies you identified. Every requirement should trace back to an actual job demand.

- Build a scoring rubric for each competency. Define what a 1, 3, and 5 look like behaviorally, so every evaluator is calibrated to the same standard.

- Design assessment tools matched to the competencies. A technical role might need a work sample task. A client-facing role might need a structured role play. Choose tools with evidence behind them.

- Select your evaluation team and train them on the rubric before any assessments begin. Even the best rubric fails when evaluators apply it differently.

- Document everything. Create a paper trail of your criteria, tools, and scoring standards for compliance and review purposes.
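A rubric with behavioral anchors can be kept as a small data structure so that every evaluator scores against the same wording. The following is a minimal sketch, not a prescribed format: the `Competency` class, the `missing_anchors` helper, and the anchor text are all hypothetical and should be replaced with content from your own job analysis.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Competency:
    """One competency with behavioral anchors for selected scale points."""
    name: str
    anchors: dict[int, str]  # scale point -> observable behavior

    def validate_score(self, score: int) -> int:
        # Scores must stay on the 1-5 scale the rubric defines.
        if not 1 <= score <= 5:
            raise ValueError(f"{self.name}: score {score} is outside 1-5")
        return score


def missing_anchors(competency, required=(1, 3, 5)):
    """Return the scale points that still lack a behavioral description."""
    return [point for point in required if point not in competency.anchors]


# Hypothetical example content, for illustration only.
stakeholder_management = Competency(
    name="Stakeholder management",
    anchors={
        1: "Cannot describe how stakeholders were identified or informed.",
        3: "Kept stakeholders informed but gives little detail on handling conflict.",
        5: "Anticipated conflicting priorities and resolved them with evidence.",
    },
)
```

Running `missing_anchors` over every competency before evaluator training is one way to confirm that the 1, 3, and 5 points are all defined.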

When comparing your tooling options, consider this:

| Tool type | Speed | Bias risk | Cost | Best for |
|---|---|---|---|---|
| Manual structured interview | Moderate | Moderate (managed by rubric) | Low | Soft skills, judgment |
| Work sample / test task | Moderate | Low | Medium | Technical and role-specific skills |
| AI-assisted scoring | High | Low to medium (with audits) | Medium to high | High-volume screening |
| Unstructured interview | High | High | Low | No recommended use case |

Investing time in clear rubrics during preparation pays off throughout the entire hiring cycle. Calibrated evaluators make decisions faster, disagree less, and produce outcomes that hold up under scrutiny. Reviewing your screening process guide at this stage also helps you sequence each step efficiently.

Pro Tip: Do not try to assess every competency in every round. Assign specific competencies to specific stages. For example, screen for technical skills early, and reserve stakeholder management assessment for final rounds. This keeps each interaction focused and reduces evaluator fatigue.
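One way to check the stage assignment described in the tip above is to write the plan down and test it for gaps. This is a sketch under assumed names: `STAGE_PLAN`, `REQUIRED`, and `coverage_gaps` are hypothetical, and the stages and competencies are only examples.

```python
# Hypothetical plan: which competencies each round is responsible for.
STAGE_PLAN = {
    "test task": ["technical problem solving"],
    "first interview": ["communication", "project prioritization"],
    "final interview": ["stakeholder management"],
}

# Hypothetical list of competencies taken from the job analysis.
REQUIRED = {
    "communication",
    "technical problem solving",
    "project prioritization",
    "stakeholder management",
}


def coverage_gaps(stage_plan, required):
    """Return (competencies never assessed, competencies assessed in several stages)."""
    counts = {}
    for competencies in stage_plan.values():
        for competency in competencies:
            counts[competency] = counts.get(competency, 0) + 1
    unassessed = sorted(required - set(counts))
    duplicated = sorted(c for c, n in counts.items() if n > 1)
    return unassessed, duplicated


unassessed, duplicated = coverage_gaps(STAGE_PLAN, REQUIRED)
```

An empty `unassessed` list means every competency has a home. A non-empty `duplicated` list is not necessarily wrong, but it is worth confirming that the repeat is deliberate.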

AI tools, integrated cautiously and paired with bias audits, can deliver meaningful efficiency gains at this stage, especially in high-volume hiring. But the foundation must be human-defined competencies and rubrics. AI works best when it scores against criteria you have already validated.

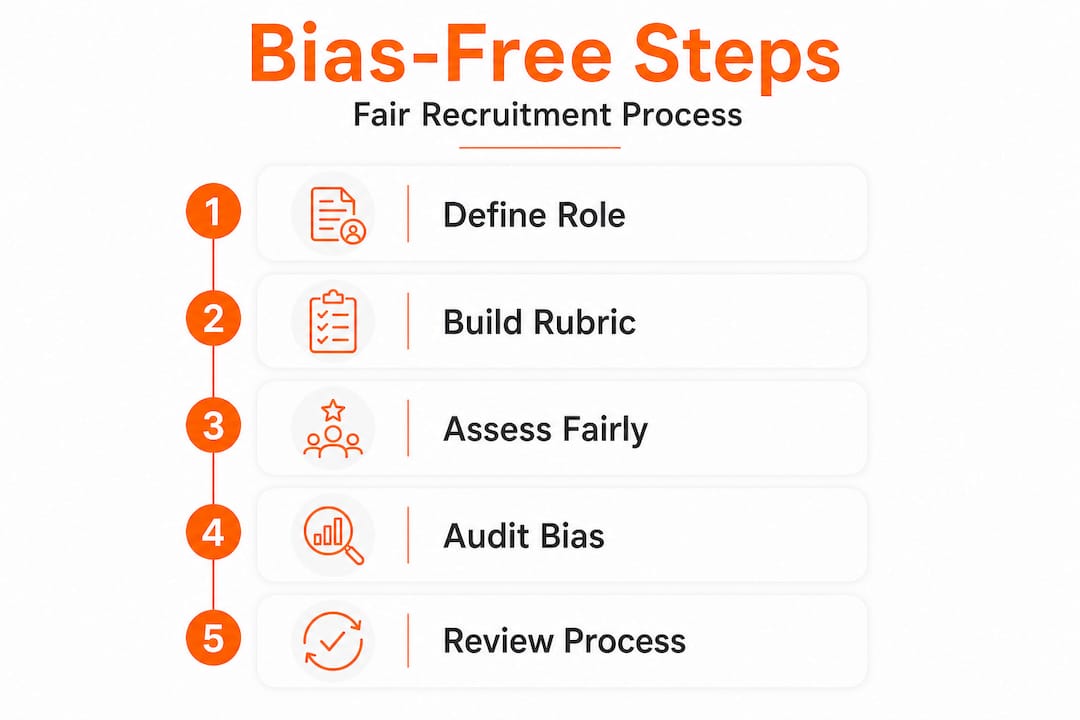

Step-by-step process: conducting fair and effective assessments

With your preparation complete, you are ready to run the actual assessment. The sequence below applies whether you are hiring for one role or fifty.

- Publish your job posting with clear competency-based language. Candidates who self-select based on accurate information are better fits from day one.

- Apply a structured resume screen. Use a defined scoring template to review applications against your stated requirements. Avoid filtering by factors unrelated to the role.

- Send an initial skills assessment or test task. Work samples are among the best predictors of on-the-job performance. Keep them scoped and relevant, no more than 60 to 90 minutes of candidate effort.

- Conduct structured first-round interviews. Use identical questions for all candidates. Score each response on your rubric immediately after the interview, not days later.

- Calibrate scores across evaluators before advancing candidates. If two interviewers score the same response differently, discuss the gap and reconcile it against the rubric definition.

- Run deeper assessments in later rounds targeting the competencies not yet evaluated. This might include case study exercises, panel discussions, or a second structured interview with a different evaluator.

- Compile and compare scorecards across all evaluation stages. Make advancement decisions based on documented scores, not recollections or impressions.

- Complete reference checks using structured questions tied to the same competencies you assessed directly.
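The calibration and scorecard steps in the sequence above come down to simple arithmetic on recorded scores. The sketch below assumes a flat list of score records. The record layout, the candidate and evaluator identifiers, and the one-point gap threshold are all illustrative assumptions, not a standard.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical records: (candidate, stage, competency, evaluator, score on a 1-5 rubric)
SCORES = [
    ("cand-01", "first interview", "communication", "eval-a", 4),
    ("cand-01", "first interview", "communication", "eval-b", 2),
    ("cand-01", "test task", "technical problem solving", "eval-a", 5),
    ("cand-02", "first interview", "communication", "eval-a", 3),
    ("cand-02", "first interview", "communication", "eval-b", 3),
]


def calibration_gaps(records, max_gap=1):
    """List (candidate, competency, gap) where evaluators differ by more than max_gap."""
    grouped = defaultdict(list)
    for candidate, _stage, competency, _evaluator, score in records:
        grouped[(candidate, competency)].append(score)
    return [
        (candidate, competency, max(scores) - min(scores))
        for (candidate, competency), scores in grouped.items()
        if max(scores) - min(scores) > max_gap
    ]


def scorecard(records):
    """Average score per candidate and competency across evaluators."""
    grouped = defaultdict(list)
    for candidate, _stage, competency, _evaluator, score in records:
        grouped[(candidate, competency)].append(score)
    card = defaultdict(dict)
    for (candidate, competency), scores in grouped.items():
        card[candidate][competency] = mean(scores)
    return dict(card)
```

With the sample data, `calibration_gaps(SCORES)` flags only the communication scores for `cand-01`, where the two evaluators are two points apart. That pair would be discussed against the rubric definition before the averaged scorecard is used for an advancement decision.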

Key statistic: Structured interviews improve prediction accuracy by 34% over unstructured interviews, making them one of the highest-ROI changes any HR team can implement.

A few critical checks keep this process fair throughout:

- Use identical question scripts across all candidates for each role.

- Record scores immediately after each evaluation, not after a panel discussion.

- Monitor pass rates by demographic group at each stage and flag unusual patterns.

- Use AI candidate screening tools that provide explainable scoring, meaning you can see why a candidate received a given score, not just what that score was.

The advantages of AI in recruitment are real, but only when the technology is applied with safeguards. AI speeds up screening but can inherit bias from its training data. The recommended approach: require bias audits, use explainable scoring, apply the EEOC four-fifths rule as a check, and keep human reviewers in the loop for final decisions.

Pro Tip: Build a shared evaluation workspace so every interviewer scores independently before they see anyone else’s ratings. Group discussion before scoring dramatically increases conformity bias, where evaluators defer to the most senior person in the room rather than trusting their own rubric-based analysis.
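The independent-scoring idea in the tip above can be enforced in software by withholding everyone's ratings until the last evaluator has submitted. The class below is a hypothetical sketch of that behavior, not a description of how any particular platform implements it.

```python
class BlindScoreSheet:
    """Collects one score per evaluator and reveals them only when all are in."""

    def __init__(self, evaluators):
        self._expected = set(evaluators)
        self._scores = {}

    def submit(self, evaluator, score):
        if evaluator not in self._expected:
            raise KeyError(f"unknown evaluator: {evaluator}")
        if evaluator in self._scores:
            # Scores are locked once submitted, so nobody can adjust toward the group.
            raise ValueError(f"{evaluator} has already submitted")
        self._scores[evaluator] = score

    def reveal(self):
        missing = self._expected - set(self._scores)
        if missing:
            raise PermissionError(f"waiting on: {sorted(missing)}")
        return dict(self._scores)


sheet = BlindScoreSheet(["eval-a", "eval-b"])
sheet.submit("eval-a", 4)
# Calling sheet.reveal() here would raise PermissionError, because eval-b has not scored yet.
sheet.submit("eval-b", 3)
revealed = sheet.reveal()
```

Locking a score on submission is a design choice in this sketch. A real workflow might allow edits up to the moment of reveal, as long as no evaluator can see another's rating first.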

Verification and continuous improvement: ensuring relevance and fairness

Running a structured assessment once is a good start. Running it consistently and improving it over time is what separates high-performing talent functions from average ones. The verification phase closes the loop between assessment design and real-world outcomes.

Start with outcome audits. After each hiring cycle, track:

| Metric | What it tells you |
|---|---|
| Offer acceptance rate | Whether assessments create a positive candidate experience |
| 90-day retention rate | Whether hired candidates are staying, an early signal that the assessment matched people to the role |
| Pass rate by demographic group | Whether assessments are screening out protected groups at higher rates |
| Evaluator score variance | Whether calibration training is working |
| Time to hire per stage | Where bottlenecks are forming |

The EEOC four-fifths rule is a practical starting point for bias auditing. Under this rule of thumb from the Uniform Guidelines on Employee Selection Procedures, a selection rate for any group that is less than 80% of the rate for the highest-selected group is generally regarded as evidence of adverse impact and warrants investigation. Apply this check at every stage of your funnel, not just the final hiring decision.
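As a worked illustration of the four-fifths check, the sketch below computes each group's selection rate and divides it by the highest rate. The function names, group labels, and counts are hypothetical, and the result is a screening signal to investigate rather than a legal conclusion.

```python
def impact_ratios(applicants, selected):
    """Each group's selection rate divided by the highest group's selection rate."""
    rates = {group: selected[group] / applicants[group] for group in applicants}
    top = max(rates.values())
    if top == 0:
        # With no selections at all, the ratios are undefined.
        raise ValueError("no candidates were selected in any group")
    return {group: rate / top for group, rate in rates.items()}


def flag_adverse_impact(applicants, selected, threshold=0.8):
    """Groups whose impact ratio falls below the four-fifths threshold."""
    ratios = impact_ratios(applicants, selected)
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


# Hypothetical counts for one assessment stage.
applicants = {"group_a": 100, "group_b": 80}
selected = {"group_a": 50, "group_b": 28}

flagged = flag_adverse_impact(applicants, selected)
```

Here group_a passes at 50% and group_b at 35%, so group_b's ratio is 0.35 / 0.50 = 0.70, which is below 0.80 and gets flagged. With small groups the ratio can swing widely on a handful of candidates, so treat flags on small samples as a prompt to gather more data.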

Use these steps to run an effective review cycle:

- Gather assessment outcome data at least 90 days post-hire to allow for performance signals to emerge.

- Apply the four-fifths rule to pass rates at each assessment stage, segmented by relevant demographic groups.

- Review evaluator variance. If one interviewer consistently scores far above or below others, investigate whether recalibration is needed.

- Solicit candidate feedback. Candidates who did not receive offers can provide candid input on whether the process felt fair and transparent.

- Update rubrics and question banks annually or after any major role change, business pivot, or regulatory update.
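The evaluator variance review in the steps above can start from a simple comparison of each interviewer's average score with the average across interviewers. This is a rough sketch with hypothetical names and an arbitrary 0.75-point tolerance. It also assumes evaluators saw broadly comparable candidates, which will not always hold.

```python
from statistics import mean


def evaluator_offsets(scores_by_evaluator):
    """Each evaluator's mean score minus the mean of all evaluators' means."""
    means = {e: mean(scores) for e, scores in scores_by_evaluator.items()}
    overall = mean(list(means.values()))
    return {e: m - overall for e, m in means.items()}


def needs_recalibration(scores_by_evaluator, tolerance=0.75):
    """Evaluators whose average sits more than `tolerance` points from the rest."""
    offsets = evaluator_offsets(scores_by_evaluator)
    return sorted(e for e, offset in offsets.items() if abs(offset) > tolerance)


# Hypothetical rubric scores (1-5) given by three interviewers.
scores = {
    "eval-a": [3, 4, 3, 4],
    "eval-b": [3, 3, 4, 4],
    "eval-c": [5, 5, 5, 5],
}

outliers = needs_recalibration(scores)
```

In this sample, `eval-c` averages 5.0 against an overall average of 4.0 and is the only evaluator flagged. A flag is a reason to look at the underlying responses, not proof that the interviewer is miscalibrated.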

“Organizations using AI in assessments must apply bias audits and the EEOC four-fifths rule consistently, since AI screening tools risk bias from their training data even when they appear neutral on the surface.”

Documentation matters here. Keep records of your scoring rubrics, calibration sessions, audit results, and any changes you make to the process. If your hiring decisions are ever challenged legally, documented evidence of a structured, audited process is your strongest defense. Review resources on improving candidate screening, and on screening efficiency and quality, to benchmark your approach against current practices. Continuous improvement is not optional when regulators are increasingly scrutinizing algorithmic hiring tools.

A nuanced perspective: why structure alone isn’t enough in modern recruitment

Here is something most process guides won’t tell you: structure can become a crutch. Many HR teams implement structured interviews, generate rubrics, and then treat the process as if it runs on autopilot. It does not.

Structure is foundational, but it is not a substitute for informed human judgment. A rubric tells you how to score a response. It does not tell you whether the question you wrote actually predicts performance. It does not tell you whether the competencies you defined six months ago still match what the role demands today. Businesses change. Role requirements shift. A rubric that was well-calibrated in January can become misleading by October if nobody has reviewed it.

The same principle applies to AI tools. The efficiency gains from AI-assisted assessment are real and significant. But the speed of AI screening and its risks are two sides of the same coin. An AI tool trained on historical hiring data will reflect the biases of those historical decisions unless you audit it aggressively. “The model said so” is not an acceptable explanation for a hiring decision, legally or ethically.

True hiring success also depends on knowing when to override the structure. Sometimes a candidate scores a 3 on a technical rubric but demonstrates exceptional learning agility and problem-solving instinct during discussion. A rigid, score-only process would miss that person. The structure should inform your decision, not replace your judgment.

Our perspective at testask is that the best-performing talent teams treat their assessment process like a product. They launch it, measure it, gather feedback, and iterate. They use AI talent matching trends to stay current, but they never stop asking whether their tools and frameworks are still fit for purpose. That combination of rigor and adaptability is where excellent hiring really happens.

Transform your hiring with AI-powered recruitment assessments

You now have a clear framework for building and running fair, structured recruitment assessments. The challenge most HR teams face is executing this at scale, across multiple roles, teams, and locations, without sacrificing consistency or quality.

That is exactly what testask is built for. The platform lets you generate tailored test tasks for any role, evaluate candidate submissions with AI-assisted scoring, and collaborate with your hiring team on reviews in one shared workspace. Every step in the assessment workflow is supported: structured task design, rubric-based scoring, audit trails for compliance, and analytics to track performance over time. If you are ready to put smarter hiring with AI into practice, explore testask today and see how it fits your current hiring process.

Frequently asked questions

What are the main steps in an effective recruitment assessment process?

The key steps are defining role requirements, building structured assessments with validated rubrics, using appropriate evaluation tools, mitigating bias at every stage, and auditing results for fairness. Structured interviews with predefined rubrics are a core component because they ensure consistency and reduce evaluator subjectivity across all candidates.

How does AI fit into recruitment assessments?

AI can significantly speed up candidate screening and scoring, especially at high volume, but it requires regular bias audits, explainable scoring outputs, and human oversight for final decisions. AI screening risks bias from training data, so transparency and auditability are non-negotiable requirements for any AI tool used in hiring.

What is the EEOC four-fifths rule in hiring assessments?

The EEOC four-fifths rule is a legal guideline used to identify adverse impact: if one group’s selection rate is below 80% of the highest-selected group’s rate, the assessment may be discriminatory. Applying it alongside AI audit protocols helps organizations catch and correct unfair screening patterns before they create legal exposure.

How often should recruitment assessment processes be reviewed?

Processes should be reviewed at least once per year and after any major hiring campaign, significant role change, or update to business strategy to ensure the criteria still reflect actual job demands and remain legally compliant.

What are the risks of unstructured recruitment assessments?

Unstructured assessments are significantly less predictive of job performance, increase the risk of bias and inconsistent decisions, and are harder to defend if hiring outcomes are legally challenged. Structured methods improve prediction by 34% over unstructured interviews, making the investment in structure one of the clearest wins available to any HR team.
