AI recruitment: faster, smarter hiring for HR leaders

Unilever cut its time-to-hire by 90% using AI, saving over £1 million in costs and boosting workforce diversity by 16%. That result is not a fluke or a tech experiment. It signals a fundamental shift in how large organizations compete for talent. AI recruitment combines machine learning, predictive analytics, and automated evaluation tools to transform every stage of the hiring process. This guide breaks down what AI recruitment actually is, how enterprise teams are using it today, where the risks lie, and what governance steps your HR team must put in place before scaling these tools across your organization.

Table of Contents

- Understanding AI recruitment: What it is and how it works

- AI recruitment in action: Enterprise case studies and outcomes

- The pros and cons of AI recruitment

- Governance, regulations, and best practices for AI recruitment

- What most HR leaders miss about AI recruitment

- Explore smarter hiring with AI recruitment assessment tools

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Faster hiring outcomes | AI recruitment can cut time-to-hire by up to 90%, freeing HR teams to focus on strategic talent management. |
| Improved diversity and satisfaction | AI-driven tools help boost workforce diversity and candidate satisfaction rates by removing early-stage bias. |
| Balanced approach essential | Effective AI-driven hiring requires human oversight, strong governance, and compliance with new laws. |
| Clear pros and cons | AI recruitment offers speed and cost savings but brings risks like bias amplification and regulatory challenges. |
| Practical best practices | Implement audits, transparency measures, and explainable AI models to maximize benefits and minimize risks. |

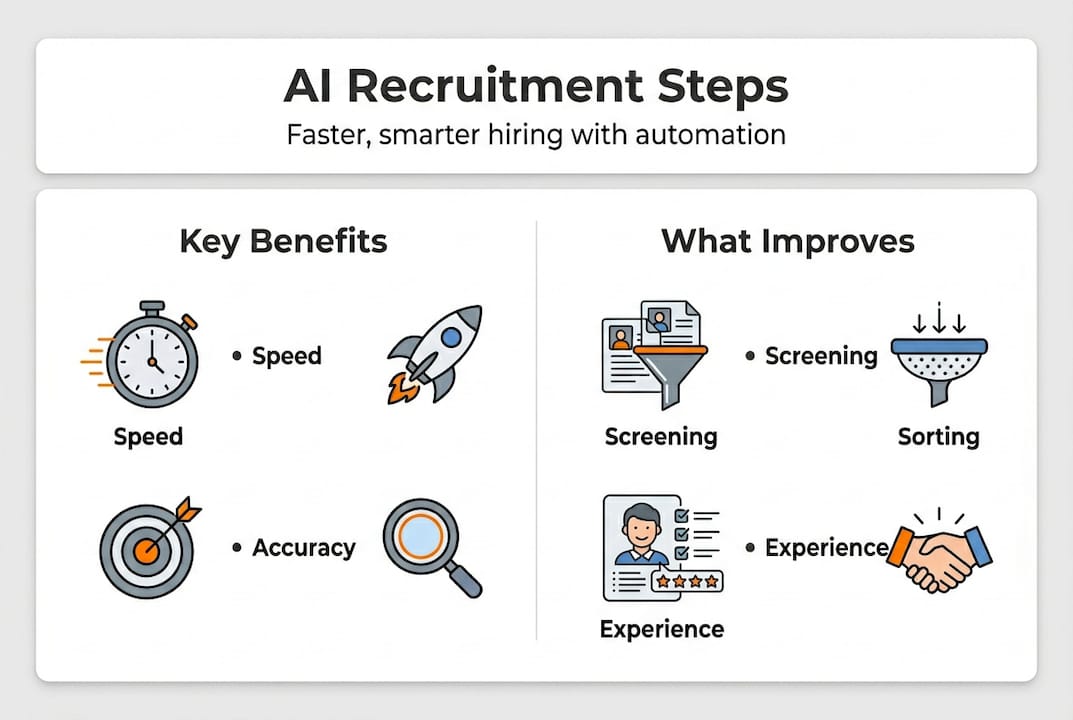

Understanding AI recruitment: What it is and how it works

AI recruitment uses machine learning algorithms and predictive analytics to automate, accelerate, and improve the quality of hiring decisions. It goes well beyond basic applicant tracking. Modern AI recruitment systems analyze structured and unstructured data across thousands of candidate profiles, identify skill matches, flag engagement signals, and even assess communication quality from written responses or video interviews.

At its core, AI recruitment covers three primary functions:

- Screening and filtering: AI scans resumes, cover letters, and application forms to surface the most relevant candidates based on defined criteria, reducing the manual workload on HR teams dramatically.

- Talent matching: Algorithms compare candidate attributes against role requirements, team profiles, and past hire performance data to generate ranked shortlists with measurable precision.

- Communication and scheduling: Automated chatbots and scheduling tools manage candidate outreach, answer frequently asked questions, and coordinate interview logistics without human intervention at each step.

The business case for AI in HR processes rests on measurable outcomes. Reported results show AI recruitment tools cutting time-to-hire by 50 to 90%, delivering significant cost savings, and improving the objectivity of early candidate screening compared to purely manual review. For HR leaders managing high-volume pipelines, these numbers represent a genuine operational transformation.

The technology also expands what HR can assess at scale. Improving candidate screening no longer means simply reading more resumes faster. It means using structured assessment data and AI-assisted scoring to evaluate skill demonstration, not just credential presentation. That shift changes the quality of your shortlist fundamentally.

Pro Tip: When evaluating AI recruitment tools, ask vendors specifically how their matching algorithm defines “qualified.” Tools that match purely on keyword overlap will produce different, often weaker, results than those using skills-based or predictive performance models. The difference shows up in your 90-day retention rates.
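To make the difference between keyword matching and skills-based matching concrete, here is a minimal Python sketch. It is illustrative only: the function names, the term sets, the skill weights, and the proficiency values are all hypothetical, and the code does not describe how any particular vendor's algorithm works.

```python
def keyword_overlap(resume_terms: set[str], job_terms: set[str]) -> float:
    """Jaccard similarity between resume terms and job-posting terms."""
    union = resume_terms | job_terms
    return len(resume_terms & job_terms) / len(union) if union else 0.0


def skills_match(assessed: dict[str, float], required: dict[str, float]) -> float:
    """Weighted share of required skills covered by assessed proficiency.

    `assessed` maps skill -> proficiency in [0, 1] from a structured assessment.
    `required` maps skill -> importance weight for the role (hypothetical values).
    """
    total_weight = sum(required.values())
    if total_weight == 0:
        return 0.0
    covered = sum(
        weight * min(max(assessed.get(skill, 0.0), 0.0), 1.0)
        for skill, weight in required.items()
    )
    return covered / total_weight


# Hypothetical data: candidate A mirrors the posting's wording,
# candidate B scores higher on the structured assessment.
job_terms = {"python", "sql", "airflow", "etl"}
required = {"data_modeling": 0.5, "pipeline_design": 0.3, "sql": 0.2}

candidate_a = {
    "terms": {"python", "sql", "airflow", "etl", "excel"},
    "assessed": {"data_modeling": 0.4, "pipeline_design": 0.5, "sql": 0.9},
}
candidate_b = {
    "terms": {"python", "postgres", "dbt", "pipelines"},
    "assessed": {"data_modeling": 0.9, "pipeline_design": 0.8, "sql": 0.8},
}

for name, cand in (("A", candidate_a), ("B", candidate_b)):
    kw = keyword_overlap(cand["terms"], job_terms)
    sk = skills_match(cand["assessed"], required)
    print(f"Candidate {name}: keyword={kw:.2f} skills={sk:.2f}")
```

With these made-up inputs, candidate A ranks higher on keyword overlap while candidate B ranks higher on the skills-based score. That reversal is the kind of behavior worth asking a vendor to demonstrate on your own roles.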

AI talent matching has also evolved to support internal mobility, helping HR leaders identify existing employees who are strong candidates for newly opened roles. This reduces external hiring costs and improves retention by giving current staff clear growth pathways.

AI recruitment in action: Enterprise case studies and outcomes

Understanding the theory is one thing. Seeing AI recruitment’s results in real enterprise environments is more persuasive. The evidence across Fortune 500 companies and large global organizations is consistent: AI-powered hiring delivers dramatic, measurable improvements across speed, cost, and workforce quality.

Unilever’s transformation is the most cited example for good reason. The company deployed AI-powered video interviews and game-based assessments across its early-stage screening process. The results were significant. Unilever’s AI process saved 50,000 hours annually, boosted candidate satisfaction to 92%, and improved workforce diversity by 16%. These were not marginal improvements. They represented a complete redesign of how talent enters the organization.

Here is a summary of enterprise AI recruitment outcomes drawn from documented case studies:

| Company / Sector | AI Application | Key Outcome |
|---|---|---|
| Unilever (FMCG) | Video interviews, game-based assessments | 90% faster time-to-hire, 16% diversity gain |
| Global Financial Services | Resume screening automation | 75% reduction in screening time |
| Large Tech Firm | Predictive matching + skills assessments | 40% improvement in 1-year retention |
| Healthcare System | Automated scheduling + chatbot screening | 60% drop in recruiter workload |

The pattern across sectors is clear. Organizations that combine AI screening with structured skills assessments see the strongest outcomes, not just in speed, but in the quality and diversity of hires.

Looking specifically at candidate experience, AI-powered processes tend to deliver faster response times, more consistent communication, and reduced bias in early filtering. Candidates receive feedback sooner, spend less time in ambiguous waiting periods, and engage with more relevant assessments. That matters because top candidates evaluate your hiring process as a signal of how your organization operates day-to-day.

Following assessment best practices in AI-powered hiring means ensuring that the automated evaluation components you deploy are validated, role-relevant, and tested for adverse impact before scaling. Deploying an assessment that hasn’t been validated for your specific roles introduces legal and performance risk.

AI’s impact on HR extends beyond individual company results. At the industry level, AI recruitment is shifting competitive dynamics. Organizations that move faster, assess more accurately, and engage candidates more effectively win access to talent pools that slower competitors simply cannot reach. In high-demand skill areas like data engineering, cybersecurity, and product management, that speed advantage translates directly into business performance.

Streamlining candidate evaluation at scale is where AI delivers its clearest return. When your team can assess 500 candidates with the same consistency and rigor you’d apply to 50, you stop making compromises. That’s a fundamentally different operating model.

The pros and cons of AI recruitment

These outcomes highlight AI’s powerful impact, but understanding its benefits and risks is essential for effective adoption. AI recruitment is not a universally positive force. It creates genuine advantages and introduces real risks that HR leaders must manage deliberately.

The strengths and weaknesses of AI in hiring are well documented:

| Dimension | Pros | Cons |
|---|---|---|
| Speed | Cuts time-to-hire by 50-90% | Can rush decisions if oversight is weak |
| Cost | Reduces recruiter hours and agency spend | Implementation and auditing costs are significant |
| Bias | Removes subjective human bias in screening | Can amplify historical bias embedded in training data |
| Candidate experience | Faster responses, consistent communication | Some candidates feel dehumanized by automated screening |
| Regulatory | Enables consistent, auditable processes | Requires ongoing compliance with evolving regulations |
| Prediction | Better performance predictions at scale | Predictive models can fail on causal fairness grounds |

The bias issue deserves specific attention. AI systems trained on historical hire data will replicate the patterns in that data, including discriminatory ones. If your organization historically hired fewer women into engineering roles, an AI trained on past success profiles may downrank female candidates without any human ever making that decision consciously. That’s not a theoretical risk. It has happened at multiple large organizations and resulted in significant reputational and legal damage. Amazon, for example, reportedly abandoned an experimental resume-screening tool after finding that it penalized resumes containing the word “women’s.”

The modern HR role in tech hiring environments requires HR leaders to think like risk managers, not just process optimizers. Here are four numbered steps for responsible AI recruitment adoption:

1. Audit your training data before deployment. Understand what historical decisions the algorithm is learning from and whether those decisions reflect the workforce you want to build.

2. Run parallel testing to compare AI shortlists against human shortlists on demographic diversity before going live at scale.

3. Establish a review layer where human recruiters evaluate a sample of AI-rejected candidates regularly to catch systematic filtering errors.

4. Document your process clearly so that any candidate who wants to understand how they were evaluated can receive a meaningful explanation.
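The parallel-testing step above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a compliance tool: the group labels and applicant counts are invented, the impact ratio (each group's selection rate divided by the highest group's rate) is one common way to compare shortlists, and the 0.8 threshold is the "four-fifths" rule of thumb from US EEOC guidance rather than a legal test drawn from this article.

```python
from collections import Counter


def selection_rates(outcomes):
    """Map each group to its selection rate.

    `outcomes` is an iterable of (group, was_shortlisted) pairs.
    """
    totals, selected = Counter(), Counter()
    for group, was_shortlisted in outcomes:
        totals[group] += 1
        if was_shortlisted:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def impact_ratios(rates):
    """Divide each group's selection rate by the highest group's rate."""
    best = max(rates.values(), default=0.0)
    if best == 0:
        return {group: 0.0 for group in rates}
    return {group: rate / best for group, rate in rates.items()}


def flagged_groups(ratios, threshold=0.8):
    """Groups whose impact ratio falls below the chosen threshold."""
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


def make_outcomes(counts):
    """Expand {group: (applicants, shortlisted)} into (group, bool) pairs."""
    return [
        (group, i < shortlisted)
        for group, (applicants, shortlisted) in counts.items()
        for i in range(applicants)
    ]


# Hypothetical parallel test on the same 200 applicants.
ai_outcomes = make_outcomes({"group_a": (100, 40), "group_b": (100, 25)})
human_outcomes = make_outcomes({"group_a": (100, 35), "group_b": (100, 30)})

for label, outcomes in (("AI", ai_outcomes), ("Human", human_outcomes)):
    ratios = impact_ratios(selection_rates(outcomes))
    print(label, {g: round(r, 3) for g, r in ratios.items()}, flagged_groups(ratios))
```

In this invented example the AI shortlist flags `group_b` while the human shortlist flags no group, which is the kind of gap that should trigger a closer review before going live. Real audits need adequate sample sizes and legal input on which categories to compare.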

The candidate screening process must be designed with both speed and fairness in mind. These goals are not mutually exclusive, but achieving both requires intentional design rather than default automation settings.

“AI in recruitment is not a fairness solution. It is a speed solution that can become a fairness tool only when governed correctly. The technology amplifies whatever values are built into its design. Build in fairness intentionally, or it simply won’t be there.”

Understanding what a job assessment should measure before selecting an AI tool is foundational. Tools that assess candidates against vague or poorly defined criteria will produce fast results that point in the wrong direction.

Governance, regulations, and best practices for AI recruitment

Having weighed the pros and cons, responsible implementation hinges on strong governance and compliance. The regulatory environment for AI recruitment is evolving rapidly, and HR leaders who ignore it are creating significant organizational exposure.

Two frameworks are currently setting the standard:

- NYC Local Law 144 requires employers using automated employment decision tools in New York City to have those tools audited for bias annually by an independent auditor and to notify candidates that such tools are being used. Non-compliance carries civil penalties that increase for repeat violations.

- The EU AI Act classifies recruitment AI as high-risk, requiring transparency, human oversight, documented risk assessments, and ongoing monitoring. Organizations operating in EU markets or hiring EU-based employees must plan for compliance now, not after deployment.

Governance in AI recruitment is essential and requires bias testing, explainability in algorithms, human oversight mechanisms, and audit trails that satisfy regulatory requirements. These are not optional add-ons. They are baseline operational requirements for any organization scaling AI-powered hiring.

Best practices for responsible AI recruitment governance include:

- Bias audits on a defined schedule. Annual audits are the regulatory minimum under NYC Local Law 144. Best-practice organizations conduct them quarterly.

- Explainability standards. Every AI-generated score or ranking should be traceable to specific input data. Black-box scoring models create both fairness and legal risk.

- Human override protocols. Define clearly when and how human recruiters can override AI recommendations, and track those decisions over time to identify patterns.

- Candidate disclosure. Proactively inform candidates when AI tools are used in their evaluation and provide a contact point for questions or appeals.

- Vendor accountability. Contract language with AI recruitment vendors should require them to disclose training data sources, update bias audit results, and notify you of model updates that affect outputs.
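As one way to picture the explainability standard above, the following Python sketch scores a candidate with a transparent weighted sum and records how much each input contributed. It is an assumption-laden illustration: the component names, the weights, and the `explain_score` helper are hypothetical, and production scoring models are usually more complex than a weighted sum.

```python
from dataclasses import dataclass


@dataclass
class ScoreExplanation:
    total: float
    contributions: dict[str, float]  # component name -> weighted contribution


# Hypothetical component weights; a real rubric would be set and validated per role.
WEIGHTS = {"work_sample": 0.5, "structured_interview": 0.3, "skills_test": 0.2}


def explain_score(
    inputs: dict[str, float], weights: dict[str, float] = WEIGHTS
) -> ScoreExplanation:
    """Score a candidate and record how much each input contributed."""
    missing = sorted(set(weights) - set(inputs))
    if missing:
        raise ValueError(f"missing inputs: {missing}")
    contributions = {name: weights[name] * inputs[name] for name in weights}
    return ScoreExplanation(
        total=sum(contributions.values()), contributions=contributions
    )


# Hypothetical candidate with component scores in [0, 1].
result = explain_score(
    {"work_sample": 0.8, "structured_interview": 0.6, "skills_test": 0.9}
)
print(f"total={result.total:.2f}")
for name, value in sorted(result.contributions.items(), key=lambda kv: -kv[1]):
    print(f"  {name}: {value:.2f}")
```

Storing the per-component breakdown alongside the total gives recruiters something concrete to review when overriding a recommendation, and gives candidates a basis for a meaningful explanation.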

AI’s disruption of HR is not slowing down. Organizations that build governance infrastructure now will scale faster and more safely than those that react to regulation after deployment.

Pro Tip: Build your AI recruitment governance documentation into your standard HR policy framework. This makes it easier to update as regulations evolve and demonstrates to regulators and candidates alike that governance is embedded in your process, not bolted on as an afterthought. Regulators consistently treat documented, proactive compliance more favorably than reactive responses.

Your screening process guide should be updated before any AI tool goes live to reflect new decision points, human oversight checkpoints, and candidate communication requirements.

What most HR leaders miss about AI recruitment

With practical guidance in place, it’s vital to understand the deeper pitfalls and often-overlooked truths of AI-powered hiring. Most HR teams approach AI recruitment as a fairness upgrade layered on top of a speed upgrade. They assume that removing human bias from screening automatically produces more equitable outcomes. That assumption is wrong, and it’s causing real harm at organizations that haven’t examined it carefully.

Predictive AI can fail on causal fairness grounds because correlational models identify patterns in historical data without understanding what caused those patterns. An AI might learn that candidates who graduated from specific universities performed well in your organization. But that correlation may reflect your organization’s cultural preferences, not those candidates’ actual ability. The algorithm encodes your bias more efficiently than any human recruiter could.

“The uncomfortable truth is that AI recruitment tools are not more objective than humans. They are more consistent. Consistent bias at scale is not an improvement over inconsistent bias. It’s a bigger problem.”

The distinction matters enormously for HR leaders reviewing talent acquisition tips for 2026 and beyond. The organizations that are seeing the strongest long-term results from AI recruitment are not the ones that automated fastest. They are the ones that used AI to handle high-volume, structured tasks while investing more human attention in judgment-heavy decisions. That combination produces better shortlists and better hires.

Human oversight is not a compliance checkbox. It is the mechanism through which your organization’s values and judgment get applied to decisions that affect people’s careers and livelihoods. AI tools can accelerate that process and remove certain categories of error. They cannot replace the institutional knowledge, contextual judgment, and ethical accountability that experienced HR professionals bring to hiring.

Real progress in AI recruitment comes from integration, not replacement. The most effective HR teams treat AI as a powerful tool that extends their capacity without substituting for their expertise. That framing changes how you evaluate tools, how you train your team to work alongside them, and how you communicate with candidates about the process.

Explore smarter hiring with AI recruitment assessment tools

The guidance in this article moves from understanding AI recruitment to applying it responsibly across your organization. Putting that into practice requires tools that match your operational needs and governance requirements.

Testask is an AI-powered recruitment assessment platform built for HR leaders who need to assess candidates at scale without sacrificing evaluation quality. You can generate tailored test tasks aligned to specific roles, review AI-assisted scoring of candidate submissions, and collaborate with hiring managers within a single workflow. The platform supports faster screening decisions while maintaining the structured, auditable evaluation process that governance frameworks require. Explore the AI recruitment blog for practical guides, assessment templates, and best practices that you can apply directly to your hiring process. Your next high-quality hire starts with a better assessment process.

Frequently asked questions

What is AI recruitment in simple terms?

AI recruitment uses artificial intelligence to automate and enhance hiring tasks such as screening candidates and matching skill sets, reducing manual workload for HR teams. These tools can reduce time-to-hire by 50 to 90% while improving screening consistency across high-volume pipelines.

How can AI help HR teams hire faster?

By automating resume screening and candidate assessments, AI eliminates the manual bottlenecks that slow down early-stage filtering. Unilever’s AI-powered process saved 50,000 hours annually and cut time-to-hire by 90%, demonstrating the scale of acceleration that’s achievable.

What are the risks of using AI in recruitment?

AI can amplify historical bias, erode candidate trust if the process feels impersonal, and expose organizations to regulatory penalties without proper governance. Audits and transparency are essential safeguards against these risks.

How do regulations like NYC Local Law 144 and the EU AI Act affect AI recruitment?

These regulations require regular audits, bias testing, and transparency in AI-powered hiring systems to ensure legal compliance. Organizations using automated employment decision tools must notify candidates and document their governance processes clearly.