What is job assessment? A practical guide for better hiring

Most hiring teams still treat the resume as their primary filter, yet pre-hire assessments tell a very different story: 78% of employers who use them report improved hire quality, and 23% see measurable gains in workforce diversity. That gap between traditional practice and evidence-backed results is costing organizations real money in bad hires, high turnover, and missed talent. This guide breaks down exactly what job assessment is, how it works, which models fit which situations, and what compliance demands you need to understand before deploying any evaluation tool at scale.

Table of Contents

- Defining job assessment: Core concepts and history

- Major types of job assessments explained

- Models for using assessments: Compensatory vs. multiple-hurdle

- Ensuring fairness and legal compliance in job assessment

- A fresh perspective: Why traditional hiring misses the mark

- How smarter tools can upgrade your assessments

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Skills over resumes | Skills-based assessments deliver better hiring results than relying solely on education or experience. |
| Choose the right model | Compensatory and multiple-hurdle approaches suit different hiring needs and should be selected based on the role. |
| Stay compliant | Fairness, validation, and legal compliance are essential for effective and equitable job assessments. |
| Leverage smart tools | Advanced platforms simplify complex assessment strategies, improving quality and reducing bias. |

Defining job assessment: Core concepts and history

Let’s start with what job assessment actually means for HR professionals and hiring managers.

At its core, job assessment is a structured process of gathering objective, job-relevant data about candidates to predict their likely success in a specific role. It goes well beyond scanning a resume for years of experience or a degree from a recognizable school. Instead, it asks: can this person actually do the work?

Historically, most organizations leaned on two signals: education credentials and prior job titles. Those signals felt safe and familiar, but research has consistently shown they are weak predictors of on-the-job performance. The shift toward skills-based hiring gained real momentum in the early 2000s, as industrial-organizational psychology research accumulated enough evidence to show that structured, standardized tools outperform gut instinct by significant margins.

Today, job assessment spans a wide range of methodologies, each measuring a different dimension of a candidate’s potential:

- Skills-based assessments: Job-specific tasks that test whether a candidate can perform the actual work, such as a coding challenge for a developer or a writing sample for a content strategist.

- Cognitive ability tests: Measures of general mental ability, reasoning speed, and problem-solving capacity.

- Personality assessments: Evaluations of behavioral traits, working style, and cultural fit using validated frameworks like the Big Five model.

- Situational judgment tests (SJTs): Scenarios presenting realistic workplace dilemmas, measuring decision-making and judgment under conditions that mirror the job.

- Structured interviews: Standardized question sets scored against predefined rubrics to minimize interviewer bias.

- Job simulations: Realistic job previews or work sample exercises that replicate core tasks a candidate would encounter on day one.

“Primary methodologies include skills-based (job-specific tasks), cognitive ability (GMA, reasoning), personality (traits, culture fit), situational judgment (decision-making), structured interviews, and job simulations.” — Candidate Assessment Frameworks

Each method comes with its own validity data, administration requirements, and appropriate use cases. No single tool covers everything, which is why most high-performing talent teams build a blended approach. The goal is always the same: generate reliable, defensible signals that predict job success without introducing unnecessary bias into the process.

Job assessment also directly addresses the diversity challenge. Resumes carry significant socioeconomic bias because education prestige and prior employer brand are heavily influenced by factors outside a candidate’s control. Standardized, validated assessments level that playing field when designed and deployed correctly.

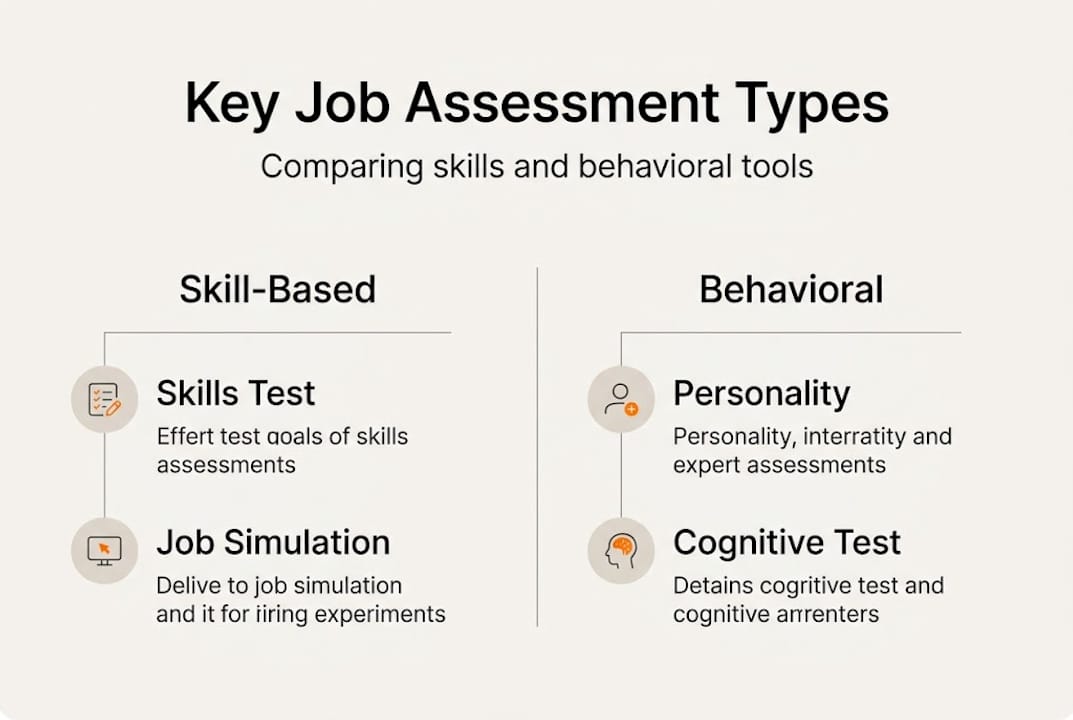

Major types of job assessments explained

Having defined what job assessment is, let’s break down the main types used by today’s employers and identify exactly where each one earns its place in your hiring process.

1. Skills-based assessments: These are the most direct measure of job readiness. A candidate applying for a data analyst role might complete a case study using a real dataset. A customer success candidate might handle a mock escalation call. Skills-based tools work because they measure the actual behavior the job requires. By contrast, education and experience are poor predictors of performance, with validity correlations of only r=0.10 to r=0.18, which is why skills-based hiring is gaining ground as a more reliable alternative.

2. Cognitive ability tests: General mental ability (GMA) tests remain among the strongest single predictors of job performance across roles and industries. They measure reasoning, pattern recognition, verbal comprehension, and numerical logic. The tradeoff is that these tests also carry some of the highest adverse impact risks, particularly for certain racial and ethnic groups, so they should always be paired with a job analysis to confirm relevance.

3. Personality assessments: Think of these as behavioral predictions rather than psychological profiles. Well-validated instruments measure traits like conscientiousness, openness, emotional stability, and agreeableness. Conscientiousness, in particular, is a robust predictor of performance across almost every job category. Personality data becomes most valuable when interpreted alongside skills data rather than used in isolation.

4. Situational judgment tests: SJTs present candidates with realistic scenarios and ask how they would respond. They’re particularly effective for leadership roles, customer-facing positions, and jobs requiring complex ethical judgment. Because scenarios can be built directly from critical incident analyses of the actual role, they carry strong face validity and are generally well-received by candidates.

5. Structured vs. unstructured interviews: This distinction matters enormously. Structured interviews outperform unstructured ones with validity correlations of r=0.51 versus r=0.38. Structured formats use consistent questions scored against anchored rating scales, which curbs the “I just liked them” effect that drives so many poor hiring decisions.

6. Job simulations: Work sample tests and simulations are high-fidelity, meaning they closely mirror the actual job. They tend to have both high validity and strong candidate acceptance because applicants can see the direct relevance. The tradeoff is development time and cost, making them most practical for high-volume or high-stakes roles.

Pro Tip: Combining AI-powered assessment methods with structured interviews creates a layered set of signals that can reduce both bias and variance in your final hiring decisions.

| Assessment type | Best use case | Key pitfall |
|---|---|---|
| Skills-based tasks | Technical and creative roles | Time-intensive to build |
| Cognitive ability | High-complexity, fast-learning roles | Adverse impact risk |
| Personality | Culture fit and leadership pipeline | Easy to fake if not adaptive |
| Situational judgment | Customer-facing and management | Requires role-specific scenarios |
| Structured interviews | All roles, especially senior | Requires interviewer training |
| Job simulations | High-volume or mission-critical roles | High development cost |

Models for using assessments: Compensatory vs. multiple-hurdle

Knowing which assessment types exist is only half the job. The other half is deciding how you make decisions with the data those tools generate. Two primary models dominate professional practice.

The compensatory model allows a strong score in one area to offset a weaker score in another. Picture a composite scorecard where a candidate’s exceptional cognitive ability compensates for moderate scores on a personality dimension. This model is flexible and considers the whole candidate, making it well-suited for roles where different combinations of strengths can lead to success. It works particularly well for knowledge-worker roles with complex, varied responsibilities.

The multiple-hurdle model requires candidates to meet a minimum threshold at each sequential stage before advancing. Fail the skills screen, and you don’t move to the structured interview regardless of your other scores. Multiple-hurdle models are efficient for high-volume pipelines and essential for safety-sensitive roles where a weakness in any critical area is genuinely disqualifying.
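To make the gating logic concrete, here is a minimal Python sketch of a multiple-hurdle screen. The stage names, score scales, and cutoffs are hypothetical placeholders chosen for illustration. Real thresholds would need to come from your own job analysis and validation work.

```python
from typing import Optional

# Hypothetical stages and cutoffs, in the order candidates encounter them.
# Real cutoffs should come from a job analysis, not from this sketch.
HURDLES = [
    ("skills_screen", 70.0),
    ("cognitive_test", 60.0),
    ("structured_interview", 3.5),
]


def first_failed_hurdle(scores: dict[str, float]) -> Optional[str]:
    """Return the name of the first stage the candidate fails, or None if all pass."""
    for stage, cutoff in HURDLES:
        # A missing score counts as not yet passed, so the candidate stops here.
        if scores.get(stage, float("-inf")) < cutoff:
            return stage
    return None


candidate_a = {"skills_screen": 82, "cognitive_test": 75, "structured_interview": 4.2}
candidate_b = {"skills_screen": 65, "cognitive_test": 95, "structured_interview": 4.8}

print(first_failed_hurdle(candidate_a))  # None: cleared every stage
print(first_failed_hurdle(candidate_b))  # skills_screen: later scores cannot rescue it
```

Candidate B shows the defining property of this model: strong scores later in the sequence never offset a miss at an earlier gate.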

Composite models sit between these two approaches, combining scores from multiple assessment tools into a single weighted index. The weighting should be determined by a formal job analysis, which identifies which competencies are most critical to the role’s success. SIOP research consistently emphasizes that composite models built on rigorous job analysis produce the strongest predictive validity.
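As a rough illustration of a weighted index, the Python sketch below combines three scores into one number. The weights and the 0-100 scale are assumptions made up for this example. In practice the weights would come from a formal job analysis, and raw scores would need to be put on a common scale first.

```python
# Hypothetical weights; in practice they would come from a formal job analysis.
WEIGHTS = {"skills": 0.5, "cognitive": 0.3, "personality": 0.2}


def composite_score(scores: dict[str, float], weights: dict[str, float] = WEIGHTS) -> float:
    """Weighted average of scores that are already on a common 0-100 scale."""
    total_weight = sum(weights.values())
    return sum(scores[name] * w for name, w in weights.items()) / total_weight


strong_cognitive = {"skills": 70, "cognitive": 95, "personality": 55}
balanced = {"skills": 75, "cognitive": 75, "personality": 75}

print(round(composite_score(strong_cognitive), 1))  # 74.5
print(round(composite_score(balanced), 1))          # 75.0
```

The two candidates land within half a point of each other even though their profiles differ sharply. That is the compensatory effect in action, and it is also why a weighted index can mask a critical weakness if no minimum thresholds sit alongside it.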

Pro Tip: Before choosing a model, map your role’s non-negotiables. If there are true deal-breakers (specific certifications, minimum safety qualifications), start with a multiple-hurdle gate for those, then use a compensatory composite for everything else. This structured approach gives you both efficiency and nuance.

| Model | Pros | Cons | Best for |
|---|---|---|---|
| Compensatory | Considers whole candidate, flexible | Can mask critical weaknesses | Knowledge work, creative roles |
| Multiple-hurdle | Efficient, filters fast | May screen out high-potential candidates | High-volume, safety-sensitive roles |
| Composite | Highest validity when well-weighted | Requires formal job analysis | Complex professional roles |

One practical reality: most mid-sized to large organizations benefit from a hybrid. Use multiple-hurdle gates early in the funnel to manage volume, then apply a compensatory composite for finalists where nuance matters most. That sequencing keeps your time-to-hire manageable without sacrificing decision quality at the critical final stage.

Ensuring fairness and legal compliance in job assessment

Adopting sophisticated assessments means tackling fairness and compliance directly. This is not optional territory. A poorly validated assessment can expose your organization to significant legal and reputational risk.

The two primary legal frameworks in the U.S. that govern pre-employment testing are Title VII of the Civil Rights Act and the Americans with Disabilities Act (ADA). Under Title VII, any assessment that produces a disparate impact on a protected group must be justified by business necessity and job-relatedness. The 4/5ths rule (also called the 80% rule) is the standard benchmark: if the pass rate for a protected group is less than 80% of the pass rate for the group with the highest pass rate, you may have a compliance problem.
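The arithmetic behind the 4/5ths rule is simple enough to sketch in a few lines of Python. The group labels and counts below are invented for illustration, and the function name is a placeholder. A ratio under 0.80 is a signal to investigate further, not a legal conclusion.

```python
def impact_ratios(pass_rates: dict[str, float]) -> dict[str, float]:
    """Divide each group's pass rate by the highest group's pass rate."""
    highest = max(pass_rates.values())
    if highest == 0:
        raise ValueError("no group has a non-zero pass rate")
    return {group: rate / highest for group, rate in pass_rates.items()}


# Made-up counts for illustration only: (passed, applied) per group.
counts = {"group_a": (48, 80), "group_b": (21, 50)}
rates = {group: passed / applied for group, (passed, applied) in counts.items()}

for group, ratio in impact_ratios(rates).items():
    flag = "review" if ratio < 0.8 else "ok"
    print(f"{group}: pass rate {rates[group]:.2f}, ratio {ratio:.2f} -> {flag}")
```

Here group_b passes at 42% against 60% for group_a, giving a ratio of 0.70, which falls below the 0.80 benchmark and would warrant a closer look.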

Cognitive ability tests, while highly valid, carry the highest adverse impact risk. AI and algorithmic tools also risk disparate impact under Title VII and the ADA, even when the vendor supplied the tool. You cannot outsource legal liability to a vendor. If your organization uses any AI-powered screening, scoring, or ranking tool, you are responsible for its outcomes.

“Cognitive tests have high validity but adverse impact ratios can be less than 0.80 for protected groups. AI and algorithmic tools risk disparate impact under Title VII and ADA even if vendor-provided.” — Adverse Impact and Hiring Standards

Practical steps for your job assessment compliance program include:

- Conduct a formal job analysis before selecting or building any assessment. Document which competencies are essential to the role.

- Validate job-relatedness of every tool you deploy. Content validity, criterion validity, and construct validity each serve a different evidentiary purpose.

- Monitor adverse impact data continuously. Track pass rates by race, gender, age, and disability status at each screening stage, not just at the final hire decision.

- Provide reasonable accommodations under the ADA for candidates who need extended time, alternative formats, or other modifications.

- Audit AI tools regularly. Request disparity data from vendors and conduct your own internal analyses annually.

- Document everything. Maintain records of your job analysis, validation studies, score distributions, and accommodation decisions.
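The stage-by-stage monitoring step above can be sketched as follows. This is a simplified Python illustration, assuming you can export one record per candidate per stage as a `(stage, group, passed)` tuple. The function name, record layout, and data are all hypothetical.

```python
from collections import defaultdict


def stage_impact_report(records, threshold=0.8):
    """Return {stage: {group: ratio}} for groups whose ratio falls below threshold.

    records is an iterable of (stage, group, passed) tuples.
    """
    # stage -> group -> [passed, total]
    tallies = defaultdict(lambda: defaultdict(lambda: [0, 0]))
    for stage, group, passed in records:
        tally = tallies[stage][group]
        tally[0] += int(passed)
        tally[1] += 1

    flagged = {}
    for stage, groups in tallies.items():
        rates = {g: p / n for g, (p, n) in groups.items()}
        highest = max(rates.values())
        if highest == 0:
            continue  # nobody passed this stage, so no ratio can be computed
        low = {g: r / highest for g, r in rates.items() if r / highest < threshold}
        if low:
            flagged[stage] = low
    return flagged


# Made-up data for illustration only.
records = (
    [("skills_screen", "group_a", True)] * 60 + [("skills_screen", "group_a", False)] * 40
    + [("skills_screen", "group_b", True)] * 55 + [("skills_screen", "group_b", False)] * 45
    + [("interview", "group_a", True)] * 30 + [("interview", "group_a", False)] * 30
    + [("interview", "group_b", True)] * 18 + [("interview", "group_b", False)] * 37
)

print(stage_impact_report(records))  # only the interview stage is flagged
```

In this invented dataset the skills screen looks fine on its own, while the interview stage shows a ratio of roughly 0.65 for group_b. Checking only the final hire decision would hide where in the funnel the gap opens up.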

The compliance workload is real, but it also produces better assessments. A tool that is genuinely job-relevant, fairly designed, and rigorously validated is also a more accurate predictor of performance. Fairness and quality are not in opposition here. They reinforce each other.

A fresh perspective: Why traditional hiring misses the mark

Beyond compliance, most hiring processes have a deeper problem that even experienced HR leaders often overlook: they optimize for familiarity, not potential.

A resume from a prestigious university or a recognizable employer creates a cognitive shortcut. The hiring manager thinks, “Someone else already vetted this person.” But that shortcut bakes in every bias those prior gatekeepers held. It also systematically undervalues candidates who took non-traditional paths, changed industries, or came from under-resourced backgrounds.

The evidence against credential-first hiring is striking. Among employers using skills assessments, 36% advance high-scoring candidates who lack traditional experience. Those candidates go on to perform at the same level as, or better than, credentialed peers. Meanwhile, unstructured interviews, still used by the majority of organizations, have significantly weaker predictive validity than structured methods and biodata tools.

What structured, evidence-based assessment actually unlocks is the ability to settle the skills vs. experience debate with real data rather than assumption. You stop asking, “Does this person look like our best performers?” and start asking, “Can this person do what our best performers do?” That shift improves quality, accelerates diversity outcomes, and builds pipelines that actually hold up over time. It also tends to improve retention because candidates hired for genuine fit, rather than surface-level similarity, tend to stay longer and grow faster.

How smarter tools can upgrade your assessments

To put these insights into practice, consider leveraging smarter assessment technology that handles the complexity for you.

Modern platforms like testask are built specifically for HR teams and hiring managers who need to move fast without sacrificing rigor. testask lets you generate tailored test tasks for any role in minutes, evaluate candidate submissions with AI-assisted analysis, and collaborate with your team on reviews inside a single workflow. You get structured, evidence-based assessment data without the overhead of building everything from scratch. Whether you’re managing a high-volume pipeline or filling a senior leadership role, testask gives you the tools to apply the models and compliance practices covered in this guide at scale.

Frequently asked questions

How does job assessment differ from traditional interviews?

Job assessment uses structured, evidence-based tools to evaluate measurable skills and behaviors, while traditional interviews often rely on subjective impressions. Structured interviews outperform unstructured ones with validity correlations of r=0.51 versus r=0.38.

What are the risks of using AI in job assessments?

AI tools can introduce bias or produce adverse impact outcomes even when the tool was built by a third-party vendor, making ongoing validation and monitoring essential. Algorithmic tools carry disparate impact risk under Title VII and the ADA regardless of who built them.

Which job assessment method is best for quality of hire?

Skills-based assessments and structured interviews consistently produce better hiring outcomes than resumes or education credentials alone. Education and experience show validity correlations of only r=0.10 to r=0.18, far below structured tools.

How can job assessments improve diversity?

Validated assessments evaluate candidates on job-relevant skills rather than credentials that carry socioeconomic bias, opening the door to a broader, more qualified talent pool. Pre-hire assessments have led 23% of employers to report measurable diversity improvements.