Pre-employment tests: A step-by-step hiring guide

You spend three weeks reviewing resumes, scheduling interviews, and making a confident offer. Then, two months in, it becomes clear the new hire can’t do the job. That scenario costs your team far more than time. Bad hires can set back projects, damage team morale, and run up real financial losses. Pre-employment testing is one of the most reliable tools available to stop that cycle before it starts. This guide walks you through building, administering, and evaluating effective pre-employment tests so your hiring decisions are grounded in data, not just gut instinct.

Table of Contents

- Why use pre-employment testing: Solving the hiring challenge

- Preparing for implementation: Requirements, tools, and compliance

- Building your screening process: Steps for effective pre-employment tests

- Troubleshooting and optimizing: Common mistakes and advanced strategies

- Perspective: Why most pre-employment test strategies miss the mark

- Next steps: Implementing smarter hiring with Testask

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Job-relevance matters | Tests must be tied to actual job requirements to avoid legal or ethical missteps. |
| Consistent administration | Every candidate should take tests under the same conditions for fairness. |
| Balance testing methods | Use multiple test types and weigh scores by predictive validity, not just gut feel. |
| Watch for bias | Monitor for adverse impact and cultural bias to improve diversity and reduce risk. |
| Accommodate disabilities | Ensure testing procedures are accessible and adjusted for all candidates with disabilities. |

Why use pre-employment testing: Solving the hiring challenge

Resumes and interviews tell part of the story. They don’t always tell the right part. A candidate can present well in an interview and still lack the core skills needed for the role. Pre-employment tests close that gap by giving you structured, measurable data on what candidates can actually do.

Common test types used in hiring:

- Cognitive ability tests measure general mental ability, reasoning, and problem-solving

- Skills assessments evaluate job-specific technical competencies

- Personality inventories assess behavioral traits and working style

- Situational judgment tests present realistic work scenarios to gauge decision-making

- Work sample tests require candidates to complete tasks directly relevant to the role

The predictive power of these tools varies. General mental ability (GMA) tests, for example, show a validity coefficient of r = 0.51, making them one of the strongest single predictors of job performance available. By contrast, years of experience reaches only r = 0.18, which means that using tenure as a proxy for skill is statistically weak.

Following assessment best practices ensures your tests actually predict what you need them to predict. If you’re just getting started, reviewing job assessment basics can help you understand the foundation.

| Test type | Predictive validity (r) | Best use case |
|---|---|---|
| GMA / Cognitive | 0.51 | Complex roles, fast learning required |
| Work sample tests | 0.54 | Technical and skilled trades roles |
| Structured interviews | 0.51 | Culture fit and communication |
| Personality inventories | 0.38 | Behavioral and team-fit screening |
| Years of experience | 0.18 | General screening only |

Potential pitfalls to watch for:

- Bias and adverse impact if tests aren’t validated for your specific population

- Over-reliance on a single test type, which narrows your view of a candidate

- Faking on personality assessments (research indicates up to 38% of candidates engage in impression management)

- Cultural bias embedded in test design, which can disadvantage qualified candidates

- ADA compliance failures when no accommodations are offered

According to SHRM’s Pre-Employment Testing Checklist, pre-employment tests must be job-related, validated for predicting job performance, administered consistently to all candidates under the same conditions, and used as one part of the selection process, not solely for decisions.

Pro Tip: Never make a hiring decision based on a single test score. Use test results as one input among several, including structured interviews and work samples.

With the clear impact established, let’s review what you need before setting up your own test process.

Preparing for implementation: Requirements, tools, and compliance

Launching a pre-employment testing program without preparation leads to legal exposure and unreliable results. Getting the groundwork right before you administer a single test protects your organization and makes your data trustworthy.

Legal requirements you need to address first:

- Job-relatedness: Every test you use must directly connect to the skills and responsibilities in the job description

- Consistent administration: All candidates for the same role must take the same test under equivalent conditions

- ADA accommodations: You must provide reasonable accommodations for candidates with disabilities, such as extended time or alternative formats

- Informed consent: Candidates should know what tests they’re taking, why, and how results will be used

- Data privacy: Test results must be stored securely and separately from general personnel files

Medical exams and drug tests carry additional rules. Medical exams must be post-offer only and job-related, with results kept confidential in files separate from personnel records. Drug tests require documented consent, a written policy outlining procedures and consequences, and full compliance with applicable state and federal laws, which vary significantly by jurisdiction.

“The goal of pre-employment testing is not to screen people out. It’s to find the best fit for the role while creating a fair and defensible process.” This distinction matters when selecting tools and communicating with candidates.

Choosing the right testing platform:

Your platform should support consistent delivery, automated scoring, and secure data storage. Look for tools that offer role-specific test customization, clear reporting dashboards, and built-in accommodation options. A well-built recruitment checklist will include platform selection as a key step before any testing goes live.

| Requirement | What to look for in a platform |
|---|---|
| Test customization | Ability to create or adapt tests by role |
| Consistent delivery | Standardized instructions and timing controls |
| ADA accommodations | Adjustable time limits, accessible formats |
| Reporting and scoring | Automated scoring with comparative analytics |
| Data security | Encrypted storage, role-based access control |

Validating tests locally:

Generic off-the-shelf tests aren’t always accurate for your specific workforce or role context. Local validation means gathering performance data from current employees, comparing it with their original test scores, and confirming the test actually predicts success in your organization. This step takes time but produces results you can defend legally and statistically.
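As a rough illustration of local validation, the sketch below computes a Pearson correlation between employees' original test scores and their later performance ratings. The function name and the sample data are hypothetical, and a real validation study would need a much larger sample and, ideally, review by an I/O psychologist.

```python
from math import sqrt


def validity_coefficient(test_scores, performance_ratings):
    """Pearson r between original test scores and later performance ratings."""
    n = len(test_scores)
    if n != len(performance_ratings):
        raise ValueError("each employee needs both a test score and a rating")
    if n < 3:
        raise ValueError("too few employees to estimate a correlation")

    mean_x = sum(test_scores) / n
    mean_y = sum(performance_ratings) / n
    cov = sum((x - mean_x) * (y - mean_y)
              for x, y in zip(test_scores, performance_ratings))
    var_x = sum((x - mean_x) ** 2 for x in test_scores)
    var_y = sum((y - mean_y) ** 2 for y in performance_ratings)
    if var_x == 0 or var_y == 0:
        raise ValueError("scores or ratings do not vary, so r is undefined")
    return cov / sqrt(var_x * var_y)


# Hypothetical data: test score at hire and a later manager rating (1-5).
test_scores = [62, 71, 55, 88, 79, 93, 67, 74]
performance = [3.1, 3.4, 2.8, 4.5, 3.9, 4.6, 3.0, 3.8]

r = validity_coefficient(test_scores, performance)
print(f"Local validity estimate: r = {r:.2f}")
```

A coefficient near zero would suggest the test is not predicting success in your organization, whatever its published validity.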

Pro Tip: Before going live with any test, run a pilot with internal candidates or a small applicant group. Use the results to refine scoring thresholds and catch any inconsistencies in how the test is delivered.

Now that requirements and safeguards are clear, let’s explore how to organize and execute a testing process that delivers results.

Building your screening process: Steps for effective pre-employment tests

A reliable pre-employment testing program doesn’t happen by chance. It follows a structured process from role definition through final evaluation. Here’s how to build one that works.

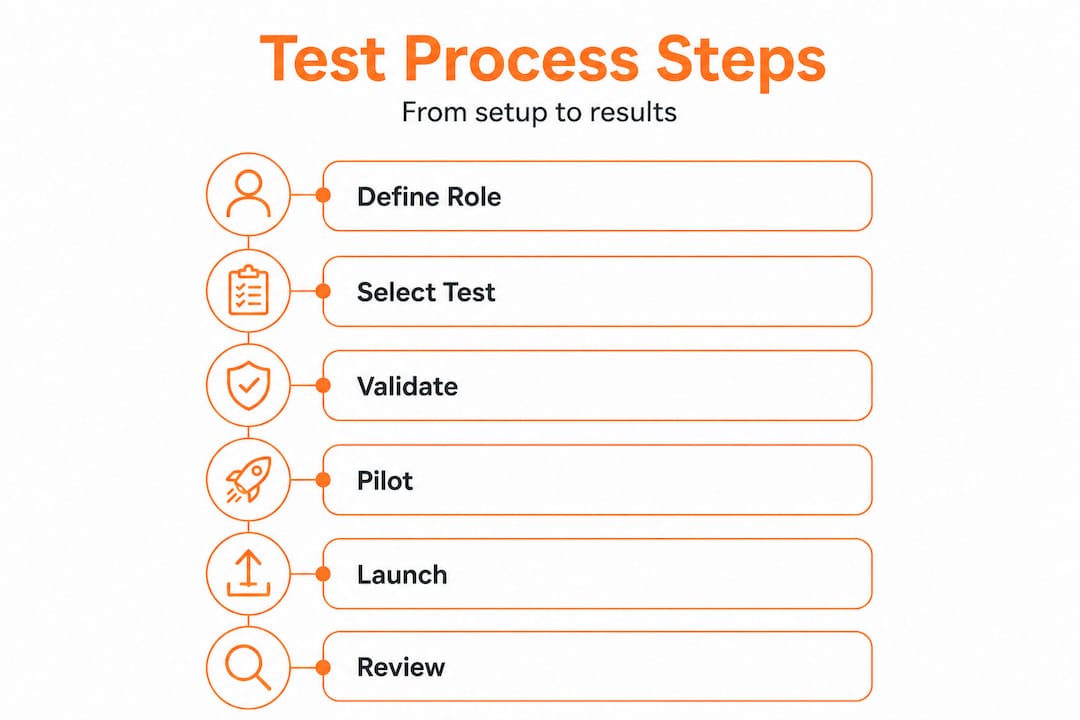

Step-by-step framework:

1. Conduct a job analysis. Identify the core competencies, knowledge areas, and behaviors required for success in the role. Talk to current high performers and their managers. Review the job description critically.

2. Select tests matched to competencies. Choose test types that directly measure the competencies identified. For a data analyst role, cognitive ability and technical skills tests are most relevant. For a customer service role, situational judgment and communication assessments may rank higher.

3. Choose a multi-hurdle or battery approach. A multi-hurdle process eliminates candidates who don’t meet a minimum threshold on one test before advancing to the next. This saves time and resources. A battery approach administers several tests at once and combines scores. Each has trade-offs depending on your volume and timeline.

4. Set normative or predictive benchmarks. Benchmark scores normatively or predictively to make sense of results. Normative benchmarking compares a candidate’s score against a reference group. Predictive benchmarking ties scores directly to job performance outcomes from your own data.

5. Weight test elements by validity. Not all tests carry equal predictive power. A practical weighting model might assign skills assessments 40% of the final score, cognitive ability 30%, and personality or situational judgment 30%. The HHS Hiring Assessment Strategies framework recommends weighting by validity to ensure the strongest predictors drive the outcome.

6. Administer tests consistently. Every candidate for the role gets the same test under the same conditions. Any deviation introduces bias and weakens your data.

7. Review and calibrate results. Use score distributions to identify cutoffs. Review cases where scores and interview feedback diverge. Investigate the root cause before making a decision.
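The validity-weighted model described in the steps above can be sketched in a few lines. This is a minimal example that assumes every test has already been converted to a common 0-100 scale; the dictionary keys and the function name are hypothetical, and the 40/30/30 split is the illustrative weighting from the text, not a recommendation for every role.

```python
# Illustrative weights from the framework above; they must sum to 1.
WEIGHTS = {"skills": 0.40, "cognitive": 0.30, "situational_judgment": 0.30}


def composite_score(scores, weights=WEIGHTS):
    """Weighted average of test scores that share a common 0-100 scale."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores: {sorted(missing)}")
    return sum(weights[name] * scores[name] for name in weights)


# Hypothetical candidate with three scaled test results.
candidate = {"skills": 82, "cognitive": 74, "situational_judgment": 90}
print(round(composite_score(candidate), 1))
```

Keeping the weights in one place makes it easy to revise them once your own validation data shows which tests predict performance best.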

The candidate screening process benefits enormously from this kind of structured approach. Without it, teams fall back on subjective judgment and inconsistency. For additional techniques on streamlining candidate evaluation, structured scoring frameworks make a measurable difference.

Strategies for avoiding over-testing:

- Limit your battery to three to four assessments maximum per role

- Place your most predictive test first to filter efficiently

- Track candidate completion rates; high drop-off signals the process is too long

- Review total test time; most candidates should finish in 60 to 90 minutes
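Tracking completion rates can be as simple as dividing the number of candidates who finish each assessment by the number who start it. The sketch below uses made-up stage names and counts and a hypothetical function name; what counts as "high" drop-off is something to judge against your own historical data.

```python
def completion_rates(funnel):
    """funnel: list of (stage, started, completed). Returns stage -> rate."""
    rates = {}
    for stage, started, completed in funnel:
        if started <= 0:
            raise ValueError(f"no candidates started stage {stage!r}")
        rates[stage] = completed / started
    return rates


# Hypothetical counts for a three-test battery.
funnel = [
    ("cognitive", 200, 170),
    ("skills", 170, 119),
    ("situational_judgment", 119, 113),
]

rates = completion_rates(funnel)
worst_stage = min(rates, key=rates.get)  # stage losing the largest share
for stage, rate in rates.items():
    print(f"{stage}: {rate:.0%} completed")
print(f"Largest drop-off: {worst_stage}")
```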

| Approach | Best for | Trade-off |
|---|---|---|
| Multi-hurdle | High-volume roles, early filtering | Requires clear cutoffs upfront |
| Battery | Comprehensive evaluation, senior roles | Longer for candidates, more data to analyze |
| Single test | Speed, basic screening | Limited predictive power alone |
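To make the multi-hurdle approach concrete, here is a minimal sketch in which candidates must clear each cutoff in order before moving on. The test names, cutoffs, and candidate data are hypothetical, and the function is illustrative rather than a prescribed method.

```python
def multi_hurdle(candidates, hurdles):
    """Apply ordered (test_name, minimum_score) hurdles.

    Returns (advancing, screened_out), where screened_out maps each
    rejected candidate to the test at which they were screened out.
    """
    remaining = dict(candidates)
    screened_out = {}
    for test_name, cutoff in hurdles:
        # Only candidates who cleared earlier hurdles are evaluated here,
        # so screened-out candidates never need scores for later tests.
        for name, scores in list(remaining.items()):
            if scores[test_name] < cutoff:
                screened_out[name] = test_name
                del remaining[name]
    return list(remaining), screened_out


# Hypothetical cutoffs and candidates; B never takes the cognitive test.
hurdles = [("skills", 70), ("cognitive", 60)]
candidates = {
    "A": {"skills": 85, "cognitive": 72},
    "B": {"skills": 55},
    "C": {"skills": 78, "cognitive": 58},
}

advancing, screened_out = multi_hurdle(candidates, hurdles)
print(advancing, screened_out)
```

A battery approach would instead collect every score first and combine them into a single composite.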

Pro Tip: Ask your top performers to complete your test battery before you use it with candidates. Their scores give you a performance benchmark grounded in real data.
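One simple way to use such a benchmark is a percentile rank: the share of the reference group scoring at or below a candidate. The sketch below assumes a small, made-up set of top-performer scores and a hypothetical function name; with a reference group this small, treat the result as a rough guide only.

```python
def percentile_rank(score, reference_scores):
    """Percent of the reference group scoring at or below `score`."""
    if not reference_scores:
        raise ValueError("reference group is empty")
    at_or_below = sum(1 for s in reference_scores if s <= score)
    return 100.0 * at_or_below / len(reference_scores)


# Hypothetical scores from ten current top performers.
top_performers = [78, 84, 69, 91, 88, 75, 80, 86, 73, 90]

candidate_percentile = percentile_rank(82, top_performers)
print(f"Candidate is at percentile {candidate_percentile:.0f} of the benchmark group")
```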

With the screening process set up, it’s important to recognize and troubleshoot the common mistakes and edge cases that can undermine your results.

Troubleshooting and optimizing: Common mistakes and advanced strategies

Even well-designed testing programs hit problems. Knowing what to watch for keeps your process effective and legally defensible over time.

ADA accommodations done right:

Accommodating candidates with disabilities isn’t optional, and it’s not just about compliance. Accommodation requests should be handled promptly, documented carefully, and applied consistently. Common accommodations include additional time, alternative test formats, screen reader compatibility, and separate testing environments. Failing to accommodate properly creates legal exposure and causes you to lose qualified candidates.

Managing bias and adverse impact:

Bias can enter at any stage: test design, scoring, or interpretation. Under the “four-fifths rule,” adverse impact is indicated when the selection rate for a protected group is less than 80% of the rate for the group with the highest selection rate. Monitor your results regularly by demographic group. If you see a pattern, investigate the test design and consult an industrial-organizational (I/O) psychologist.

According to SHRM’s guidance, cultural bias risks are real, and local validation is your best defense. A test built on norms from one workforce may not translate accurately to another.
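As a rough illustration of the four-fifths check, the sketch below divides each group's selection rate by the highest group's rate and flags any ratio under 0.8. The group labels, counts, and function names are hypothetical. A flag is a prompt to investigate, not a legal determination.

```python
def adverse_impact_ratios(outcomes):
    """outcomes: group -> (passed, total). Returns group -> impact ratio.

    The impact ratio is a group's selection rate divided by the
    highest selection rate among all groups.
    """
    rates = {}
    for group, (passed, total) in outcomes.items():
        if total <= 0:
            raise ValueError(f"group {group!r} has no candidates")
        rates[group] = passed / total
    top_rate = max(rates.values())
    if top_rate == 0:
        raise ValueError("no candidates passed in any group")
    return {group: rate / top_rate for group, rate in rates.items()}


def flag_adverse_impact(outcomes, threshold=0.8):
    """Groups whose impact ratio falls below the four-fifths threshold."""
    ratios = adverse_impact_ratios(outcomes)
    return sorted(g for g, ratio in ratios.items() if ratio < threshold)


# Hypothetical pass counts: 48 of 80 (60%) versus 30 of 75 (40%).
outcomes = {"group_a": (48, 80), "group_b": (30, 75)}

ratios = adverse_impact_ratios(outcomes)
flagged = flag_adverse_impact(outcomes)
print(ratios, flagged)
```

Here group_b's selection rate is about two-thirds of group_a's, which falls below the 0.8 threshold and would warrant a closer look at the test.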

Detecting faking on personality tests:

Up to 38% of candidates engage in some level of impression management on personality assessments. Some test publishers build in validity scales (built-in inconsistency checks) to detect faking. Look for tests with these features. You can also reduce faking risk by:

- Telling candidates that results will be validated through reference checks or behavioral interviews

- Using forced-choice formats that make it harder to identify the “right” answer

- Combining personality results with work sample tasks that are harder to fake

The broader job assessment guide covers these nuances in detail for teams building more sophisticated programs.

Optimizing your test mix:

The goal is the highest predictive value for the least candidate burden. Data shows that combining cognitive ability with structured interviews and work samples produces stronger predictions than any single test. Use data analytics in hiring to track which test combinations in your own pipeline best correlate with 90-day performance ratings.

| Risk | Prevention strategy |
|---|---|
| Adverse impact | Regular demographic analysis, local validation |
| Faking | Validity scales, forced-choice formats |
| ADA non-compliance | Documented accommodation process, accessible formats |
| Over-testing | Limit battery size, monitor completion rates |
| Legal challenge | Job-relatedness documentation, consistent administration |

AI in recruitment is increasingly being used to flag anomalous score patterns and support bias audits, giving HR teams faster feedback loops than manual review alone.

Pro Tip: Set a calendar reminder to review your testing program every six months. Roles evolve, and tests that were accurate two years ago may no longer reflect what success looks like today.

Perspective: Why most pre-employment test strategies miss the mark

Most articles about pre-employment testing stop at the “use multiple tests” advice. That’s necessary, but it’s not sufficient.

The deeper issue we see consistently is that organizations treat test selection as a one-time event. They pick a vendor, deploy a battery, and move on. Two years later, the tests are still running, the role has changed, and no one has checked whether the scores still predict anything meaningful.

The second common failure is uniform weighting. Teams assign equal weights to every test because it feels fair. But fairness in measurement means weighting by actual predictive value. If your cognitive ability test predicts performance far better than your personality inventory does, treating them equally wastes signal. The HHS framework recommends weighting by validity, so that the strongest predictors drive the outcome.

The third issue is the gap between test results and context. A score doesn’t tell you why a candidate answered the way they did. A low situational judgment score might reflect unfamiliarity with your industry’s terminology, not a lack of judgment. Strong programs build in a review step where scores that diverge significantly from interview feedback get a second look before a decision is made.

The organizations that get the most out of pre-employment testing treat it as a continuous system, not a one-time setup. They track hiring decisions through data analytics, connect test scores to downstream performance metrics, and refine their approach based on actual results. That feedback loop is what separates programs that improve over time from those that slowly drift toward irrelevance.

Next steps: Implementing smarter hiring with Testask

Building a rigorous pre-employment testing program requires the right tools behind it. You need a platform that supports test customization, consistent delivery, accommodation workflows, and score analysis at scale.

Testask is an AI-powered assessment platform built specifically for HR teams and hiring managers who need to move faster without sacrificing quality. You can generate tailored test tasks by role, automate candidate evaluation with AI-assisted scoring, and collaborate with your team on reviews in one centralized workspace. Built-in reporting surfaces the patterns in your data so you can optimize your test mix over time. Whether you’re screening for technical skills or behavioral fit, Testask helps you build a defensible, scalable process. Explore platform options and subscription plans to find the right fit for your team’s hiring volume and goals.

Frequently asked questions

What makes a pre-employment test valid and compliant?

A valid and compliant pre-employment test must be job-related, validated for performance prediction, administered the same way for all candidates, and used alongside other selection methods, not as the sole basis for a hiring decision.

How do you prevent bias in pre-employment testing?

Preventing bias requires careful test design, regular monitoring for adverse impact by demographic group, local validation against your own workforce, and accommodations for candidates with disabilities.

Can medical or drug tests be part of pre-employment assessments?

Yes, but with strict rules. Medical exams must be post-offer and job-related, with results stored separately from personnel files. Drug tests require candidate consent, a written policy, and compliance with state and federal laws.

How should test scores be combined for best hiring results?

Weight scores by predictive validity and benchmark results normatively against a relevant reference group. For example, assigning skills tests 40% and cognitive tests 30% of the final score reflects their relative predictive power and leads to better hiring outcomes.
